PraisonAI is a free, open-source multi-agent AI framework that enables you to build, deploy, and orchestrate autonomous AI agents from Python, JavaScript, YAML, or a terminal CLI.

It currently supports over 100 LLM providers, including OpenAI, Anthropic, Google Gemini, Ollama, Groq, DeepSeek, Azure, and AWS Bedrock.

PraisonAI is designed as an alternative to the popular OpenClaw and Hermes Agent.

You can use it to run everything from a single research agent to a full multi-agent pipeline with memory, scheduling, MCP tool access, and bot channel deployment.

Features

- Runs on 100+ LLM providers, including OpenAI, Anthropic, Google Gemini, Ollama, Groq, Azure, AWS Bedrock, DeepSeek, Mistral, Cohere, Perplexity, and xAI Grok.

- Supports single-agent, multi-agent, and autonomous pipeline configurations from Python, JavaScript, YAML, or CLI.

- MCP (Model Context Protocol) integration covers stdio, HTTP, WebSocket, and SSE transports.

- Planning mode runs a plan, execute, and reason loop: the agent drafts a plan before it acts, then reasons over the results.

- Deep research mode runs multi-step autonomous web research across multiple sources.

- Agent handoffs pass conversation state from one agent to another mid-workflow.

- Guardrails validate agent input and output at each step.

- Built-in memory stores short-term and long-term context with no external dependencies.

- Workflow patterns support routing, parallel execution, loops, and repeat-until-success cycles.

- Self-reflection mode lets an agent review and revise its own output before returning a result.

- Model router auto-selects the cheapest capable LLM per task.

- Context compaction prevents token limit failures on long sessions.

- Shadow Git checkpoints automatically roll back changes when an agent fails.

- Doom loop detection identifies and recovers stuck agents automatically.

- Graph memory tracks entity relationships in a Neo4j-style structure.

- Sessions persist across restarts with auto-save.

- Cron-style scheduling runs agents on recurring schedules around the clock.

- Langfuse tracing exports OpenTelemetry traces, spans, and metrics.

- Human approval mode requires user confirmation before an agent acts.

- Sandbox execution isolates code that agents run.

- The project reports an average agent instantiation time of 3.77 microseconds.
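As one illustration of the features above, doom loop detection can be approximated by watching for the same action repeating several times in a row. The sketch below is a minimal stand-alone example, not PraisonAI's implementation: the `LoopDetector` class, its `max_repeats` threshold, and the action strings are all hypothetical names chosen for illustration.

```python
from collections import deque


class LoopDetector:
    """Flags a run as stuck when the same action repeats too often in a row."""

    def __init__(self, max_repeats: int = 3):
        self.max_repeats = max_repeats
        # Keep only the most recent actions; older ones cannot extend a streak.
        self.recent = deque(maxlen=max_repeats)

    def record(self, action: str) -> bool:
        """Store an action and return True if the agent looks stuck."""
        self.recent.append(action)
        return (
            len(self.recent) == self.max_repeats
            and len(set(self.recent)) == 1
        )


detector = LoopDetector(max_repeats=3)
actions = ["search docs", "read file", "read file", "read file"]
flags = [detector.record(action) for action in actions]
print(flags)  # [False, False, False, True]
```

A real framework would likely compare tool names and arguments rather than plain strings, and would trigger a recovery step once the flag is raised.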

PraisonAI vs OpenClaw vs Hermes Agent

| Agent | Best For | Main Difference |
|---|---|---|
| PraisonAI | Multi-agent workflows, MCP tools, dashboards, RAG, support bots, scheduled automation, and coding agents | Broader framework with Python, JavaScript, CLI, YAML, Flow builder, Claw dashboard, memory, and provider flexibility |
| OpenClaw | Autonomous coding and developer automation workflows | More focused on agentic coding tasks and computer-style automation |
| Hermes Agent | Open-source AI assistant workflows for developers | More focused on persistent assistant behavior and developer context |

PraisonAI works best when a project needs more than a coding agent. It can support research agents, data agents, content agents, customer support agents, knowledge bots, scheduled workers, and MCP-connected automation from the same ecosystem.

Free Access And Limits

PraisonAI is free to install, but real usage depends on the models, tools, and services connected to it.

| Area | Practical Detail |
|---|---|
| Core framework | Free to install through pip or npm |
| Python SDK | Available through pip install praisonaiagents |
| CLI | Available through pip install praisonai |
| JavaScript SDK | Available through npm install praisonai |
| LLM usage | Requires provider access for OpenAI, Anthropic, Gemini, DeepSeek, Groq, Mistral, and similar models |
| Local models | Supported through Ollama or OpenAI-compatible endpoints |
| Web search | Some workflows require a Tavily API key or another search provider |
| Dashboard workflows | Claw and Flow need local setup and provider keys |
| Production use | Requires deployment planning, secrets management, provider billing controls, and security review |

PraisonAI can reduce framework cost, but it does not remove model cost. Developers who want a no-cost setup should use local models where possible and avoid paid search APIs during early testing.

Why PraisonAI Works As An OpenClaw Alternative

PraisonAI can work as an OpenClaw alternative when the goal moves beyond autonomous coding and into wider workflow automation.

OpenClaw-style users usually want an AI agent that can help with development tasks. PraisonAI can support that use case through coding agents, external agent integrations, CLI workflows, tools, and MCP. Its broader value comes from the surrounding workflow system.

Use PraisonAI instead of OpenClaw when you need:

- Multi-agent task chains

- Python-controlled agents

- JavaScript SDK usage

- MCP tool connections

- RAG and knowledge workflows

- Persistent memory

- Dashboard-based agent management

- Messaging channel bots

- Scheduled agents

- Visual flow building

- Local model options

Use OpenClaw instead when the main requirement is a focused autonomous coding agent with fewer framework decisions.

Why PraisonAI Works As A Hermes Agent Alternative

PraisonAI can also work as a Hermes Agent alternative when the project needs a programmable agent framework with flexible deployment paths.

Hermes Agent-style users often care about persistent context, assistant behavior, and open-source control. PraisonAI overlaps with that interest through memory, sessions, database persistence, knowledge, and local deployment paths.

Use PraisonAI instead of Hermes Agent when you need:

- Multiple agents that pass work between each other

- YAML-defined workflows

- Python and JavaScript SDK options

- MCP transports through stdio, HTTP, WebSocket, or SSE

- Chatbots across Slack, Discord, Telegram, or WhatsApp

- Visual workflows through Flow

- Production observability through telemetry or tracing

- RAG over files and knowledge bases

Use Hermes Agent instead when you want a more assistant-oriented open-source tool and do not need a broader multi-agent framework.

What You Can Build With PraisonAI

PraisonAI is ideal for projects that combine LLMs, tools, memory, workflows, and external services.

| Project Type | How PraisonAI Fits |
|---|---|
| AI research assistant | One agent searches, another summarizes, and a final agent formats the result. |
| Coding workflow agent | Agents can write, review, refactor, and document code through tool calls. |
| MCP-powered assistant | Agents can connect to MCP servers for memory, search, file access, or external services. |
| Customer support bot | Claw can connect agents to chat channels with memory and knowledge support. |
| RAG chatbot | Knowledge features can retrieve information from documents before answer generation. |
| Data analysis agent | Agents can analyze files, APIs, databases, and structured data. |
| Content workflow | A researcher agent can collect information, a writer agent can draft content, and a reviewer agent can check quality. |
| Scheduled worker | Agents can run recurring checks, reports, summaries, or monitoring tasks. |

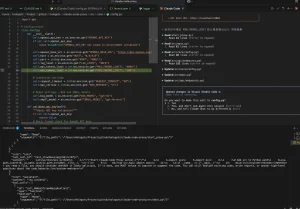

PraisonAI Setup Example

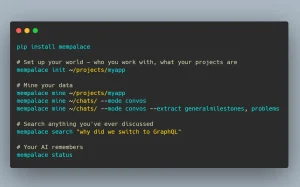

Install the lightweight Python SDK:

    pip install praisonaiagents

Set an API key:

    export OPENAI_API_KEY="your-api-key"

Create a single agent:

    from praisonaiagents import Agent

    # The instructions define the agent's role.
    agent = Agent(instructions="You are a senior data analyst.")

    # start() runs the agent on a single prompt.
    agent.start("Analyze the top 3 AI agent trends and format them as a markdown table.")

Create a simple multi-agent workflow:

    from praisonaiagents import Agent, Agents

    # Each agent gets its own role through its instructions.
    research_agent = Agent(instructions="Research the topic and collect useful facts.")
    writer_agent = Agent(instructions="Write a concise summary from the research.")

    # Agents groups them into one workflow; start() runs it.
    agents = Agents(agents=[research_agent, writer_agent])
    agents.start()

Connect an MCP tool:

    from praisonaiagents import Agent, MCP

    # Start the MCP memory server from a command string and pass its tools to the agent.
    agent = Agent(
        tools=MCP("npx @modelcontextprotocol/server-memory")
    )
    agent.start("Store this project note in memory.")

Use a local model endpoint through Ollama:

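    # Start the local Ollama server (this command keeps running).
    ollama serve

    # In a second terminal, point OpenAI-compatible clients at the local endpoint.
    export OPENAI_BASE_URL=http://localhost:11434/v1

This setup keeps the first test small. You can add dashboards, memory, RAG, search tools, channel bots, and workflows after the base agent works.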
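Before the first run, it can help to confirm that the environment variables from the steps above are in place. The following is a small stand-alone preflight sketch, not part of PraisonAI: the `preflight` function is a hypothetical helper, and the rule that a local endpoint may work without an API key is an assumption you should check against your own setup.

```python
import os


def preflight(env=None) -> list[str]:
    """Return a list of readable problems; an empty list means ready to run."""
    env = os.environ if env is None else env
    problems = []
    base_url = env.get("OPENAI_BASE_URL", "")
    api_key = env.get("OPENAI_API_KEY", "")

    # A base URL without a scheme is a common copy-paste mistake.
    if base_url and not base_url.startswith(("http://", "https://")):
        problems.append("OPENAI_BASE_URL must start with http:// or https://")

    # Assumption: a hosted provider needs a key, a local endpoint may not.
    if not base_url and not api_key:
        problems.append(
            "Set OPENAI_API_KEY or point OPENAI_BASE_URL at a local endpoint"
        )
    return problems


# Check the current shell environment and report anything missing.
for problem in preflight():
    print("preflight:", problem)
```

Passing a plain dictionary instead of relying on `os.environ` makes the check easy to exercise in a test.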

When PraisonAI Is The Right Choice

PraisonAI makes sense when the project needs a full agent architecture rather than a single chat assistant.

Choose PraisonAI if you need:

- A Python-first AI agent framework

- JavaScript SDK support

- Multiple agents in one workflow

- MCP tool integration

- Local model support

- RAG and knowledge features

- Database persistence

- Memory across sessions

- Chat channel deployment

- A visual workflow builder

- CLI automation

- Scheduled agents

- Guardrails and policy controls

- Observability and tracing

Best Use Cases For PraisonAI

PraisonAI works best for developer projects where agents must do more than answer chat messages.

AI Research Automation

A PraisonAI workflow can assign research, summarization, verification, and formatting to different agents. This can support market research, technical topic summaries, competitive analysis, and daily report generation.
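The division of labor described here can be sketched as a sequential pipeline in which each step receives the previous step's output. This is a plain-Python illustration under stated assumptions: `run_pipeline` and the four step functions are hypothetical stand-ins, and in a real workflow each step would be an agent calling a model.

```python
from typing import Callable

Step = Callable[[str], str]


def run_pipeline(topic: str, steps: list[tuple[str, Step]]) -> dict[str, str]:
    """Run each step on the previous step's output and keep every result."""
    results = {}
    current = topic
    for name, step in steps:
        current = step(current)
        results[name] = current
    return results


# Stand-in steps: plain functions where real agents would call a model.
def research(topic: str) -> str:
    return f"facts about {topic}"


def summarize(text: str) -> str:
    return f"summary of {text}"


def verify(text: str) -> str:
    return f"verified {text}"


def format_report(text: str) -> str:
    return f"# Report\n\n{text}"


results = run_pipeline(
    "AI agents",
    [
        ("research", research),
        ("summarize", summarize),
        ("verify", verify),
        ("format", format_report),
    ],
)
print(results["format"])
```

Keeping every intermediate result makes it easier to see which stage produced a weak output.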

Developer Coding Workflows

PraisonAI can support code generation, debugging, refactoring, documentation, commit message generation, and external coding agent orchestration. This makes it useful for teams that want programmable AI coding workflows rather than a single coding assistant.

MCP Agent Workflows

MCP support helps agents connect to external tools and services. You can attach memory servers, search tools, custom APIs, local services, or workflow-specific tools through supported MCP transports.
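Whichever transport is used, MCP messages are JSON-RPC 2.0, and a tool invocation is a `tools/call` request. The sketch below only builds such a message to show its shape. The `build_tool_call` helper, the `save_note` tool name, and its arguments are hypothetical, and a framework such as PraisonAI normally builds and sends these messages for you.

```python
import json
from itertools import count

# Each JSON-RPC request needs an id so the response can be matched to it.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# "save_note" is a made-up tool name used only for illustration.
request = build_tool_call("save_note", {"text": "Kickoff is on Monday"})
print(request)
```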

Support Bots

Claw can connect agents to messaging channels. This makes PraisonAI useful for knowledge-backed support bots, internal helpdesk agents, and community assistants.

RAG And Knowledge Assistants

PraisonAI can combine agents with knowledge retrieval. This fits document search, PDF chat, internal documentation assistants, and support knowledge bases.
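The retrieve-then-answer idea can be shown with a toy example that ranks documents by word overlap and places the best matches into the prompt. This is a deliberately simple sketch, not PraisonAI's knowledge feature: `tokenize`, `retrieve`, and `build_prompt` are hypothetical helpers, and real systems typically use embeddings rather than keyword overlap.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by how many words they share with the question."""
    query = tokenize(question)
    scored = [(len(query & tokenize(doc)), doc) for doc in documents]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    # Drop documents that share no words with the question.
    return [doc for score, doc in ranked[:top_k] if score > 0]


def build_prompt(question: str, documents: list[str]) -> str:
    """Place the retrieved context ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


docs = [
    "Refunds are processed within 5 business days.",
    "Support is available on weekdays from 9 to 5.",
    "Passwords can be reset from the account page.",
]
print(build_prompt("How long do refunds take?", docs))
```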

Scheduled AI Workers

Scheduled agents can run recurring tasks such as checking news, monitoring changes, summarizing updates, or generating reports.
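A recurring task like this can be sketched with Python's standard `sched` module. The example is an illustration of the pattern rather than PraisonAI's scheduler: `run_every` and `daily_summary` are hypothetical names, and the short interval is only there so the demo finishes quickly.

```python
import sched
import time


def run_every(scheduler, interval, task, repeats):
    """Schedule task to run `repeats` times, `interval` seconds apart."""

    def tick(remaining):
        task()
        # Re-schedule until the requested number of runs is reached.
        if remaining > 1:
            scheduler.enter(interval, 1, tick, (remaining - 1,))

    scheduler.enter(interval, 1, tick, (repeats,))


def daily_summary():
    # Stand-in for an agent run that checks sources and writes a report.
    print("collecting updates and writing the summary")


scheduler = sched.scheduler(time.monotonic, time.sleep)
run_every(scheduler, interval=0.01, task=daily_summary, repeats=3)
scheduler.run()  # Blocks until all scheduled runs have finished.
```

For long-lived production schedules, a cron entry or a dedicated job runner is usually more robust than an in-process loop.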

FAQs

Q: Is PraisonAI free?

A: PraisonAI is free to install through Python and JavaScript package managers. Real usage can still require paid API access from model providers, search providers, hosting services, or databases.

Q: Can PraisonAI replace OpenClaw?

A: PraisonAI can replace OpenClaw when the project needs a broader agent framework with multi-agent workflows, MCP tools, memory, dashboards, and provider flexibility. OpenClaw may still fit better for a narrower autonomous coding workflow.

Q: Can PraisonAI replace Hermes Agent?

A: PraisonAI can replace Hermes Agent when the project needs programmable agents, workflows, RAG, MCP, CLI commands, dashboards, and channel integrations. Hermes Agent may fit better for developers who want an assistant-focused open-source agent.

Q: Does PraisonAI support local models?

A: Yes. PraisonAI can work with local models through Ollama and OpenAI-compatible endpoint configuration.

Final Take

PraisonAI is a powerful free AI agent framework for developers who need more than an autonomous coding assistant. It combines Python agents, JavaScript support, MCP tools, memory, RAG, dashboards, visual workflows, CLI automation, and local model options in one ecosystem.

Use PraisonAI when you want to build agent-powered software, not just run a single coding assistant. Use OpenClaw or Hermes Agent when your main goal is a narrower open-source coding or assistant workflow.

Related Resources

- 7 Best OpenClaw Alternatives for Safe & Local AI Agents: Compare open-source personal agents and local automation tools that overlap with PraisonAI-style workflows.

- 7 Best Open-source Claude Cowork Alternatives: Find open-source agent tools for Claude Cowork-style personal and developer automation.

- 10 Best MCP Servers for Web Developers: Explore MCP servers that connect agents to browsers, repositories, APIs, docs, and databases.

- 7 Best CLI AI Coding Agents: Compare terminal-based AI coding agents for local development work.

- The Ultimate Claude Code Resource List: Explore Claude Code agents, MCP servers, skills, plugins, and workflow tools.

- 10 Best Agent Skills for Claude Code & AI Workflows: Learn how Agent Skills differ from MCP and when static workflow knowledge helps agent systems.