OpenMontage is a free, open-source, agentic video production system that turns an AI coding assistant (Claude Code, Codex, etc.) into a full-featured AI video studio.

It takes a plain-language prompt and runs the full production chain: research, scripting, asset generation, editing, and final composition.

Most AI video tools animate still images. OpenMontage can also pull real motion footage from Archive.org, NASA, Wikimedia Commons, Pexels, and Pixabay, cut it into a proper timeline, and render a finished piece.

A dedicated documentary montage pipeline handles this path automatically, with no paid video generation API required.

Features

- Runs 15-25+ live web searches across YouTube, Reddit, news sites, and academic sources before writing the script.

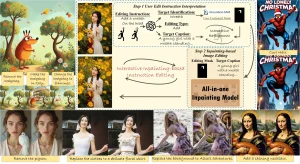

- Selects providers through a 7-dimension scoring engine: task fit (30%), output quality (20%), control features (15%), reliability (15%), cost efficiency (10%), latency (5%), and continuity (5%).

- Logs every creative and technical choice in a persistent audit trail, including alternatives considered, confidence scores, and reasoning, across all production stages.

- Scores incoming requests against a 6-dimension slideshow risk metric to block “animated PowerPoint” outputs before rendering starts.

- Runs post-render self-review after every output: ffprobe validation, frame extraction at 4 positions to detect black frames and broken overlays, audio level analysis, and subtitle verification.

- Accepts a YouTube Short, Reel, TikTok, or local clip as a reference and produces a differentiated production plan with pacing analysis, cost estimates, and a sample before full generation begins.

- Picks between two rendering engines: Remotion for data-driven and image-scene compositions, and HyperFrames for motion-graphics-heavy briefs expressed as HTML and GSAP.

- Supports local GPU video generation with open video models like WAN, Hunyuan, LTX-Video, and CogVideo.

- Applies visual style playbooks (Clean Professional, Flat Motion Graphics, Minimalist Diagram) that control typography, color palettes, motion styles, and audio profiles consistently across all generated assets.

- Exports to platform-specific render profiles, including YouTube (1920×1080), YouTube 4K (3840×2160), Shorts, Instagram Reels, Instagram Feed (1080×1080), TikTok, LinkedIn, and Cinematic (2560×1080 21:9).

- Generates real finished videos with zero API keys: Piper TTS handles offline narration, stock images from Pexels and Pixabay supply visuals, and Remotion animates them into a polished video with transitions, text overlays, and synced captions.
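
The provider-selection weights listed above amount to a weighted sum. The following is a minimal sketch of that arithmetic, not OpenMontage's actual engine: only the seven weights come from the feature list, while the function names, the 0-to-1 rating scale, and the sample ratings are assumptions made for illustration.

```python
# Weights from the feature list above. Everything else here is hypothetical.
WEIGHTS = {
    "task_fit": 0.30,
    "output_quality": 0.20,
    "control_features": 0.15,
    "reliability": 0.15,
    "cost_efficiency": 0.10,
    "latency": 0.05,
    "continuity": 0.05,
}


def score_provider(ratings: dict) -> float:
    """Return the weighted sum of per-dimension ratings (assumed to be in [0, 1])."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[dim] * ratings[dim] for dim in WEIGHTS)


def pick_provider(candidates: dict) -> str:
    """Return the name of the candidate with the highest weighted score."""
    return max(candidates, key=lambda name: score_provider(candidates[name]))


# Made-up ratings for two placeholder providers.
candidates = {
    "provider_a": {
        "task_fit": 0.9, "output_quality": 0.6, "control_features": 0.5,
        "reliability": 0.8, "cost_efficiency": 0.4, "latency": 0.5,
        "continuity": 0.5,
    },
    "provider_b": {
        "task_fit": 0.4, "output_quality": 0.9, "control_features": 0.6,
        "reliability": 0.7, "cost_efficiency": 0.9, "latency": 0.9,
        "continuity": 0.5,
    },
}

if __name__ == "__main__":
    for name, ratings in candidates.items():
        print(f"{name}: {score_provider(ratings):.3f}")
    print("selected:", pick_provider(candidates))
```

Because task fit carries 30% of the weight, a provider that matches the task well can outrank one that is cheaper and faster, which is what happens with the sample ratings here.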

Use Cases

- Create narrated, captioned educational explainer videos on technical topics, with the agent handling live web research and asset sourcing.

- Repurpose a long-form podcast into a batch of ranked short-form clips for social distribution using the Clip Factory pipeline.

- Cut a documentary-style montage from free archival footage on Archive.org, NASA, and Wikimedia Commons.

- Build a product launch teaser or brand film using AI-generated images combined with stock footage, narration, and auto-sourced royalty-free music.

- Translate and dub existing video into multiple languages using the Localization and Dub pipeline.

- Paste a reference video from YouTube or TikTok and receive 2-3 differentiated production concepts with cost estimates before committing to full generation.

- Pass screen recordings through the Screen Demo pipeline to generate product demos and documentation videos.

Get Started

Prerequisites

OpenMontage requires the following before setup:

- Python 3.10 or later

- FFmpeg

- Node.js 18 or later

- An AI coding assistant: Claude Code, Cursor, GitHub Copilot, Windsurf, or Codex
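
Before running setup, it can help to confirm the three tool prerequisites from a script. The sketch below is not part of OpenMontage. It assumes the executables are named `ffmpeg` and `node` on your PATH, and the helper names are hypothetical.

```python
import re
import shutil
import subprocess
import sys
from typing import Optional


def parse_major(version_text: str) -> Optional[int]:
    """Extract the major version from text such as 'v18.19.0'."""
    match = re.search(r"(\d+)\.\d+", version_text)
    return int(match.group(1)) if match else None


def check_prerequisites() -> dict:
    """Report whether Python 3.10+, FFmpeg, and Node.js 18+ are available."""
    results = {
        "python>=3.10": sys.version_info >= (3, 10),
        "ffmpeg": shutil.which("ffmpeg") is not None,
    }
    node_ok = False
    node = shutil.which("node")
    if node:
        try:
            # `node --version` prints something like "v18.19.0".
            out = subprocess.run(
                [node, "--version"], capture_output=True, text=True
            ).stdout
            major = parse_major(out)
            node_ok = major is not None and major >= 18
        except OSError:
            node_ok = False
    results["node>=18"] = node_ok
    return results


if __name__ == "__main__":
    for name, ok in check_prerequisites().items():
        print(f"{'OK' if ok else 'MISSING':8} {name}")
```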

Install

git clone https://github.com/calesthio/OpenMontage.git

cd OpenMontage

make setup

If make is unavailable, run the commands manually:

pip install -r requirements.txt && cd remotion-composer && npm install && cd .. && pip install piper-tts && cp .env.example .env

On Windows, if npm install fails with ERR_INVALID_ARG_TYPE, substitute:

npx --yes npm install

Run Your First Video

Open the project directory in your AI coding assistant and enter a plain-language prompt:

"Make a 60-second animated explainer about how neural networks learn"

The agent picks a pipeline, runs live web research, writes the script, generates or sources assets, renders the video, and self-reviews the output. It pauses for approval at creative decision points before proceeding.

For the real-footage path, prompt specifically:

"Make a 75-second documentary montage about city life in the rain. Use real footage only, no narration, elegiac tone, with music."

Run demo videos at any time with no API keys:

make demo

Check Available Capabilities

These commands display which tools and providers are active with your current API key configuration:

python -c "from tools.tool_registry import registry; import json; registry.discover(); print(json.dumps(registry.support_envelope(), indent=2))"

python -c "from tools.tool_registry import registry; import json; registry.discover(); print(json.dumps(registry.provider_menu(), indent=2))"

Add API Keys (All Optional)

Keys go into the .env file. Every key is optional:

| Key | Provider | Unlocks |
|---|---|---|
| FAL_KEY | fal.ai | FLUX images + Google Veo, Kling, MiniMax video, Recraft images |
| PEXELS_API_KEY | Pexels | Free stock footage and images |
| PIXABAY_API_KEY | Pixabay | Free stock footage and images |
| UNSPLASH_ACCESS_KEY | Unsplash | Free stock images |
| SUNO_API_KEY | Suno AI | Full song generation, any genre, up to 8 minutes |
| ELEVENLABS_API_KEY | ElevenLabs | Premium TTS, AI music, sound effects |
| OPENAI_API_KEY | OpenAI | TTS, DALL-E 3 images |
| XAI_API_KEY | xAI | Grok image generation and video generation |
| GOOGLE_API_KEY | Google | Imagen 4 images, Google TTS (700+ voices, 50+ languages) |
| HEYGEN_API_KEY | HeyGen | Gateway to VEO, Sora, Runway, and Kling |
| RUNWAY_API_KEY | Runway | Runway Gen-4 direct |
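
To see at a glance which of these optional keys are filled in, a small script can scan the .env file. This is a simplified sketch, not a substitute for the registry commands above: the key names come from the table, but the parser and function names are hypothetical, and it assumes plain `KEY=value` lines.

```python
from pathlib import Path

# Key names from the table above.
OPTIONAL_KEYS = [
    "FAL_KEY", "PEXELS_API_KEY", "PIXABAY_API_KEY", "UNSPLASH_ACCESS_KEY",
    "SUNO_API_KEY", "ELEVENLABS_API_KEY", "OPENAI_API_KEY", "XAI_API_KEY",
    "GOOGLE_API_KEY", "HEYGEN_API_KEY", "RUNWAY_API_KEY",
]


def parse_env(text: str) -> dict:
    """Parse simple KEY=value lines, skipping blanks and # comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def configured_keys(env_text: str) -> list:
    """Return the optional keys that have a non-empty value."""
    values = parse_env(env_text)
    return [key for key in OPTIONAL_KEYS if values.get(key)]


if __name__ == "__main__":
    env_path = Path(".env")
    text = env_path.read_text() if env_path.exists() else ""
    found = configured_keys(text)
    print("Configured:", ", ".join(found) if found else "none (zero-key mode)")
```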

Enable Local GPU Video Generation

GPU-equipped machines can run video generation at no cost:

make install-gpu

Then add to .env:

VIDEO_GEN_LOCAL_ENABLED=true

VIDEO_GEN_LOCAL_MODEL=wan2.1-1.3b

Budget Controls

The system estimates cost before execution. Set the budget mode in config.yaml:

| Mode | Behavior |
|---|---|
| observe | Tracks spend, takes no action |
| warn | Logs overruns |
| cap | Enforces a hard spend limit |

The default total budget cap is $10. The per-action approval threshold defaults to $0.50. Both values are configurable.
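
One way to picture how the three modes and the two defaults interact is sketched below. This is an illustration under stated assumptions, not OpenMontage's budget code: the `BudgetGuard` class and its methods are hypothetical, the comparison operators are guesses, and the source does not say how the approval threshold combines with each mode.

```python
from dataclasses import dataclass, field


@dataclass
class BudgetGuard:
    """Hypothetical model of the observe / warn / cap modes described above."""
    mode: str                          # "observe", "warn", or "cap"
    total_cap: float = 10.00           # default total budget cap from the text
    approval_threshold: float = 0.50   # default per-action threshold from the text
    spent: float = 0.0
    log: list = field(default_factory=list)

    def check(self, estimated_cost: float) -> str:
        """Return 'allow', 'needs_approval', or 'block' for a planned action."""
        projected = self.spent + estimated_cost
        if projected > self.total_cap:
            if self.mode == "cap":
                return "block"  # hard limit: refuse the action
            if self.mode == "warn":
                self.log.append(
                    f"overrun: projected ${projected:.2f} "
                    f"exceeds cap ${self.total_cap:.2f}"
                )
            # "observe" only tracks spend and takes no action.
        if estimated_cost > self.approval_threshold:
            return "needs_approval"
        return "allow"

    def record(self, actual_cost: float) -> None:
        """Add an executed action's cost to the running total."""
        self.spent += actual_cost


if __name__ == "__main__":
    guard = BudgetGuard(mode="cap")
    print(guard.check(0.25))   # small action under both limits
    print(guard.check(0.75))   # above the per-action threshold
    guard.record(9.90)
    print(guard.check(0.25))   # would push total spend past the cap
```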

Reference Video Workflow

Paste a video URL from YouTube, TikTok, Reels, or Shorts into your AI coding assistant alongside a prompt:

"Here's a YouTube Short I love. Make me something like this, but about quantum computing."

The agent analyzes the transcript, pacing, scenes, keyframes, and style of the reference. It returns 2-3 production concepts that differ in visual treatment, tone, and approach, along with cost estimates and a sample. Full asset generation starts only after the user picks a direction.

Pipelines

| Pipeline | Output | Best For |
|---|---|---|
| Animated Explainer | Narrated explainer with research, visuals, music | Educational content, tutorials |
| Animation | Motion graphics, kinetic typography | Social media, product demos |
| Avatar Spokesperson | Avatar-driven presenter video | Corporate comms, training |
| Cinematic | Trailer, teaser, mood-driven edits | Brand films, promos |
| Clip Factory | Batch of ranked short-form clips from one source | Repurposing long content |
| Documentary Montage | Thematic montage from stock and archival footage | Video essays, mood pieces, real-footage videos |
| Hybrid | Source footage + AI-generated support visuals | Enhancing existing footage |
| Localization & Dub | Subtitled, dubbed, translated video | Multi-language distribution |
| Podcast Repurpose | Podcast highlights to video | Podcast marketing |
| Screen Demo | Polished screen recordings | Product demos, documentation |
| Talking Head | Footage-led speaker video | Presentations, vlogs, interviews |

Pros

- Generates genuine video from real footage using free stock and open archives.

- Works with zero API keys using Piper TTS, local models, and free stock sources.

- Provides 12 unique production pipelines.

- Supports both cloud APIs and local GPU models.

- Includes reference-driven creation to ground production plans in existing videos.

- Enforces quality gates that prevent slideshow-style outputs and verify render integrity.

- Logs all decisions in an auditable trail for complete transparency.

- Offers built-in budget controls with cost estimation and spend caps.

- Fully open source and self-hostable.

Cons

- Requires Python 3.10+, Node.js 18+, FFmpeg, and an AI coding assistant.

- Orchestration quality depends on the AI coding assistant used.

- The free path works, but premium APIs expand output quality, voice quality, and provider variety.

Related Resources

- OpenMontage Agent Guide: Operating guide and contract for agentic workflows, including pipeline selection and tool usage rules.

- OpenMontage Providers Guide: Full provider list with pricing, free tiers, and setup instructions.

- Piper TTS: The free, offline text-to-speech engine used for zero-key narration.

- Claude Code: Anthropic’s agentic coding assistant, one of the primary AI coding assistants compatible with OpenMontage.

- fal.ai: The image and video generation gateway that connects OpenMontage to FLUX, Kling, Google Veo, MiniMax, and Recraft with a single API key.

FAQs

Q: Do I need to pay for API keys to use OpenMontage?

A: No. The zero-key setup produces real videos using Piper TTS for narration, Pexels and Pixabay for stock images (both offer free developer keys), and Remotion for animated composition with transitions, text overlays, and word-level captions.

Q: What AI coding assistants work with OpenMontage?

A: OpenMontage works with any AI coding assistant that can read files and execute Python. Dedicated configuration files are included for Claude Code (CLAUDE.md), Cursor (CURSOR.md), GitHub Copilot (COPILOT.md), Codex (CODEX.md), and Windsurf (.windsurfrules).

Q: Is OpenMontage producing real video or just animating images?

A: Both paths exist. The Documentary Montage pipeline builds a searchable corpus from real motion footage on Archive.org, NASA, Wikimedia Commons, Pexels, and Pixabay, then edits those clips into a proper timeline. Other pipelines generate AI video clips using providers like Kling, Google Veo, Runway, or local GPU models. The image-based path uses Remotion to animate still images with spring physics and cinematic motion.

Q: How does the reference video feature work?

A: Paste a YouTube, TikTok, Reel, or Shorts URL into your AI coding assistant with a description of the video you want to create. The agent analyzes the reference video’s transcript, pacing, scene structure, and style. It then produces 2-3 differentiated production concepts with cost estimates and a sample output. Full asset generation starts only after you select a direction.