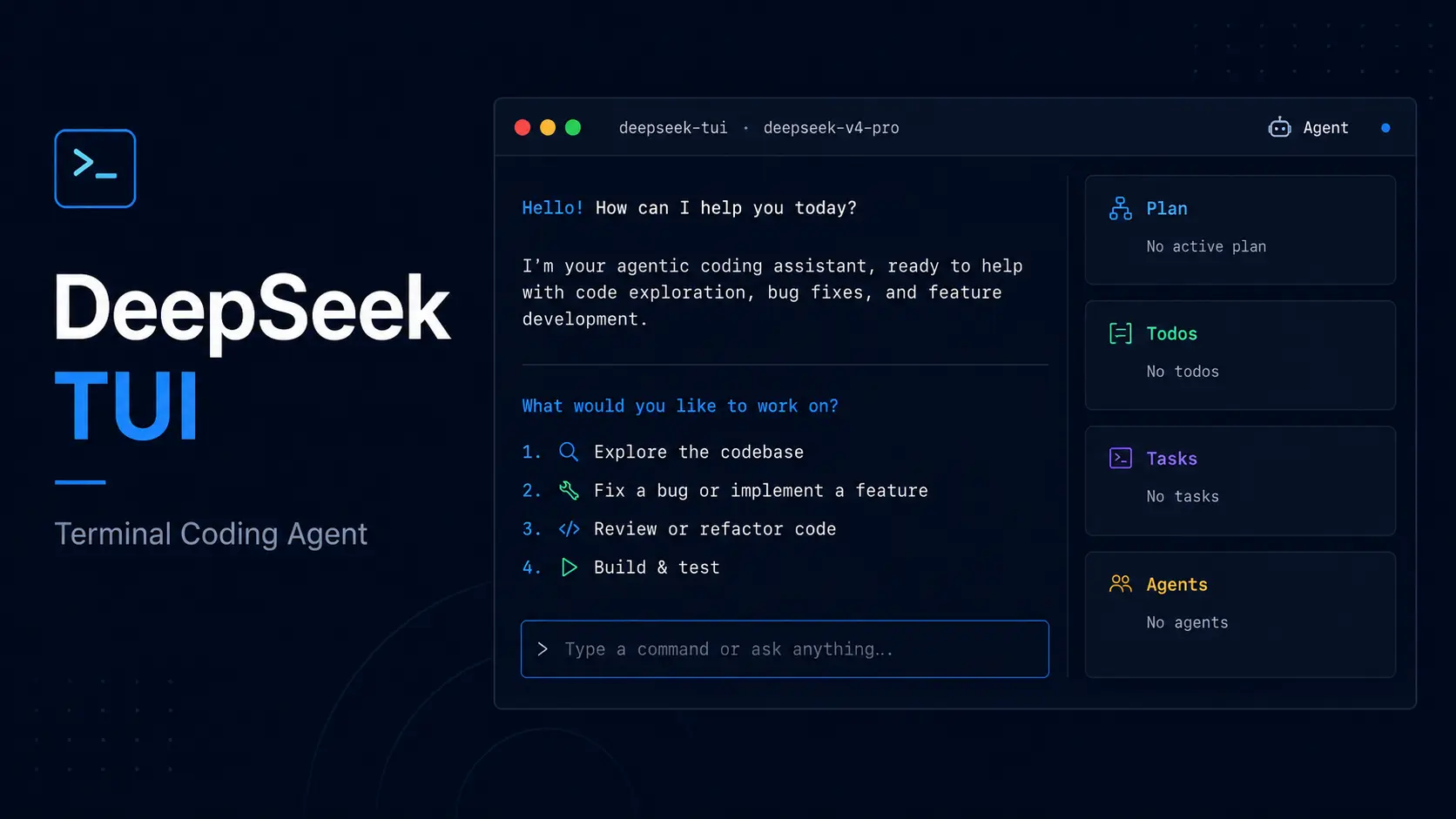

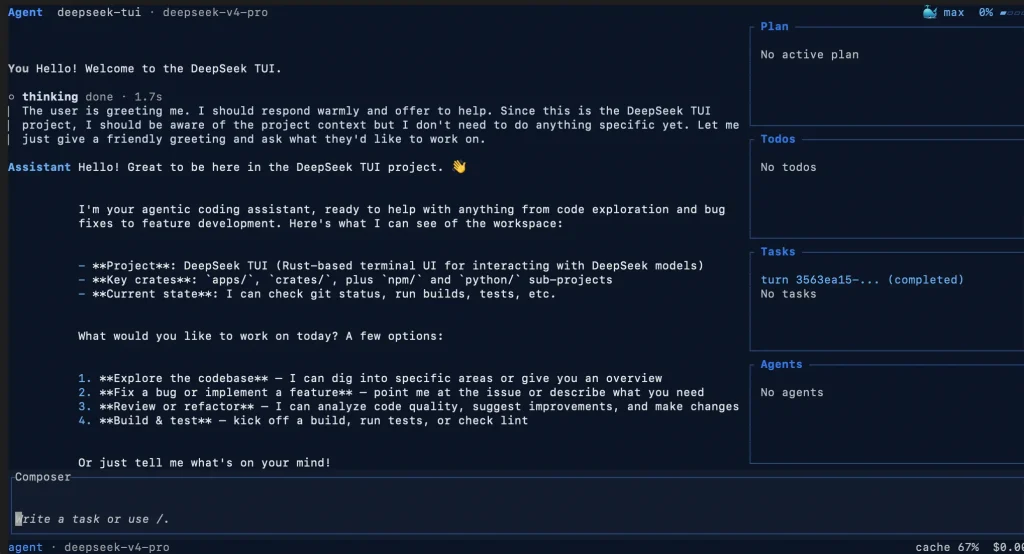

DeepSeek TUI is a free, open-source AI coding agent built on the DeepSeek model family.

Designed as an open Claude Code alternative, the coding agent runs from the deepseek command, reads and edits local files, executes shell commands, searches the web, manages git, and coordinates sub-agents from a keyboard-driven interface in your terminal.

It suits developers who want the capabilities of Claude Code or Codex in a tool built specifically around DeepSeek. Pricing skews toward cheap Flash inference for routine tasks and Pro with extended thinking for harder problems, and auto mode handles that routing for you.

See the latest DeepSeek API Pricing Here.

Features

- Streams DeepSeek reasoning blocks in real time as the model works through each turn.

- Auto mode sends a lightweight pre-turn routing call to select the model and thinking level before the main request runs.

- 3 agent modes: Plan (read-only exploration), Agent (interactive with approval gates), and YOLO (auto-approves all tool calls in trusted workspaces).

- Tool registry: file operations, shell execution, git, web search and browsing, apply-patch, sub-agents, and MCP servers.

- Tracks per-turn and session-level token usage with cache hit/miss breakdown and live cost estimates.

- Sessions checkpoint to disk and resume, fork at a chosen turn, or roll back file changes.

- LSP integration surfaces inline errors and warnings from rust-analyzer, pyright, typescript-language-server, gopls, and clangd after each file edit.

- Reasoning effort cycles through off → high → max with Shift+Tab.

- Skills system loads composable instruction packs from the workspace or global directories.

- Supports NVIDIA NIM, Fireworks, SGLang, vLLM, and Ollama as provider alternatives to the DeepSeek API.

- HTTP/SSE runtime API exposes the agent for headless and CI-driven workflows.

- User memory persists preferences across sessions in a flat file injected into the system prompt.

- Durable task queue survives restarts for long-running background work.

- Native RLM runs batched analysis through cheap Flash children in parallel.

Use Cases

- Review a large codebase in Plan mode before any shell or patch action runs.

- Fix a bug from the terminal after attaching the relevant files through @path.

- Run architecture, security, or release work through Auto mode so the agent selects the model and thinking level.

- Connect MCP servers to extend the terminal agent with extra local tools.

- Expose the DeepSeek engine to a local app through the HTTP and SSE runtime API.

How to Use It

Installation

Pick the install method that fits your existing toolchain:

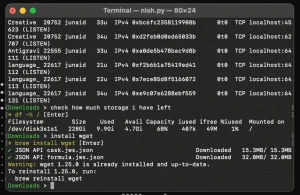

# npm — easiest if Node is already installed; downloads prebuilt Rust binaries from GitHub Releases

npm install -g deepseek-tui

# Cargo — no Node required

cargo install deepseek-tui-cli --locked

cargo install deepseek-tui --locked

# Homebrew (macOS)

brew tap Hmbown/deepseek-tui

brew install deepseek-tui

# Direct binary download

# Prebuilt for Linux x64/ARM64, macOS x64/ARM64, Windows x64

# https://github.com/Hmbown/DeepSeek-TUI/releases

The npm package is an installer wrapper that downloads the matching Rust binaries and places them on your PATH. Users in mainland China can point npm at https://registry.npmmirror.com or configure a Cargo registry mirror for faster downloads:

# ~/.cargo/config.toml

[source.crates-io]

replace-with = "tuna"

[source.tuna]

registry = "sparse+https://mirrors.tuna.tsinghua.edu.cn/crates.io-index/"

Linux ARM64 support (Raspberry Pi, Asahi, Graviton) is available from v0.8.8 onward via npm or the GitHub Releases page. For non-standard targets (musl, riscv64, FreeBSD), build from source using Rust 1.88+.

Authentication

Run the auth command on first setup:

deepseek auth set --provider deepseek

The key saves to ~/.deepseek/config.toml and applies from any directory. The DEEPSEEK_API_KEY environment variable also works, but the saved config key takes precedence and is easier to rotate. Check which credential source is active:

deepseek auth status

Remove a saved key:

deepseek auth clear --provider deepseek

Verify the full setup and API connectivity:

deepseek doctor

deepseek doctor --json # machine-readable output for CI

Starting a Session

deepseek # interactive TUI

deepseek "explain this function" # one-shot prompt

deepseek --model deepseek-v4-flash "summarize" # explicit model

deepseek --model auto "fix this bug" # auto-select model + thinking

deepseek --yolo # auto-approve all tools

Auto Mode

--model auto sends a small pre-turn call to deepseek-v4-flash before the main request. The router reads the current prompt and recent context, then selects a concrete model (deepseek-v4-flash or deepseek-v4-pro) and thinking level (off, high, or max).

Simple or conversational turns stay on Flash with reasoning off. Coding, debugging, architecture, or ambiguous multi-step work can route to Pro with higher thinking.

The TUI shows the selected route, and cost tracking charges against the model that actually ran. Use a fixed model when you need repeatable benchmarking or a strict cost ceiling.
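The two-stage flow described above can be pictured with a small sketch. It is an illustration only: the real router is a model call to deepseek-v4-flash, whereas the hypothetical `pick_route` function below uses a keyword heuristic purely to show the shape of the decision (one concrete model plus one thinking level). The `Route` type and both keyword lists are assumptions and are not part of DeepSeek TUI.

```python
from dataclasses import dataclass

# Hypothetical keyword hints; the real router is an LLM call, not a keyword match.
HARD_HINTS = ("debug", "architecture", "security", "refactor")
CODE_HINTS = ("fix", "bug", "implement", "error")


@dataclass(frozen=True)
class Route:
    model: str     # "deepseek-v4-flash" or "deepseek-v4-pro"
    thinking: str  # "off", "high", or "max"


def pick_route(prompt: str) -> Route:
    """Toy stand-in for the pre-turn routing call."""
    text = prompt.lower()
    if any(hint in text for hint in HARD_HINTS):
        return Route("deepseek-v4-pro", "max")
    if any(hint in text for hint in CODE_HINTS):
        return Route("deepseek-v4-pro", "high")
    # Simple or conversational turns stay on Flash with reasoning off.
    return Route("deepseek-v4-flash", "off")
```

Whatever the router decides, the main request then runs against the returned model and thinking level, which is why cost tracking follows the model that actually ran.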

Agent Modes

| Mode | Behavior |
|---|---|
| Plan | Read-only exploration; agent outlines a plan before touching any files |
| Agent | Default interactive mode with approval gates on each tool call |
| YOLO | Auto-approves all tool calls in a trusted workspace |

Cycle modes with Tab or switch at any time with /model.

Reasoning Effort

Press Shift+Tab to cycle: off → high → max. Off skips extended reasoning entirely. High and max are appropriate for debugging, security review, or tasks where the model needs to reason through ambiguous multi-step problems.

Session Management

deepseek sessions # list saved sessions

deepseek resume --last # resume the most recent session

deepseek resume <SESSION_ID> # resume a specific session by UUID

deepseek fork <SESSION_ID> # fork a session at a chosen turn

The /restore command and revert_turn roll back workspace file changes using side-git snapshots stored under ~/.deepseek/snapshots/. These snapshots never touch your project’s own .git directory.

Full Command Reference

| Command | Purpose |
|---|---|
| deepseek | Launch the interactive TUI |
| deepseek "prompt" | One-shot prompt |
| deepseek --model auto | Auto-select model and thinking level |
| deepseek --yolo | Launch in YOLO mode |
| deepseek auth set --provider deepseek | Save API key |
| deepseek auth status | Show active credential source |
| deepseek auth clear --provider deepseek | Remove saved key |
| deepseek doctor | Verify setup and API connectivity |
| deepseek doctor --json | Machine-readable diagnostics |
| deepseek setup --status | Read-only setup status |
| deepseek setup --tools --plugins | Scaffold tool and plugin directories |
| deepseek models | List available API models |
| deepseek sessions | List saved sessions |
| deepseek resume --last | Resume the most recent session |
| deepseek resume <SESSION_ID> | Resume a specific session |
| deepseek fork <SESSION_ID> | Fork a session at a chosen turn |
| deepseek serve --http | Start HTTP/SSE API server |
| deepseek serve --acp | ACP stdio adapter for Zed and custom agents |
| deepseek pr <N> | Fetch a pull request and pre-seed review prompt |
| deepseek mcp list | List configured MCP servers |
| deepseek mcp validate | Validate MCP config and connectivity |
| deepseek mcp-server | Run the dispatcher MCP stdio server |
| deepseek update | Check for and apply binary updates |

Keyboard Shortcuts

| Key | Action |
|---|---|
| Tab | Complete / or @ entries; cycle mode when idle |
| Shift+Tab | Cycle reasoning effort: off → high → max |
| F1 | Searchable help overlay |
| Esc | Back / dismiss |
| Ctrl+K | Command palette |
| Ctrl+R | Resume an earlier session |
| Alt+R | Search prompt history and recover cleared drafts |
| Ctrl+S | Stash current draft |
| @path | Attach file or directory context in the composer |

Provider Configuration

# NVIDIA NIM

deepseek auth set --provider nvidia-nim --api-key "YOUR_NVIDIA_API_KEY"

deepseek --provider nvidia-nim

# Fireworks

deepseek auth set --provider fireworks --api-key "YOUR_FIREWORKS_API_KEY"

deepseek --provider fireworks --model deepseek-v4-pro

# Self-hosted SGLang

SGLANG_BASE_URL="http://localhost:30000/v1" deepseek --provider sglang --model deepseek-v4-flash

# Self-hosted vLLM

VLLM_BASE_URL="http://localhost:8000/v1" deepseek --provider vllm --model deepseek-v4-flash

# Ollama

ollama pull deepseek-coder:1.3b

deepseek --provider ollama --model deepseek-coder:1.3b

Key Environment Variables

| Variable | Purpose |
|---|---|
| DEEPSEEK_API_KEY | API key |
| DEEPSEEK_BASE_URL | API base URL override |
| DEEPSEEK_MODEL | Default model override |
| DEEPSEEK_PROVIDER | Provider selection (deepseek, nvidia-nim, fireworks, sglang, vllm, ollama) |
| DEEPSEEK_PROFILE | Config profile name |
| DEEPSEEK_MEMORY | Set to on to enable user memory |
| DEEPSEEK_MAX_SUBAGENTS | Max concurrent sub-agents (clamped to 1–20) |
| DEEPSEEK_SANDBOX_MODE | read-only, workspace-write, danger-full-access, external-sandbox |
| SGLANG_BASE_URL | Self-hosted SGLang endpoint |
| VLLM_BASE_URL | Self-hosted vLLM endpoint |
| OLLAMA_BASE_URL | Self-hosted Ollama endpoint |
| NO_ANIMATIONS=1 | Force accessibility mode at startup |
| SSL_CERT_FILE | Custom CA bundle for corporate proxies |
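The clamping noted for DEEPSEEK_MAX_SUBAGENTS can be illustrated with a short sketch. This is not the tool's actual code: the `max_subagents` helper, its default of 4, and the fallback on unparsable input are all assumptions. Only the 1 to 20 range comes from the table above.

```python
import os


def max_subagents(env=None, default=4):
    """Read DEEPSEEK_MAX_SUBAGENTS and clamp it to the documented 1-20 range.

    The default of 4 and the fallback on unparsable input are assumptions.
    """
    env = os.environ if env is None else env
    raw = env.get("DEEPSEEK_MAX_SUBAGENTS", "")
    try:
        value = int(raw)
    except ValueError:
        value = default
    # Out-of-range values are pulled back to the nearest bound.
    return max(1, min(20, value))
```

Under these assumptions, a value of 50 would behave like 20 and a value of 0 would behave like 1.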

Config File

User config lives at ~/.deepseek/config.toml. A project-level overlay at <workspace>/.deepseek/config.toml can override non-sensitive settings. The overlay blocks api_key, base_url, provider, and mcp_config_path. Persistent UI preferences such as theme, auto_compact, show_thinking, and locale live in ~/.config/deepseek/settings.toml. Edit them from the TUI with /config or inspect the current state with /settings.

The same config file supports multiple provider profiles. Select a profile with --profile <name> or by setting DEEPSEEK_PROFILE.
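The overlay rule described above (project settings override user settings, except for the blocked keys) can be sketched as follows. This is a minimal illustration, assuming flat key/value config; the `merge_config` helper and the `model` key used in the sample data are hypothetical, and the real loader may handle nested tables differently.

```python
# Keys the project overlay is not allowed to set, per the text above.
BLOCKED_OVERLAY_KEYS = {"api_key", "base_url", "provider", "mcp_config_path"}


def merge_config(user: dict, overlay: dict) -> dict:
    """Apply a project overlay on top of user config, skipping blocked keys."""
    merged = dict(user)
    for key, value in overlay.items():
        if key in BLOCKED_OVERLAY_KEYS:
            continue  # sensitive settings always come from the user config
        merged[key] = value
    return merged


# Hypothetical sample data: the overlay tries to swap the API key and the model.
user_cfg = {"api_key": "sk-user", "provider": "deepseek", "model": "deepseek-v4-flash"}
project_cfg = {"api_key": "sk-other", "model": "deepseek-v4-pro"}
effective = merge_config(user_cfg, project_cfg)
```

Here `effective` keeps the user's `api_key` and `provider` while picking up the overlay's `model`, so a cloned repository cannot redirect credentials or traffic through its own config file.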

Skills

Each skill is a directory with a SKILL.md file. DeepSeek TUI checks workspace directories (.agents/skills, ./skills, .opencode/skills, .claude/skills, .cursor/skills) and global directories (~/.agents/skills, ~/.claude/skills, ~/.deepseek/skills). Install a community skill from GitHub:

/skill install github:<owner>/<repo>

Manage skills with /skills (list), /skill <name> (activate), /skill new (scaffold), /skill update, /skill uninstall, and /skill trust.
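The directory lookup described above can be approximated with a short sketch. The directory names come from the text; the `discover_skills` helper and the precedence rule (workspace directories before global ones, first match wins on a name clash) are assumptions, since the article does not say how clashes are resolved.

```python
from pathlib import Path

WORKSPACE_SKILL_DIRS = (".agents/skills", "skills", ".opencode/skills",
                        ".claude/skills", ".cursor/skills")
GLOBAL_SKILL_DIRS = (".agents/skills", ".claude/skills", ".deepseek/skills")


def discover_skills(workspace: Path, home: Path) -> dict:
    """Map skill name -> directory for every folder containing SKILL.md.

    Assumption: workspace directories are searched before global ones,
    and the first directory found for a given name wins.
    """
    roots = [workspace / d for d in WORKSPACE_SKILL_DIRS]
    roots += [home / d for d in GLOBAL_SKILL_DIRS]
    found = {}
    for root in roots:
        if not root.is_dir():
            continue
        for child in sorted(root.iterdir()):
            if (child / "SKILL.md").is_file():
                found.setdefault(child.name, child)
    return found
```

Calling `discover_skills(Path.cwd(), Path.home())` would return one entry per skill directory; folders without a SKILL.md file are ignored.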

MCP Integration

Configure MCP servers in ~/.deepseek/mcp.json. List or validate connected servers at any time:

deepseek mcp list

deepseek mcp validate

Zed / ACP Integration

Add DeepSeek TUI as a custom agent server in Zed:

{
  "agent_servers": {
    "DeepSeek": {
      "type": "custom",
      "command": "deepseek",
      "args": ["serve", "--acp"],
      "env": {}
    }
  }
}

The first ACP slice supports new sessions and prompt responses. Tool-backed editing and checkpoint replay are not yet exposed through ACP.

Pros

- Free and open source under the MIT license.

- Runs on Linux, macOS, and Windows.

- Four install options: npm, Cargo, Homebrew, and direct binary download.

- ARM64 Linux support.

- 1M-token context window on both models.

- Real-time reasoning block streaming.

- Auto mode selects model and thinking level per turn.

- Per-turn cost tracking with cache hit/miss breakdown.

- Session save, resume, and fork by turn.

- Workspace rollback with side-git snapshots.

- LSP diagnostics after every file edit.

- MCP server support for extended tooling.

- Works with NVIDIA NIM, Fireworks, SGLang, vLLM, and Ollama.

- Skills system for composable, installable instruction packs.

- HTTP/SSE runtime API for headless agent workflows.

Cons

- Requires a DeepSeek API key (or a supported third-party provider key).

- Terminal-only operation: no web or GUI front end.

- Prebuilt Windows binaries cover x64 only.

Related Resources

- DeepSeek Platform: API key management and pricing for the DeepSeek V4 model family.

- DeepSeek TUI on GitHub: Source code, releases, issue tracker, and full documentation.

- DeepSeek API Docs: Full API reference including DeepSeek V4 model IDs, context limits, and pricing.

- The Ultimate Claude Code Resource List: A curated list of 100+ free agents, skills, plugins, and more.

- Best Agent Skills: 10 Best Free Agent Skills for Claude Code & AI Workflows.

- Claude Code Slash Commands Cheatsheet: The most complete, verified reference for every Claude Code slash command.

- AI Coding Agents: 7 Best CLI AI Coding Agents.

FAQs

Q: Is DeepSeek TUI free to use?

A: The TUI is free and open source under the MIT license. Running the agent costs money only on the API side. DeepSeek charges per token, with deepseek-v4-flash significantly cheaper than Pro. Prefix caching further reduces input costs for sessions with long stable context.
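To see why prefix caching matters for long sessions, here is a rough cost sketch. The prices are placeholders chosen for easy arithmetic and are NOT real DeepSeek rates; the `estimate_cost` helper and the price key names are likewise hypothetical. The only assumption carried over from the text is that cached input tokens are billed at a lower rate than uncached ones.

```python
def estimate_cost(cache_hit_tokens, cache_miss_tokens, output_tokens, prices):
    """Return the USD cost of one turn from per-million-token prices."""
    per_million = 1_000_000
    return (
        cache_hit_tokens * prices["input_cache_hit"]
        + cache_miss_tokens * prices["input_cache_miss"]
        + output_tokens * prices["output"]
    ) / per_million


# Placeholder prices in USD per million tokens; NOT real DeepSeek rates.
EXAMPLE_PRICES = {"input_cache_hit": 0.1, "input_cache_miss": 1.0, "output": 2.0}

# 90k cached input tokens, 10k uncached input tokens, 2k output tokens.
cached_turn = estimate_cost(90_000, 10_000, 2_000, EXAMPLE_PRICES)

# The same turn with no cache hits at all.
uncached_turn = estimate_cost(0, 100_000, 2_000, EXAMPLE_PRICES)
```

With these placeholder numbers the cached turn comes out several times cheaper than the uncached one, which is the effect the per-turn cache hit/miss breakdown in the TUI is meant to make visible. Substitute the current rates from the DeepSeek pricing page before drawing any real conclusions.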

Q: Does DeepSeek TUI work on Windows?

A: Prebuilt binaries are available for Windows x64 via GitHub Releases, npm, and Scoop. The Scoop manifest updates independently and can lag behind the GitHub and npm releases. Download directly from GitHub Releases or use npm when you need the newest version.

Q: What is the difference between Plan, Agent, and YOLO modes?

A: Plan mode is read-only. The agent explores the workspace, reads relevant files, and proposes a plan before making any changes. Agent mode is the default: the agent runs multi-step tool use and requests approval at each tool call. YOLO mode auto-approves all tool calls in a trusted workspace and skips approval prompts entirely.

Q: What does auto mode actually do?

A: Auto mode sends a small pre-turn call to deepseek-v4-flash before the main request. The router examines the current prompt and recent context, then picks both the model (Flash or Pro) and the reasoning effort level (off, high, or max). Simple turns stay on Flash with no reasoning overhead. Coding tasks, debugging, architecture review, and ambiguous multi-step work can route to Pro with higher thinking. The TUI displays which route ran, and cost tracking charges against the actual model used.

Q: Can I use DeepSeek TUI with a self-hosted model?

A: Yes. DeepSeek TUI supports self-hosted endpoints through SGLang, vLLM, and Ollama. Set the base URL for your local inference server via the corresponding environment variable or config entry, then pass the provider flag at launch. SGLang and vLLM accept any OpenAI-compatible /v1 endpoint.

Q: How does workspace rollback work?

A: DeepSeek TUI creates side-git snapshots before and after each turn. These live under ~/.deepseek/snapshots/ and never interact with your project’s own .git directory. Run /restore or revert_turn to roll back file changes from a specific turn. Sessions can be forked at a chosen turn with deepseek fork <SESSION_ID>.