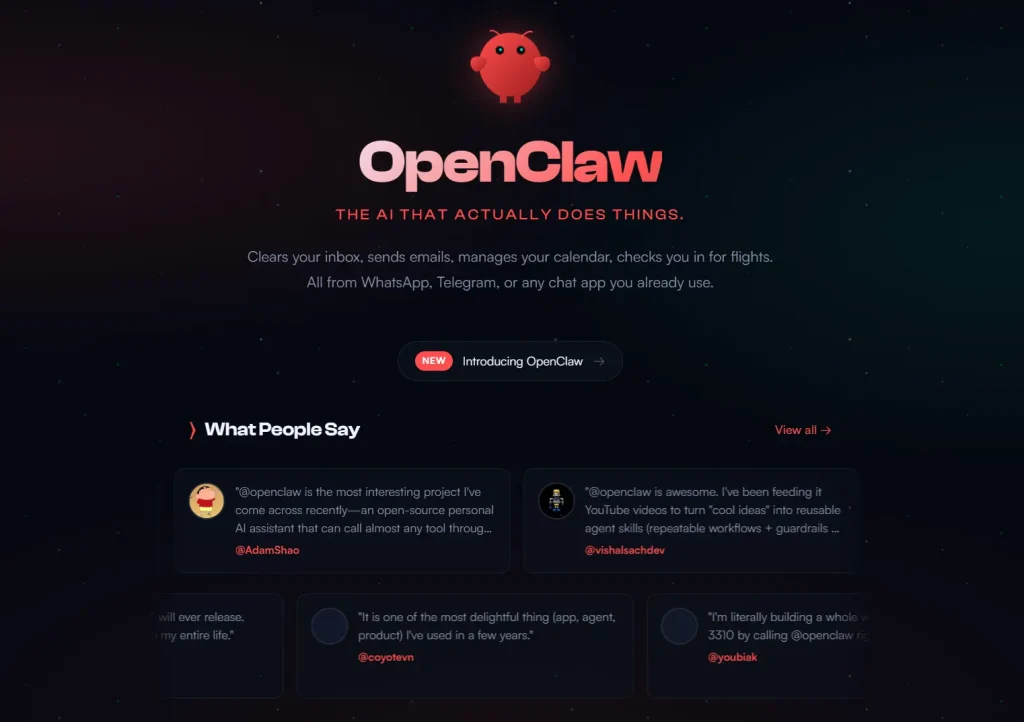

OpenClaw is an open-source personal AI agent that connects messaging apps to an AI model running on local hardware. The agent can run shell commands, browse the web, manage local files, and call external services from a WhatsApp, Telegram, or Discord chat.

The project launched in November 2025 under the name Clawdbot, created by PSPDFKit founder Peter Steinberger. Trademark objections from Anthropic triggered a rename to Moltbot in January 2026; three days later it became OpenClaw.

The repository crossed 100,000 GitHub stars in February 2026. Steinberger announced his move to OpenAI that same month, and the newly formed OpenClaw Foundation took over stewardship of the project.

The security record is harder to ignore. A critical vulnerability (CVE-2026-25253) in early 2026 let attackers hijack OpenClaw instances via token leakage. Security scans found over 40,000 instances exposed to the public internet on default configurations. An analysis of roughly 3,000 ClawHub skills found a 10.8 percent malware rate, with plugins stealing cryptocurrency wallets and cloud tokens.

Those incidents prompted developers to seek alternatives that offer comparable agentic capability while providing stricter isolation.

Some projects directly refactor the OpenClaw codebase. Others take a different architectural approach: deny-by-default permission models, OS-level sandboxing, or codebases small enough for a single developer to audit.

This article covers seven open-source OpenClaw alternatives, what each one does differently, and which use cases each one is best suited for.

TL;DR – Quick Comparison Table

| Tool | Language | Permission Control | Best For |
|---|---|---|---|
| Hermes Agent | Python | Approval flows | Self-improving personal agents & long-term workflows |
| ZeroClaw | Rust | Strict (Deny-by-default, Sandbox) | High security & low-resource hardware |
| nanoclaw | TypeScript | OS-level Isolation (Docker/Container) | Security-conscious developers |
| nullclaw | Zig | Strict (Pairing + Multi-layer Sandbox) | Ultra-low-resource hardware & edge deployment |
| nanobot | Python | Docker Sandbox available | Researchers & modders |
| TinyClaw | TypeScript | Workspace Isolation | Multi-agent ChatOps (Discord/WhatsApp) |
| AionUi | Electron/Web Tech | Local Storage (SQLite) | GUI users & file management |

Best OpenClaw Alternatives

Table Of Contents

- Hermes Agent: Self-Improving Personal AI Agent with Persistent Memory

- ZeroClaw: Full-Stack Rust Agent Runtime with Security-First Architecture

- nanoclaw: Minimal Claude Assistant with Container Isolation

- nanobot: Ultra-Lightweight Personal AI Assistant

- TinyClaw: Multi-Agent Multi-Channel Assistant with Isolated Workspaces

- nullclaw: Sub-Millisecond AI Agent for Edge Hardware

- AionUi: Cross-Platform GUI for CLI AI Tools

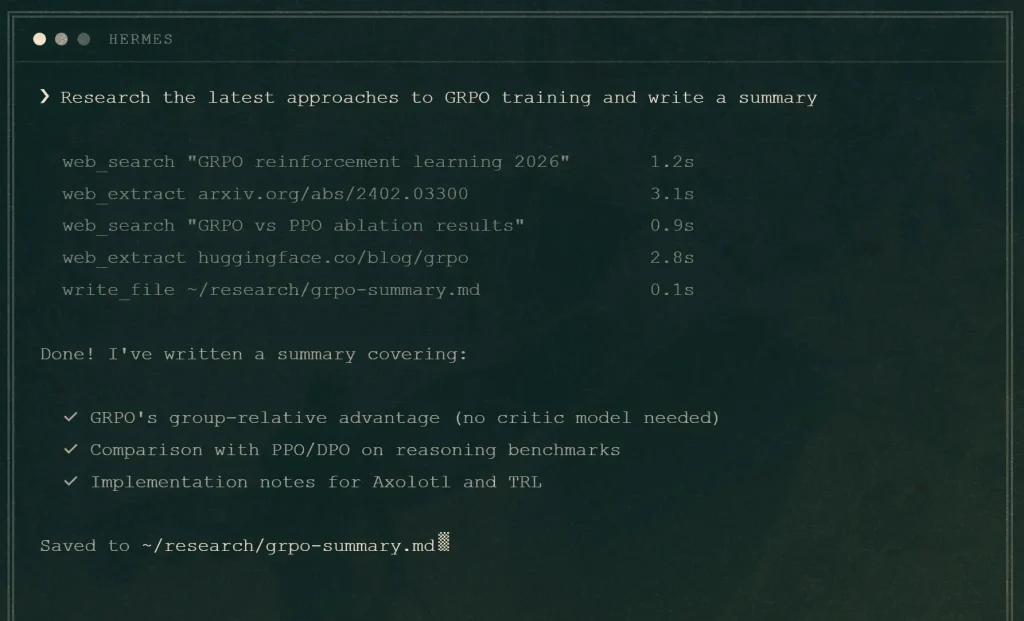

Hermes Agent: Self-Improving Personal AI Agent with Persistent Memory

Best for: Individual developers, researchers, and power users who want an agent that compounds knowledge over time and handles structured recurring workflows.

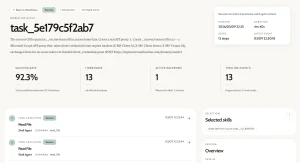

Hermes Agent comes from Nous Research, the team behind the Hermes model family. Unlike session-based agents that start fresh every time, Hermes Agent maintains a closed learning loop: after completing complex tasks, it analyzes the workflow, extracts reusable patterns, and writes them as skill files. Those skills improve through self-evaluation. Over weeks of use, the agent gets measurably faster and more accurate on tasks it has seen before.

Features:

- Closed learning loop: the agent writes and refines skill files after completing tasks, without user intervention.

- Multi-level memory: session context, persistent MEMORY.md and USER.md files, and full-text SQLite search over all past sessions with LLM-powered summarization.

- 40+ built-in tools: terminal execution (local, Docker, SSH, Singularity), file operations, web search, browser automation, and vision.

- Native messaging support for Telegram, Discord, Slack, WhatsApp, Signal, email, and WeChat.

- OpenAI-compatible API server for connection to Open WebUI or other frontends.

- MCP support for external services, including GitHub and databases.

- Live model switching via `hermes model`, with support for 200+ models.
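The full-text search over past sessions mentioned in the feature list can be pictured with a minimal sketch using Python's built-in `sqlite3` module and SQLite's FTS5 extension. The table name, column names, and helper functions are hypothetical and are not taken from the Hermes Agent codebase; the sketch also assumes the local SQLite build ships with FTS5 enabled.

```python
import sqlite3


def open_session_index(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a full-text index of past sessions.

    Hypothetical schema: session_id is stored but not tokenized,
    content is the searchable transcript text.
    """
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE VIRTUAL TABLE IF NOT EXISTS sessions "
        "USING fts5(session_id UNINDEXED, content)"
    )
    return conn


def add_session(conn: sqlite3.Connection, session_id: str, content: str) -> None:
    """Store one finished session so later searches can find it."""
    conn.execute(
        "INSERT INTO sessions (session_id, content) VALUES (?, ?)",
        (session_id, content),
    )
    conn.commit()


def search_sessions(conn: sqlite3.Connection, query: str, limit: int = 5) -> list:
    """Return ids of matching sessions, best match first (FTS5 rank)."""
    rows = conn.execute(
        "SELECT session_id FROM sessions WHERE sessions MATCH ? "
        "ORDER BY rank LIMIT ?",
        (query, limit),
    )
    return [row[0] for row in rows]
```

A search for a plain word such as `nginx` returns the ids of sessions whose text contains it. Summarizing the matched sessions with an LLM, as the feature list describes, would be a separate step on top of this lookup.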

Deployment & Requirements:

- Python required. Install via pip or the quickstart script.

- Runs on a local machine, $5 VPS, Docker, or serverless environments like Modal and Daytona.

- Compatible with OpenRouter, Anthropic, OpenAI, Ollama, LM Studio, and local endpoints.

- Automatic migration script for users coming from OpenClaw.

Safety & Permission Model: Hermes Agent v0.8.0 added explicit approval flows for sensitive actions. Terminal execution runs in local, Docker, SSH, or Singularity containers depending on configuration. Memory files stay on the host machine by default; no data leaves the device unless an external inference provider is used.

Pros:

- Skills and memory compound over time, producing measurable gains on repeated task types.

- Broad messaging platform support.

- Efficient token usage: side-by-side tests show lower token consumption than comparable agents.

Cons:

- Single-agent focused; no native multi-agent orchestration.

- Self-improvement quality depends on the underlying model’s capability.

- Smaller pre-built skill library than more mature alternatives.

Repository: https://github.com/NousResearch/hermes-agent

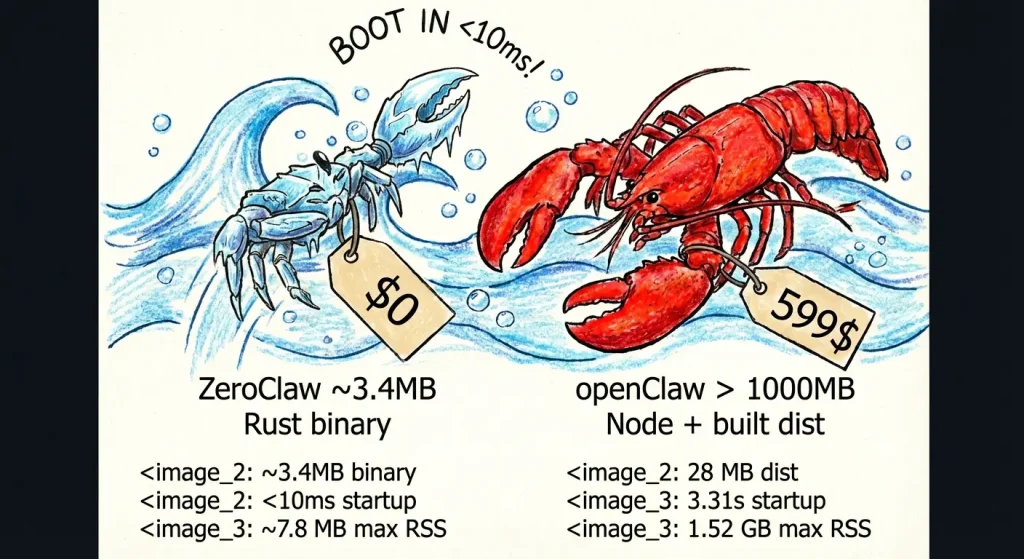

ZeroClaw: Full-Stack Rust Agent Runtime with Security-First Architecture

Best for: Security-conscious developers, edge hardware users, and teams who need a production-grade local agent with strict permission controls.

ZeroClaw is written entirely in Rust and targets low-resource environments, including ARM single-board computers. The project was built by students and contributors affiliated with Harvard, MIT, and Sundai.Club.

It replicates OpenClaw’s core feature set, including multi-channel messaging, scheduled tasks, tool invocation, and persistent memory, while building security controls into the architecture rather than offering them as optional configuration.

Features:

- Trait-driven architecture lets users swap any subsystem, including providers, channels, and memory backends, without touching the rest of the code.

- Docker sandboxed runtime option runs shell commands in an isolated container with configurable memory limits, read-only root filesystem, and network isolation.

- Channel allowlists use a deny-by-default policy: an empty allowlist blocks all inbound messages.

- Gateway binds to `127.0.0.1` by default and refuses public binding without an active tunnel.

- One-time pairing codes protect the webhook endpoint.

- Filesystem scoping restricts agent operations to a defined workspace; 14 system directories and sensitive dotfiles are blocked by default.
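Two of the defaults above, the deny-by-default channel allowlist and the loopback-only gateway, can be illustrated with a short Python sketch. ZeroClaw itself is written in Rust; the function names and signatures here are hypothetical and only model the behavior described in the list.

```python
import ipaddress


def is_sender_allowed(sender_id: str, allowlist: set) -> bool:
    """Deny by default: an empty allowlist lets nobody through."""
    return sender_id in allowlist


def can_bind(host: str, tunnel_active: bool) -> bool:
    """Allow loopback addresses; allow anything else only behind a tunnel.

    `host` must be an IP literal such as "127.0.0.1"; a hostname like
    "localhost" raises ValueError in this sketch.
    """
    address = ipaddress.ip_address(host)
    return address.is_loopback or tunnel_active
```

The design point is that both checks fail closed: forgetting to configure the allowlist blocks every inbound message, and a public bind address is rejected unless a tunnel is active.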

Deployment & Requirements:

- Rust toolchain (stable) required for source builds; pre-built binaries available via the bootstrap script.

- Runs on macOS, Linux, and Windows (via WSL2).

- Supports local Ollama endpoints, OpenAI-compatible APIs, and OpenRouter.

- Docker required only for the sandboxed runtime option; native runtime works without it.

Safety & Permission Model: ZeroClaw publishes a security checklist with four primary items: local-only gateway binding, required pairing, workspace-scoped filesystem access, and tunnel-only external exposure. The autonomy.level config option supports readonly, supervised, and full modes. The supervised default requires approval for higher-risk actions.
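As a rough illustration of how three autonomy modes might gate an action, here is a minimal Python sketch. Only the mode names and the approval requirement for higher-risk actions in supervised mode come from the description above; the `decide` function, its parameters, and the exact behavior of the readonly and full modes are assumptions.

```python
from enum import Enum


class Autonomy(Enum):
    READONLY = "readonly"
    SUPERVISED = "supervised"
    FULL = "full"


def decide(level: Autonomy, mutates: bool, high_risk: bool) -> str:
    """Return "allow", "ask", or "deny" for a proposed action.

    Assumed semantics: readonly blocks anything that changes state,
    supervised asks a human before higher-risk actions, and full
    allows everything.
    """
    if level is Autonomy.READONLY:
        return "deny" if mutates else "allow"
    if level is Autonomy.SUPERVISED:
        return "ask" if high_risk else "allow"
    return "allow"
```

Under these assumptions, `decide(Autonomy.SUPERVISED, mutates=True, high_risk=True)` yields `"ask"`, which is where an approval prompt would be shown.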

Pros:

- Binary runs under 5MB with sub-10ms cold start; suitable for Raspberry Pi and similar hardware.

- Deny-by-default channel policies reduce accidental exposure.

- Docker runtime option provides OS-level isolation for shell execution.

Cons:

- Rust build toolchain adds setup friction for non-Rust developers.

- Some subsystems listed in the architecture (WASM runtime, certain channels) are planned but not yet implemented.

Repository: https://github.com/zeroclaw-labs/zeroclaw

nanoclaw: Minimal Claude Assistant with Container Isolation

Best for: Users who want a personal assistant with the same core functionality as OpenClaw but in a codebase small enough to read and audit in one sitting.

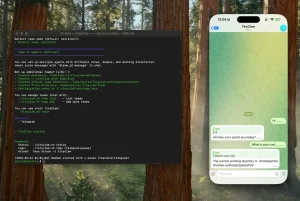

The nanoclaw author explicitly cites OpenClaw’s 52 modules, 45+ dependencies, and application-level security model as the reasons for building an alternative. nanoclaw delivers WhatsApp messaging, scheduled tasks, per-group memory, and web access in a single Node.js process with a handful of source files. Agents run inside Linux containers rather than behind permission allowlists.

Features:

- Agents execute inside Apple Container (macOS) or Docker, with only explicitly mounted directories visible to the agent.

- Per-group isolated filesystems: each WhatsApp group gets its own container sandbox and `CLAUDE.md` memory file.

- No configuration files: behavior changes go directly into code, which the author argues keeps the system auditable.

- Skills-over-features philosophy: new capabilities come from skill files contributed to the repository, not from expanding the base codebase.

Deployment & Requirements:

- macOS or Linux required.

- Node.js 20+ and Claude Code CLI required.

- Apple Container (macOS) or Docker for agent sandboxing.

- WhatsApp via Baileys library; no third-party API key required for the messaging layer.

Safety & Permission Model: Container isolation is the primary security boundary. Agent commands run inside a container that can only see explicitly mounted directories. The main channel (self-chat) serves as the admin control surface; other groups are fully isolated from each other.
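The idea that the agent sees only explicitly mounted directories can be sketched as a function that builds a `docker run` command line. nanoclaw is a TypeScript project and its real invocation may differ; the image name, paths, and function name below are hypothetical. Only the standard `--rm` and `--volume` flags of the Docker CLI are used.

```python
def docker_run_args(image: str, mounts: dict, command: list) -> list:
    """Build a `docker run` argv where only the listed host directories
    are visible inside the container.

    `mounts` maps host directory -> container directory.
    """
    args = ["docker", "run", "--rm"]
    for host_dir, container_dir in mounts.items():
        args += ["--volume", f"{host_dir}:{container_dir}"]
    return args + [image] + command


# Hypothetical example: one WhatsApp group's folder mounted as /workspace.
argv = docker_run_args(
    "agent-image",
    {"/home/me/groups/family": "/workspace"},
    ["node", "agent.js"],
)
```

Anything not listed in `mounts` simply does not exist from the agent's point of view, which is the difference between this model and an application-level allowlist.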

Pros:

- Container-based isolation provides OS-level security rather than application-level checks.

- Codebase is intentionally small; the author claims an 8-minute read time.

- No configuration sprawl: the code is the configuration.

Cons:

- Single-user design; not suitable for shared or team deployments.

- WhatsApp-only out of the box; other channels require skill contributions.

- Requires Claude Code CLI.

Repository: https://github.com/gavrielc/nanoclaw

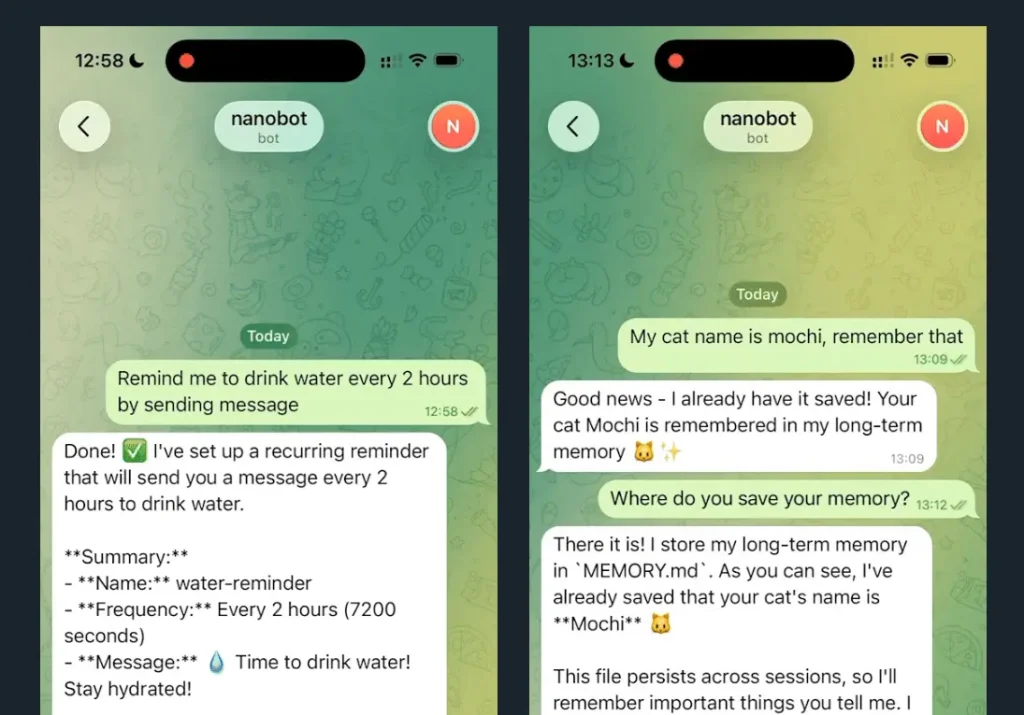

nanobot: Ultra-Lightweight Personal AI Assistant

Best for: Developers and researchers who want an OpenClaw-equivalent agent at roughly 4,000 lines of Python code, with support for local language models.

The HKUDS lab built nanobot as a direct alternative to Clawdbot (OpenClaw). At approximately 4,000 lines, it claims a code footprint 99% smaller than OpenClaw’s. It covers web search, software engineering tasks, scheduled tasks, and persistent memory.

Features:

- Supports local model deployment via vLLM or any OpenAI-compatible server, including Ollama and LM Studio.

- Telegram and WhatsApp channel support with configurable `allowFrom` lists.

- Docker deployment option for persistent configuration across container restarts.

- Cron-style scheduled tasks via CLI commands.

Deployment & Requirements:

- Python 3.11+ required.

- Install from PyPI (`pip install nanobot-ai`), via `uv`, or from source.

- API key from OpenRouter, Anthropic, OpenAI, or a local server.

- Docker optional but recommended for production deployments.

Safety & Permission Model: Channel access uses allowFrom lists that restrict which phone numbers or user IDs can interact with the agent. Local model support means the language model inference can stay fully on-premises with no data sent to external APIs.
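To make the on-premises point concrete, the sketch below builds a chat request for a local OpenAI-compatible server using only the Python standard library. It is not nanobot's own code. The base URL assumes Ollama's default OpenAI-compatible endpoint on `localhost:11434`, and the model name is a placeholder for whatever model is installed locally.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request.

    Works with any server that exposes the OpenAI-style
    /chat/completions route under `base_url`.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Assumed local endpoint and placeholder model name.
request = build_chat_request("http://localhost:11434/v1", "llama3", "Summarize my notes")
```

Passing `request` to `urllib.request.urlopen` would send the prompt to the local server only, so no conversation data reaches an external API.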

Pros:

- Very small, readable codebase suited for audit and research.

- Full local model support reduces data exposure to external services.

- Active roadmap with community contributions.

Cons:

- Fewer built-in integrations than OpenClaw.

- Memory and multi-step reasoning features are still on the roadmap.

- Limited OS-level sandboxing; relies on configuration-based access controls.

Repository: https://github.com/HKUDS/nanobot

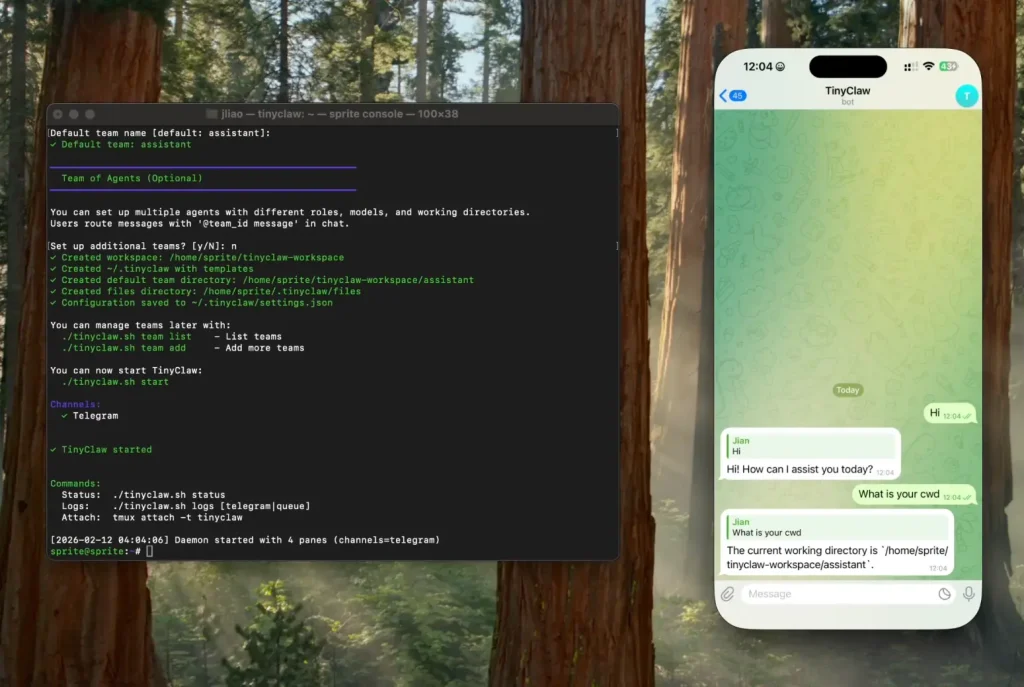

TinyClaw: Multi-Agent Multi-Channel Assistant with Isolated Workspaces

Best for: Users who need to run multiple specialized agents simultaneously, each with isolated context and workspace.

TinyClaw runs multiple AI agents in parallel, each operating in a separate workspace directory with independent conversation history. Messages route to specific agents using @agent_id syntax across Discord, WhatsApp, and Telegram. A file-based message queue provides atomic operations without race conditions.
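A minimal sketch of `@agent_id` routing, written in Python for illustration (TinyClaw itself is TypeScript). The allowed characters in an agent id and the fallback to a default agent when no prefix is present are assumptions, not documented TinyClaw behavior.

```python
import re

# An agent mention at the start of the message, followed by the message text.
_MENTION = re.compile(r"^@([A-Za-z0-9_-]+)\s+(.*)$", re.DOTALL)


def route(message: str, default_agent: str = "default") -> tuple:
    """Split a chat message into (agent_id, text).

    Messages without a leading @agent_id go to `default_agent`.
    """
    text = message.strip()
    match = _MENTION.match(text)
    if match:
        return match.group(1), match.group(2)
    return default_agent, text
```

For example, `route("@coder fix the failing test")` returns `("coder", "fix the failing test")`, while a message with no prefix is handed to the default agent unchanged.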

Features:

- Each agent gets its own workspace directory, conversation history, and custom configuration.

- Supports both Anthropic Claude and OpenAI providers, with model selection per agent.

- Tmux-based process management for 24/7 operation.

- Heartbeat system triggers periodic agent check-ins even without user input.

Deployment & Requirements:

- macOS or Linux required.

- Node.js v14+ and tmux.

- Claude Code CLI or Codex CLI depending on provider selection.

- WhatsApp, Discord, or Telegram tokens for channel configuration.

Safety & Permission Model: Agent isolation is the primary safety mechanism: each agent can only access its own workspace directory and conversation history. Agents cannot access each other’s data or files. The file-based queue prevents concurrent write conflicts.
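One common way to get atomic operations out of a file-based queue is to write each message to a temporary file and rename it into place, then claim messages by renaming them into a processing directory. The Python sketch below shows that pattern under the assumption that both directories live on the same filesystem; it is not TinyClaw's implementation, and the directory layout and function names are hypothetical.

```python
import json
import os
import tempfile
import time
import uuid
from pathlib import Path
from typing import Optional


def enqueue(queue_dir: Path, message: dict) -> Path:
    """Write the message to a temp file, then rename it into the queue.

    Readers only look at *.json files, so they never see a half-written
    message: the rename makes it appear all at once.
    """
    queue_dir.mkdir(parents=True, exist_ok=True)
    fd, tmp_name = tempfile.mkstemp(dir=queue_dir, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(message, handle)
    final_path = queue_dir / f"{time.time_ns()}-{uuid.uuid4().hex}.json"
    os.replace(tmp_name, final_path)
    return final_path


def claim_next(queue_dir: Path, processing_dir: Path) -> Optional[dict]:
    """Claim one message (roughly oldest first) by renaming it.

    If two workers race for the same file, only one rename succeeds;
    the other gets FileNotFoundError and moves on to the next file.
    """
    processing_dir.mkdir(parents=True, exist_ok=True)
    for path in sorted(queue_dir.glob("*.json")):
        target = processing_dir / path.name
        try:
            os.rename(path, target)
        except FileNotFoundError:
            continue
        return json.loads(target.read_text(encoding="utf-8"))
    return None
```

Because the rename either happens completely or not at all, two writers never corrupt the same file and two workers never process the same message.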

Pros:

- Per-agent workspace isolation prevents cross-contamination between agent contexts.

- Supports multiple AI providers, reducing single-vendor dependency.

- Active community and quick-start tooling.

Cons:

- No OS-level container isolation; workspace separation is filesystem-level only.

- Relies on Claude Code or Codex CLI, which carry their own permission scopes.

Repository: https://github.com/jlia0/tinyclaw

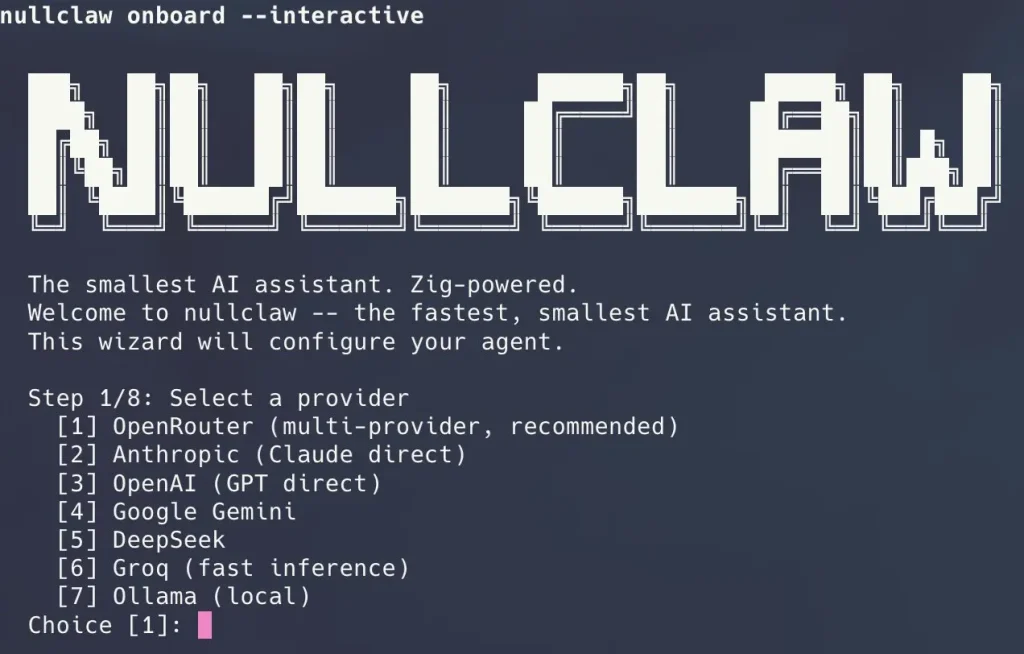

nullclaw: Sub-Millisecond AI Agent for Edge Hardware

Best for: Developers deploying on extremely resource-constrained hardware, edge devices, or anyone who needs the absolute smallest footprint and fastest cold start.

nullclaw is written entirely in Zig and compiles to a 678 KB static binary that boots in under 2 milliseconds on Apple Silicon. With peak memory usage of around 1 MB, the project runs comfortably on hardware that costs less than five dollars, including ARM single-board computers and microcontrollers. The minimal footprint does not come at the cost of features: nullclaw ships with 22+ AI providers, 18 messaging channels, hybrid vector and keyword search memory, and multi-layer sandboxing.

Features:

- 678 KB static binary with zero runtime dependencies beyond libc.

- Peak memory usage around 1 MB, suitable for the cheapest ARM boards.

- Cold start under 2 ms on modern hardware, under 8 ms on 0.8 GHz edge cores.

- Pluggable architecture with vtable interfaces for every subsystem.

- Hybrid memory system using SQLite with FTS5 keyword search and vector cosine similarity.

- Multi-layer sandbox support including Landlock, Firejail, Bubblewrap, and Docker.
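The hybrid memory idea, combining a keyword score with vector cosine similarity, can be sketched in a few lines of Python. This is an illustration only: a simple term-overlap score stands in for FTS5 keyword ranking, and the blending weight `alpha` and all function names are assumptions rather than nullclaw's actual scoring.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_score(query: str, text: str) -> float:
    """Fraction of query terms that appear in the text (stand-in for FTS5)."""
    terms = set(query.lower().split())
    if not terms:
        return 0.0
    words = set(text.lower().split())
    return len(terms & words) / len(terms)


def hybrid_rank(query: str, query_vec: list, docs: list, alpha: float = 0.5) -> list:
    """Rank (text, vector) pairs by a weighted mix of both scores."""
    scored = [
        (alpha * keyword_score(query, text)
         + (1 - alpha) * cosine_similarity(query_vec, vec), text)
        for text, vec in docs
    ]
    return [text for _, text in sorted(scored, key=lambda pair: pair[0], reverse=True)]
```

Keyword matching catches exact names and identifiers, while the vector term catches paraphrases, so a memory can surface when either signal is strong.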

Deployment & Requirements:

- Zig 0.15.2 is required for building from source.

- Supports 22+ AI providers through OpenAI-compatible interfaces, including OpenRouter, Anthropic, OpenAI, Ollama, Groq, and local endpoints.

- Runs on macOS, Linux, and Windows via WSL2.

Safety & Permission Model: nullclaw enforces security at every layer. The gateway binds to 127.0.0.1 by default and refuses public binding without an active tunnel. Pairing requires a six-digit one-time code exchanged for a bearer token. Filesystem access is workspace-scoped by default with symlink escape detection and null byte injection blocking. API keys are encrypted with ChaCha20-Poly1305 using a local key file. The system auto-detects the best available sandbox backend and supports configurable resource limits for memory, CPU, and disk.
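Workspace scoping with symlink escape detection and null byte blocking can be sketched in Python as follows. nullclaw implements this in Zig; the function name and error types here are hypothetical, and the sketch shows only the general technique of resolving a path fully before checking that it still lies inside the workspace.

```python
from pathlib import Path


def resolve_in_workspace(workspace: Path, requested: str) -> Path:
    """Return the real path for `requested`, or raise if it escapes.

    Rejects null bytes, then resolves `..` segments and symlinks
    before comparing against the resolved workspace root.
    """
    if "\x00" in requested:
        raise ValueError("null byte in path")
    root = workspace.resolve()
    # An absolute `requested` replaces `root` here and is rejected below.
    candidate = (root / requested).resolve()
    if candidate != root and root not in candidate.parents:
        raise PermissionError(f"path escapes workspace: {requested!r}")
    return candidate
```

Resolving before checking is the important step: a symlink inside the workspace that points outside it resolves to its real target, so the containment test catches it.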

Pros:

- Focused scope reduces the attack surface compared to a full agent runtime.

- Self-hosted deployment keeps memory data on-premises.

- Apache 2.0 license provides patent protection alongside open-source access.

Cons:

- Requires Zig 0.15.2 specifically.

- Some subsystems remain experimental and are not fully stable across all configurations.

Repository: https://github.com/nullclaw/nullclaw

AionUi: Cross-Platform GUI for CLI AI Tools

Best for: Non-technical users and teams who want a graphical interface for managing CLI-based AI agents (Claude Code, Gemini CLI, Codex) without command-line interaction.

AionUi is a desktop application that wraps existing CLI AI tools in a unified graphical interface. It auto-detects installed CLI tools, stores all conversations in a local SQLite database, and adds features like multi-session management, file preview, scheduled tasks, and remote WebUI access via browser. It positions itself as a cross-platform alternative to Anthropic’s Claude Cowork, which is macOS-only.
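Auto-detection of installed CLI tools usually comes down to looking each binary up on the `PATH`. The Python sketch below shows that approach with `shutil.which`. It is not AionUi's code, and the mapping from tool name to binary name is an assumption covering only three of the supported tools.

```python
import shutil
from typing import Callable, Optional

# Assumed binary names for three of the CLI tools AionUi supports.
KNOWN_CLIS = {
    "Claude Code": "claude",
    "Gemini CLI": "gemini",
    "Codex": "codex",
}


def detect_installed(
    which: Callable[[str], Optional[str]] = shutil.which,
) -> dict:
    """Return {tool name: executable path} for every tool found on PATH.

    `which` is injectable so the lookup can be replaced in tests.
    """
    found = {}
    for label, binary in KNOWN_CLIS.items():
        path = which(binary)
        if path:
            found[label] = path
    return found
```

Calling `detect_installed()` with no arguments checks the real `PATH`; tools that are not installed are simply left out of the result.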

Features:

- Supports Claude Code, Gemini CLI, Codex, Qwen Code, Goose CLI, and Augment Code from a single interface.

- All conversations and files stored locally in SQLite; data does not leave the device.

- Remote WebUI access via LAN or cross-network for access from phone or tablet.

- Scheduled task automation with natural language task specification.

- Preview panel supports PDF, Word, Excel, PPT, Markdown, HTML, code, and images.

Deployment & Requirements:

- macOS 10.15+, Windows 10+, or Linux (Ubuntu 18.04+/Debian 10+/Fedora 32+).

- 4GB RAM recommended; 500MB storage minimum.

- Requires at least one supported CLI AI tool or API key.

- Available via Homebrew (`brew install aionui`) on macOS.

Safety & Permission Model: AionUi inherits the permission model of the underlying CLI tool it wraps. Its own data handling keeps conversations in a local SQLite database with no cloud upload. Remote WebUI access can be secured with QR code login or account password. The tool does not add independent sandboxing for agent execution.

Pros:

- Local data storage with no cloud dependency for conversation data.

- Cross-platform support including Windows, where some competitors are macOS-only.

- Broad model support reduces dependency on any single AI provider.

Cons:

- Agent execution safety depends entirely on the underlying CLI tool’s permission model.

- No container isolation for agent commands.

- Image generation features require additional API keys with external data transfer.

Repository: https://github.com/iOfficeAI/AionUi

What Makes a Good OpenClaw Alternative?

Choosing an OpenClaw alternative means balancing what you need it to do against how much you trust it with system access. Look for these features when comparing options:

Strict isolation. Application-level permission checks can be bypassed. The projects that hold up under scrutiny run agent commands inside Docker containers or OS-level sandboxes, so even a compromised or misbehaving agent cannot reach the host filesystem.

Auditable code. OpenClaw’s codebase has grown to a scale where most users cannot realistically verify what it does. A smaller alternative lets you read the source before handing it shell access to your machine. Several projects in this list have codebases under 5,000 lines.

Low resource footprint. OpenClaw’s Node.js runtime is not suitable for ARM single-board computers or VPS instances under 1GB RAM. The best alternatives here run on hardware that costs less than ten dollars a month.

Explicit permission surfaces. The default-allow pattern is what got OpenClaw into trouble. Look for tools that require you to grant access to specific directories, channels, or tool categories before the agent can use them.

Local model support. Running the inference model on the same machine as the agent keeps all data on-premises. Several alternatives here support Ollama and LM Studio endpoints out of the box.

Best OpenClaw Alternatives by Use Case

Best for security and local-first usage: nullclaw and ZeroClaw are the strongest choices. nullclaw encrypts API keys with ChaCha20-Poly1305, auto-detects the best available sandbox backend, and scopes filesystem access to a defined workspace with symlink escape detection. ZeroClaw offers deny-by-default channel policies, optional Docker sandboxing for shell execution, and a pairing-protected gateway that binds to localhost by default.

Best for strict permission control: nanoclaw and ZeroClaw both offer OS-level container isolation rather than relying only on application-level checks; in ZeroClaw it comes from the optional Docker runtime. nanoclaw is simpler to audit; ZeroClaw offers more configuration options and broader platform support.

Best for developers: nanobot provides a clean Python codebase built for research and customization, with straightforward local model integration and an active contributor roadmap.

Best for multi-agent workflows: TinyClaw runs multiple specialized agents in parallel, each with an isolated workspace and conversation history. Messages route to specific agents using @agent_id syntax across Discord, WhatsApp, and Telegram.

Best for long-term personal workflows: Hermes Agent is the strongest choice for recurring tasks where performance improvement across sessions matters. Its skill system accumulates task-specific knowledge over weeks of use, producing faster and more accurate results on repeated task types.

Best GUI experience: AionUi wraps Claude Code, Gemini CLI, Codex, and other CLI tools in a unified desktop interface with all conversation data stored locally in SQLite.

Why Safety Matters for AI Agents Like OpenClaw

AI agents with high system permissions present real-world risks that differ from traditional software. Recent research and security advisories have highlighted several concerns:

- Remote Code Execution Vulnerabilities: In early 2026, a critical flaw (CVE-2026-25253) allowed attackers to hijack OpenClaw instances by tricking users into visiting malicious websites. The attack exploited token leakage to gain full control of the gateway host.

- Exposed Instances: Security scans identified over 40,000 OpenClaw deployments exposed to the public internet. Many of these ran with default configurations, binding to all network interfaces without authentication.

- Malicious Skills: The ClawHub skills marketplace has been a vector for malware. Analysis of approximately 3,000 skills found a 10.8 percent infection rate, with plugins designed to steal cryptocurrency wallets and cloud service tokens. Another report found 7.1 percent of 3,984 skills contained severe security defects.

- Indirect Prompt Injection: Attackers can embed hidden instructions in web pages that AI agents read. When an agent processes such content, it may unknowingly execute commands that compromise the system.

- Plaintext Credential Storage: OpenClaw stores API keys and tokens in JSON files without encryption. Infostealer malware can harvest these credentials, giving attackers persistent access to connected services.

These issues have led developers and security-conscious users to seek alternatives with stronger isolation, clearer permission models, and more predictable execution behavior.

Final Thoughts

OpenClaw proved that people want AI agents that live in their messaging apps and actually get things done. Its rapid growth shows that agentic AI has moved from a research concept to a practical tool.

The security problems that showed up don’t mean we should give up on AI agents. They mean developers need to build safety in from the start, not add it later.

The projects here each solve the safety problem differently. ZeroClaw reaches for Rust’s memory safety and container isolation. nanoclaw bets on a codebase small enough to audit before trusting. nullclaw compresses the entire runtime to a 678 KB binary with multi-layer sandbox auto-detection. Hermes Agent takes a different angle entirely, focusing on what the agent learns across sessions rather than just what it can do in a single one.

OpenClaw remains a valid option for users who need its skill ecosystem and platform support. Run the latest version, enable Docker sandboxing, and keep the gateway off the public internet.

The alternatives here are not just OpenClaw without the risk. Each reflects a different judgment about what a personal agent should be: a minimal, auditable tool; an ultra-efficient edge runtime; or a system that gets better the longer it runs.

FAQs

Q: Is OpenClaw safe to use?

A: OpenClaw can be used safely with proper configuration, but default settings have led to widespread exposures. Security researchers identified multiple critical vulnerabilities in early 2026, though patches were released quickly. Users should run the latest version, avoid exposing the gateway to the internet, use container sandboxing, and carefully vet any installed skills.

Q: Is OpenClaw fully open source?

A: Yes, OpenClaw is open source and available on GitHub. It recently transitioned to the OpenClaw Foundation with support from OpenAI.

Q: Can OpenClaw alternatives run locally?

A: All seven alternatives listed run locally or can be self-hosted. ZeroClaw and nanobot are designed specifically for local deployment with minimal dependencies. Hermes Agent and AionUi also store conversation data locally by default.

Q: Do safer AI agents limit functionality?

A: Safety features can restrict some capabilities. Containerized agents may have limited filesystem access by default. However, for many automation use cases, such as file organization, news summarization, and scheduled tasks, these limitations don’t reduce practical utility.

Q: Are open-source AI agents suitable for production use?

A: Several of these tools are used in production environments. ZeroClaw’s trait-based architecture and memory system are designed for reliability. TinyClaw’s file-based queue prevents race conditions in multi-agent setups. Hermes Agent’s approval flows provide explicit human sign-off on sensitive actions before the agent proceeds.

Q: What is the difference between permission control and sandboxing?

A: Permission control uses software checks to decide whether an action is allowed. Sandboxing uses OS-level isolation (containers, virtual machines) to limit what an action can affect, even if allowed.

Changelog:

Apr 11, 2026

- Replaced OpenWork with Hermes Agent.