nanochat is a full-stack, open-source implementation of a ChatGPT-like large language model that can be trained from scratch.

Created by Andrej Karpathy, the AI researcher behind nanoGPT and former Tesla AI director, this project provides you with a comprehensive pipeline that encompasses tokenization, pretraining, fine-tuning, evaluation, inference, and web serving. The entire codebase consists of around 8,000 lines of mostly Python code with some Rust for tokenizer training.

You can train a conversational AI model for around $100 in just 4 hours using a single 8XH100 GPU node (costing roughly $24 per hour). The resulting model weighs in at approximately 561M parameters, small enough to run on modest hardware but capable of holding basic conversations, answering questions, and even generating creative content like stories and poems.

Features

- Complete Training Pipeline: Covers every step from raw data to a working chatbot, including tokenization using a custom Rust-based tokenizer, pretraining on educational web content, midtraining for task-specific improvements, supervised finetuning, and optional reinforcement learning.

- Minimal Dependencies: The project maintains a clean, dependency-lite codebase with approximately 2,004 lines in the uv.lock file, making it easy to understand, modify, and troubleshoot without navigating complex framework abstractions.

- Scalable Architecture: While the default configuration trains a 561M parameter model, you can scale up to larger models like the depth-26 variant (~$300, 12 hours) that slightly outperforms GPT-2, or even a $1,000 tier model with better performance on standard benchmarks.

- Built-in Evaluation Suite: Automatically runs the model through multiple benchmarks including ARC-Challenge, ARC-Easy, GSM8K, HumanEval, and MMLU, generating a comprehensive report card that tracks performance across training stages.

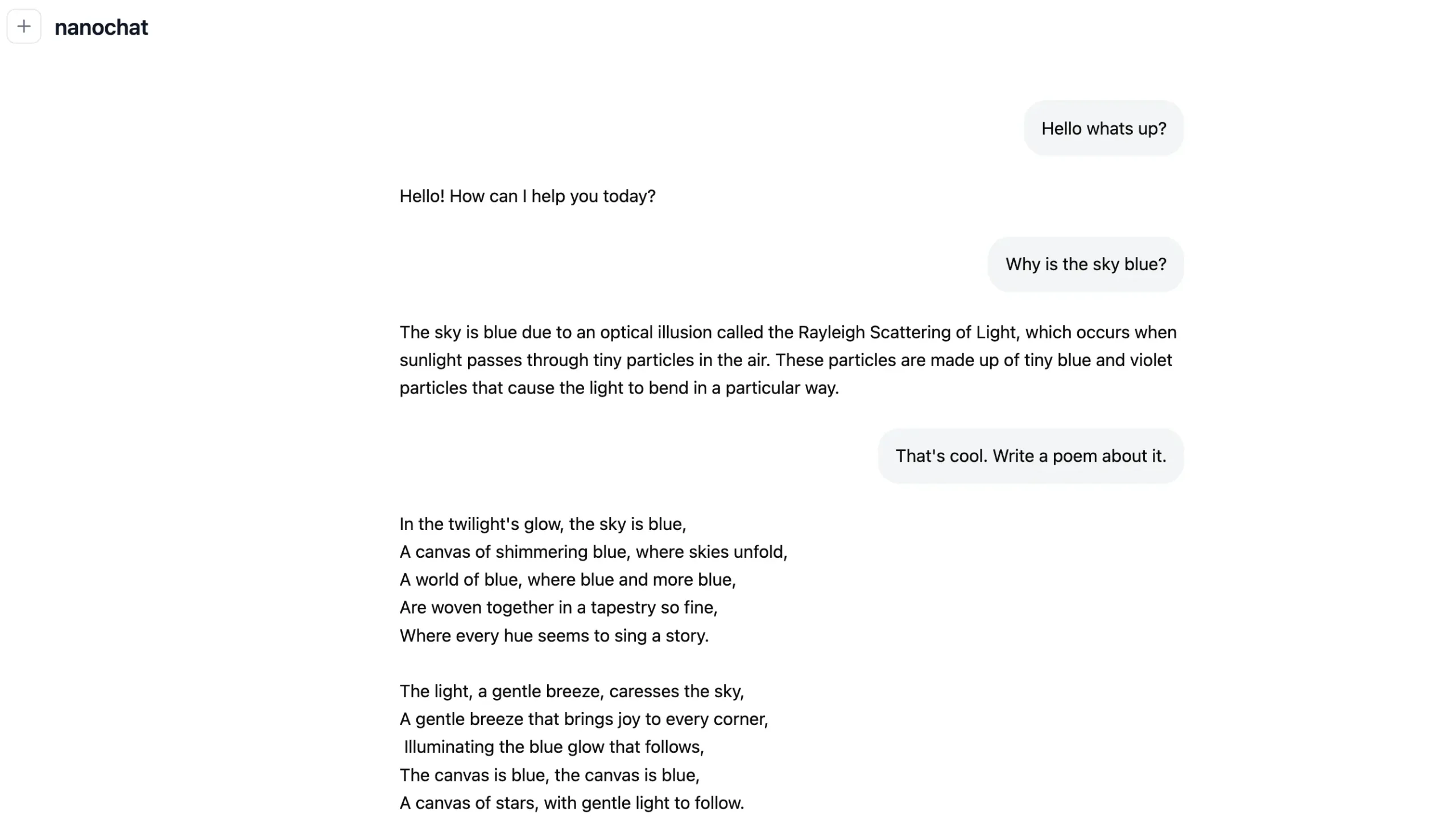

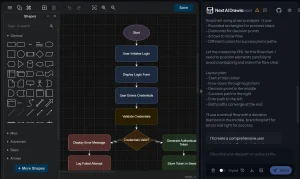

- ChatGPT-Style Web Interface: Includes a clean, functional web UI built with vanilla HTML and JavaScript that lets you interact with your trained model just like using ChatGPT, making it easy to test and demonstrate your model’s capabilities.

- Single-Script Execution: The speedrun.sh script automates the entire process from start to finish, handling data downloading, model training across multiple stages, evaluation, and deployment without manual intervention.

- Flexible Hardware Support: Runs on 8XH100 nodes for fastest training, works fine on 8XA100 nodes (slightly slower), and can even run on a single GPU by automatically switching to gradient accumulation (though it takes 8 times longer).

- Educational Focus: Designed as the capstone project for Karpathy’s LLM101n course at Eureka Labs, the code prioritizes readability and learning over exhaustive configurability, making it perfect for understanding how modern LLMs actually work.

Use Cases

AI Education and Research

Students and researchers can explore the complete lifecycle of LLM development without needing million-dollar budgets. The clean codebase serves as a learning tool for understanding transformer architectures, training dynamics, and finetuning strategies. Universities can incorporate nanochat into coursework for hands-on machine learning education.

Rapid Prototyping

Developers testing new training techniques, data preprocessing methods, or model architectures can iterate quickly with nanochat’s fast training cycles. The 4-hour base training time means you can test multiple hypotheses in a single day without waiting weeks for results.

Custom Domain Models

Organizations wanting specialized conversational AI for niche topics can train models on their own curated datasets. Since the entire pipeline is transparent and hackable, you can swap in domain-specific data, adjust training objectives, and finetune for particular use cases like medical Q&A, legal assistance, or technical support.

Hardware Benchmarking

Cloud providers and GPU manufacturers can use nanochat as a standardized benchmark for measuring training performance across different hardware configurations. The predictable 4-hour runtime provides a consistent metric for comparing compute efficiency.

Open Research Replication

Researchers can verify claims about small-scale LLM capabilities by training models themselves rather than relying on proprietary systems. The reproducible pipeline ensures that results can be independently validated and built upon by the community.

How to Use It

1. Rent an 8XH100 GPU node from a cloud provider like Lambda, which costs around $24 per hour. Make sure the machine has enough storage for the training data (approximately 24GB) and sufficient memory for the model checkpoints.

2. Clone the nanochat repository from GitHub and navigate into the project directory. The repository includes all necessary code and scripts, so you won’t need to install many external dependencies beyond what’s in the lock file.

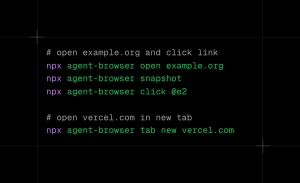

3. Launch the training process using the speedrun.sh script. For long-running sessions, open a new screen session to keep the process running even if you disconnect.

screen -L -Logfile speedrun.log -S speedrun bash speedrun.sh

You can detach from the screen with Ctrl-a d and check progress by tailing the log file.

3. The script downloads training data (approximately 450 shards for the base model), trains the tokenizer, runs base pretraining for about 3.5 hours, performs midtraining on curated datasets, executes supervised finetuning on task-specific examples, and generates evaluation metrics across multiple benchmarks.

4. Once training completes (roughly 4 hours later), activate the uv virtual environment with source .venv/bin/activate and start the web server using python -m scripts.chat_web. The server launches on port 8000 by default.

5. Access the web interface by opening your browser and navigating to the public IP of your GPU node followed by the port number. For example, if your Lambda node has IP 209.20.xxx.xxx, you’d visit http://209.20.xxx.xxx:8000/. The web UI mimics ChatGPT’s layout and lets you type messages and receive responses from your newly trained model.

6. Review the automatically generated report.md file in the project directory to see your model’s performance metrics. This report card shows scores across different evaluation tasks at each training stage, helping you understand what your model can and cannot do well.

Pros

- Affordability: The ability to train a conversational AI for about $100 is a huge deal. It lowers the barrier to entry for developers and researchers.

- Educational Value: The clean, minimal codebase is perfect for learning. It’s not a black box; you can actually read the code and understand how it works.

- Completeness: It’s a full-stack solution. You’re not just training a model; you’re building the entire system around it.

- Open Source: The MIT license means you have the freedom to use and modify it for your own projects.

Cons

- Basic Performance: Let’s be real: the $100 model is not going to compete with GPT-4. Karpathy himself describes its capabilities as being like a kindergartener’s. It can hold a conversation, but it’s prone to hallucinations and struggles with complex reasoning.

- Hardware Requirements: While you can run it on a single GPU, it’s significantly slower. To get the intended experience, you really need access to a powerful (and often costly) multi-GPU setup.

Related Resources

- nanoGPT: Karpathy’s earlier project focusing specifically on GPT pretraining, which served as the foundation for nanochat’s training approach and philosophy.

- modded-nanoGPT: A gamified version of nanoGPT with leaderboards and clear metrics that inspired many of nanochat’s optimization ideas and evaluation strategies.

- FineWeb-Edu: The educational web content dataset from HuggingFace that nanochat uses for pretraining, providing high-quality filtered text data.

- SmolTalk Dataset: The conversational dataset from HuggingFace used during midtraining and supervised finetuning to teach the model dialogue patterns.

- LLM101n Course: Karpathy’s educational course on building language models from scratch, for which nanochat serves as the practical capstone project.

FAQs

Q: Can I train nanochat on my personal computer with a single GPU?

A: Yes, but with caveats. The code will automatically switch to gradient accumulation if you run it without torchrun, producing nearly identical results. However, training will take about 8 times longer (roughly 32 hours instead of 4), and you’ll need at least 80GB of VRAM to run with default settings. If your GPU has less memory, reduce the device_batch_size parameter until things fit. You can go from 32 down to 16, 8, 4, 2, or even 1.

Q: How does the $100 model compare to GPT-2 or GPT-3?

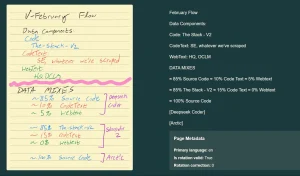

A: The base $100 model (around 561M parameters) performs below GPT-2 on most benchmarks. It scores roughly 22% on CORE metrics and gets into the 20-30% range on multiple-choice tests. If you scale up to the $300 tier (depth-26 model, 12 hours of training), you’ll slightly outperform GPT-2. The $1,000 tier model achieves scores in the 40s on MMLU and 70s on ARC-Easy, approaching GPT-3 Small (125M) performance levels but still far below modern commercial models.

Q: What can I actually do with a model trained for $100?

A: You can have basic conversations, ask it to write simple stories or poems, and pose straightforward factual questions. The model will attempt to answer but often hallucinates facts and struggles with complex reasoning. Think of it as talking to someone with limited knowledge who sometimes makes things up confidently. It’s better suited for learning how LLMs work, experimenting with training techniques, or as a starting point for further development than as a production assistant.

Q: Do I need to know Rust to use or modify nanochat?

A: No. The Rust component only handles tokenizer training using a BPE implementation. The tokenizer runs once at the start of the pipeline and produces a vocabulary file that the Python code uses for the rest of the process. You can work with and modify 95% of nanochat knowing only Python and PyTorch. The Rust part is isolated and doesn’t require changes for most experiments.

Q: How much does it cost to run inference after training?

A: Running inference is dramatically cheaper than training. The 561M parameter model can run on consumer hardware including older GPUs, MacBooks, or even Raspberry Pi devices. You can serve it from a cheap cloud instance for pennies per hour or run it locally for free. One person managed to run it on CPU on macOS using a modified script, though it was slow.

Q: Can I train nanochat on my own custom dataset instead of FineWeb-Edu?

A: Absolutely. The codebase is designed to be hackable. You’ll need to modify the data loading section in the training scripts to point to your own dataset. Make sure your data is properly formatted (the code expects text files split into shards) and that you have enough data for your target model size. A good rule of thumb is 20 tokens per parameter, so a 561M parameter model needs about 11 billion tokens.