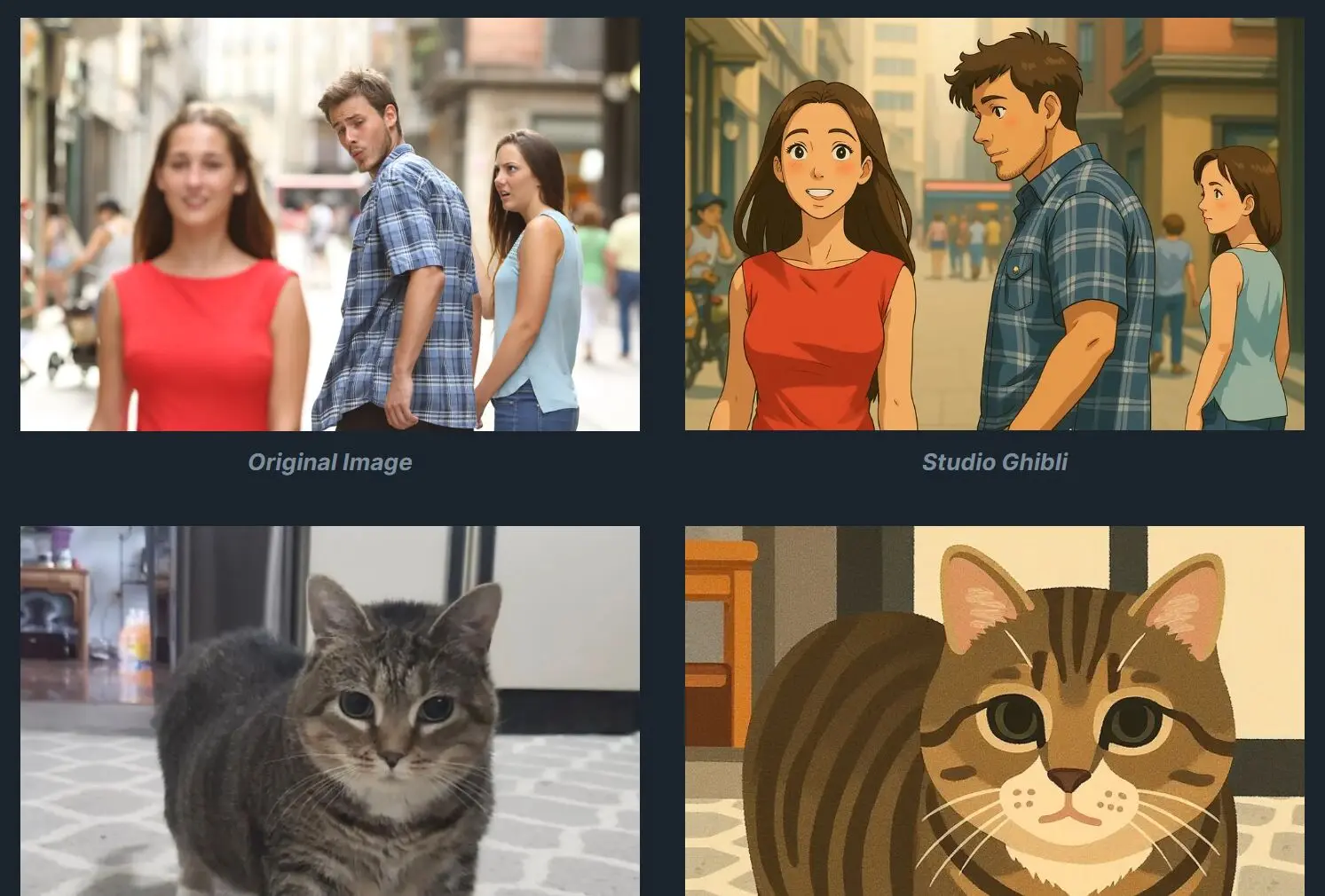

OminiControl Art is a free AI style transfer tool that brings the artistic style capabilities of GPT-4o image generation (particularly Studio Ghibli, which seems to be everywhere right now) to the open-source community through the FLUX.1 model.

Built on the OminiControl framework, it transforms ordinary images into stylized artwork reminiscent of Studio Ghibli, Irasutoya illustrations, The Simpsons, or Snoopy.

The Studio Ghibli style has become particularly popular since GPT-4o’s image generation capabilities were released. This tool serves as a free alternative for those who want similar results without subscription costs.

How To Use OminiControl Art

1. Go to the OminiControl Art Hugging Face Space.

2. Find the image upload section and drop your file or browse to select it.

3. Select one of the available artistic styles:

- Snoopy

- Studio Ghibli

- Irasutoya Illustration

- The Simpsons

4. Adjust Settings (Optional):

- Choose between ‘High Quality’ (better results, ~20s) or ‘Fast’ (quicker preview).

- Select an ‘Image Ratio’ if you don’t want the tool to guess.

- Turn ‘Use Random Seed’ off if you want reproducible results, or leave it on if you want a different variation each run.

- Tweak ‘Image Guidance’ and ‘Steps’ if the initial result isn’t quite right. Starting with defaults is usually fine.

5. Click the ‘Generate Image’ button. Wait for it to process (around 20 seconds on HQ). Your stylized image will be ready for download.
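To make the optional settings in step 4 more concrete, here is a minimal Python sketch of how a seed toggle and an automatic ratio guess typically behave. It is not the Space's actual code: the `StyleSettings` fields, the `PRESET_RATIOS` list, and both helper functions are hypothetical names chosen for illustration, and the real tool may pick its ratio and seed differently.

```python
import random
from dataclasses import dataclass
from typing import Optional

# Hypothetical preset list; the Space's real ratio options may differ.
PRESET_RATIOS = {
    "1:1": 1 / 1,
    "4:3": 4 / 3,
    "3:4": 3 / 4,
    "16:9": 16 / 9,
    "9:16": 9 / 16,
}


@dataclass
class StyleSettings:
    """Illustrative mirror of the form fields, not the Space's API."""
    style: str = "Studio Ghibli"
    high_quality: bool = True
    image_ratio: Optional[str] = None  # None means "let the tool guess"
    use_random_seed: bool = True
    seed: int = 42  # only used when use_random_seed is False


def resolve_seed(settings: StyleSettings) -> int:
    """Return a fresh seed when randomness is on, else the fixed seed."""
    if settings.use_random_seed:
        return random.randint(0, 2**32 - 1)
    return settings.seed


def guess_ratio(width: int, height: int) -> str:
    """Pick the preset whose width/height value is closest to the input's."""
    target = width / height
    return min(PRESET_RATIOS, key=lambda name: abs(PRESET_RATIOS[name] - target))


if __name__ == "__main__":
    fixed = StyleSettings(use_random_seed=False, seed=1234)
    print(resolve_seed(fixed), resolve_seed(fixed))  # same seed both times
    print(guess_ratio(1920, 1080))  # closest preset for a landscape photo
```

With the toggle off, the same seed is reused on every run, which is why the same input and settings should give the same output. With it on, each run draws a new seed and therefore a new variation.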

Pros

- Completely Free: No cost to use the tool.

- Very Easy: Dead simple web interface. No setup required.

- Specific Styles: Delivers on the promised artistic styles, especially the popular Ghibli one.

- Fast Enough: ~20 seconds for high quality is reasonable for a free web tool.

- Lightweight Tech: Built on OminiControl, which adds image conditioning to FLUX.1 with only a small number of extra parameters.

- Open Source Roots: Based on open research and models.

Cons

- Limited Style Choice: Only four styles available right now. If you need something else, you’re out of luck.

- Web-Based Limitations: You need to upload images, which might be a concern for sensitive content. Performance depends on Hugging Face server load.

- Less Control: You can’t fine-tune the styles or add your own like you could with local models (e.g., Stable Diffusion + ControlNet/LoRAs).

- Style Consistency Varies: Results depend heavily on the input image. Complex scenes might not translate as well as simpler ones.

Related Resources

- OminiControl: For those interested in the underlying framework’s code.

- FLUX.1: If you want to understand the base Diffusion Transformer model.

FAQs

Q: What’s the main difference between ‘High Quality’ and ‘Fast’ modes?

A: ‘High Quality’ uses more computational steps to generate the image, leading to potentially better detail, texture, and style adherence, but takes longer (~20 seconds). ‘Fast’ uses fewer steps for quicker generation, potentially sacrificing some finer details.
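The trade-off can be sketched with simple arithmetic: each sampling step is one pass through the model, so generation time grows roughly linearly with the step count. The step counts below (25 for ‘High Quality’, 10 for ‘Fast’) are assumptions made up for illustration, not values taken from the Space, and `estimate_seconds` is a hypothetical helper.

```python
def estimate_seconds(steps: int, seconds_per_step: float,
                     overhead_seconds: float = 0.0) -> float:
    """Rough linear model: fixed overhead plus one model pass per step."""
    if steps <= 0:
        raise ValueError("steps must be positive")
    return overhead_seconds + steps * seconds_per_step


if __name__ == "__main__":
    # Assumed step counts; the real presets are not documented here.
    hq_steps, fast_steps = 25, 10
    # Back out a per-step cost from the ~20 s High Quality figure.
    per_step = 20.0 / hq_steps
    print(f"High Quality: ~{estimate_seconds(hq_steps, per_step):.0f} s")
    print(f"Fast:         ~{estimate_seconds(fast_steps, per_step):.0f} s")
```

Actual times also depend on queueing and server load, which this linear model ignores.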

Q: Can I add my own artistic styles to this tool?

A: Not directly within the public Hugging Face Space. Since the underlying OminiControl framework is open source, someone with the technical skills could potentially train controls for new styles and modify the code, but that’s beyond the scope of just using the online tool.

Q: What is FLUX.1?

A: FLUX.1 is a family of image generation models from Black Forest Labs built on a Diffusion Transformer architecture. Transformers, the architecture behind large language models, have been adapted for image generation, and FLUX.1 is one of the newer models in this area. OminiControl is specifically designed to work with models like it.

Q: Does it work well on any image?

A: Results can vary quite a bit. Simpler images with clear subjects tend to work better. Complex scenes, very detailed textures, or abstract inputs might produce less predictable or less coherent results depending on the style chosen. It’s worth trying a few different inputs to see what works best.