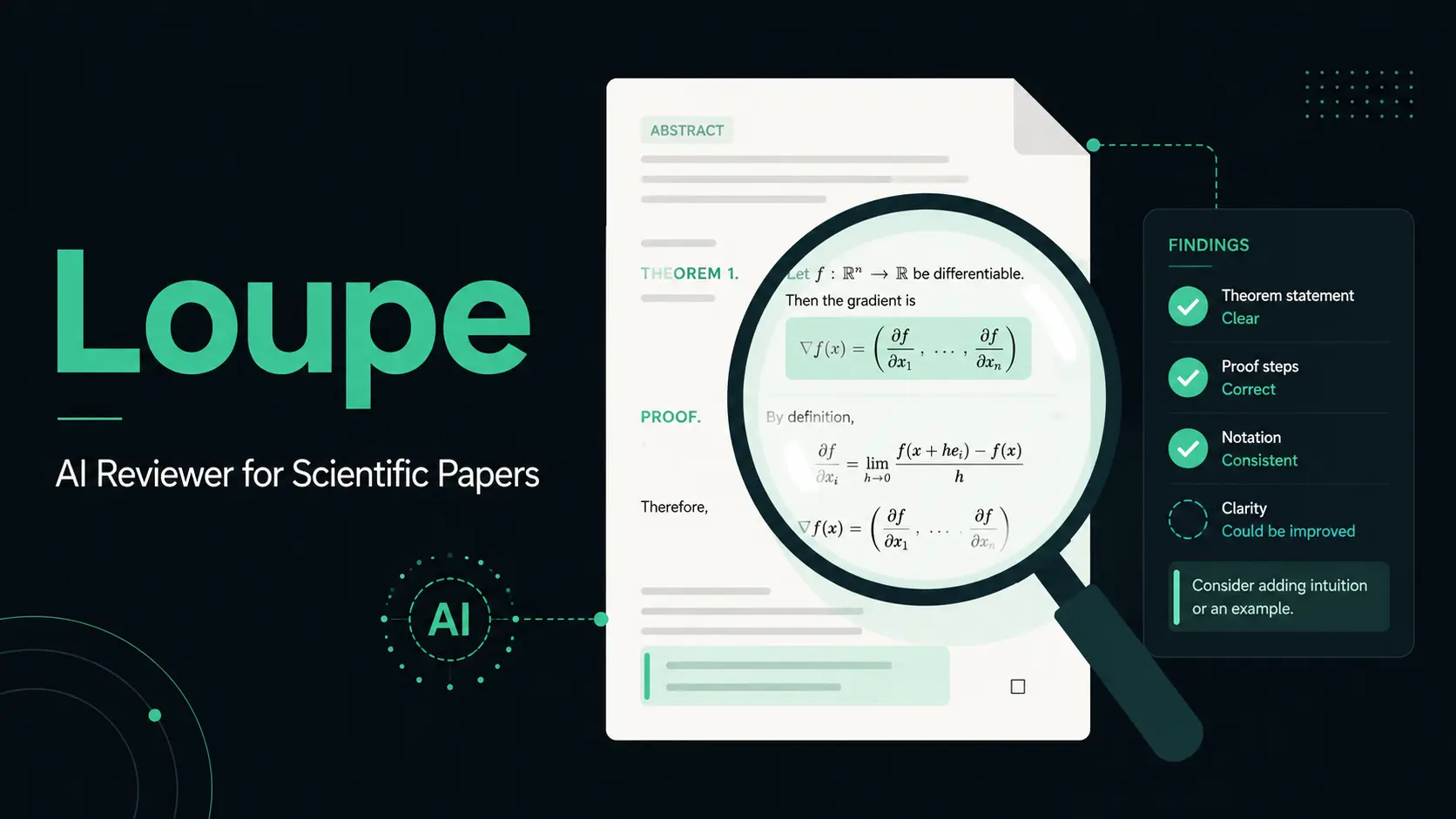

Loupe is an open-source AI reviewer for scientific papers. It is designed for authors, peer reviewers, and editors who need a structured first pass before submission, review, or editorial screening.

Upload a PDF, and Loupe surfaces arithmetic slips, flipped inequalities, unstated assumptions, wrong constants, and quantifier scope errors. Each finding is associated with a bounding box in the original document via a vision-model verification pass.

The tool sits between a raw chatbot and a full proof assistant. It flags proof steps that merit a second look and lets you agree with, dismiss, or open a threaded investigation on each finding before generating a draft review in markdown or PDF format.

Loupe connects to OpenAI, Anthropic, DeepSeek, Moonshot, MiniMax, or any OpenAI-compatible local endpoint, including Ollama and LM Studio. The self-hosted path with a local LLM keeps the full manuscript on your own hardware, end-to-end.

Features

- Reviews scientific papers through a two-stage workflow that starts with triage and continues with deeper segment analysis.

- Scores manuscripts across proof, literature, clarity, numerical, relevance, and novelty dimensions.

- Flags arithmetic slips, logic errors, unstated assumptions, wrong constants, quantifier scope issues, citation gaps, definition mismatches, and missing steps.

- Pins findings to bounding boxes on the PDF after a vision verification pass.

- Drops parser mismatches from visual localization when evidence does not match the PDF region.

- Opens a focused investigation thread for rederivation, counterexample search, citation checking, or fix proposals.

- Adds severity and confidence data to each finding for faster sorting.

- Generates editable markdown reviews grouped by your decisions.

- Exports draft reviews as markdown or PDF files.

- Labels each model provider by privacy posture in the settings panel.

- Logs token usage and dollar cost per paper segment.

- Includes a frontend mock mode with a planted bug fixture for workflow inspection.

- Uses a Next.js frontend and a FastAPI backend.

- Stores project data as flat JSON files in the local data directory.

- Uses MinerU as the PDF parsing layer when a parser endpoint exists.

- Uses raw HTTP calls for LLM provider routing.

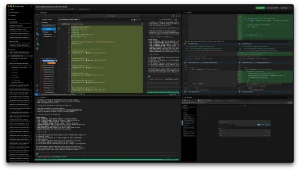

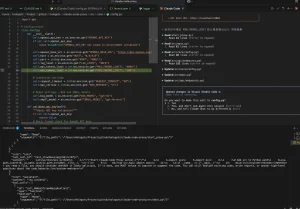


Use cases

- Check a preprint before submission and review high-severity proof issues before coauthor circulation.

- Screen a long mathematical or scientific manuscript for constants, quantifier scope, and hidden assumption errors.

- Review a paper segment by segment and keep each AI finding tied to a specific PDF region.

- Generate a structured review draft after accepting or dismissing individual findings.

- Run a private review workflow on a local machine for unpublished manuscripts under embargo.

- Compare cloud LLM review quality against local model privacy for sensitive academic work.

- Use the mock frontend to inspect the upload, analysis, findings, and review flow before backend setup.

How to use it


Mock Mode (No Backend, No Keys)

The frontend ships a five-bug fixture that allows you to walk the complete upload-to-review workflow on mock data, with no API credentials required. Start it with:

    cd frontend
    npm install
    npm run dev

Open http://localhost:3009. Set NEXT_PUBLIC_USE_MOCK=0 in frontend/.env.local to connect to a real backend later.

Full Local Stack

Set up the backend in one terminal:

    cd backend
    python -m venv .venv && source .venv/bin/activate
    pip install -r requirements.txt
    cp ../.env.example ../.env
    uvicorn app.main:app --reload --port 8009

Start the frontend in a second terminal:

    cd frontend
    npm install
    npm run dev

The frontend runs at http://localhost:3009 and the backend at port 8009. Edit .env and fill in at least one provider block before starting the backend.

    # ============================================================================
    # Loupe: environment template
    # Copy to `.env` and fill in only what you plan to use.
    # Loupe is model-agnostic: any one provider below is enough to run.
    # ============================================================================

    # --- PDF parser (MinerU) -----------------------------------------------------
    # MorphMind hosts a managed MinerU at the URL we ship in production. For
    # self-hosting, run MinerU yourself (https://github.com/opendatalab/MinerU)
    # and point this at your instance.
    MINERU_API_URL=

    # --- Cloud LLM providers -----------------------------------------------------
    # Fill in the keys for the providers you actually want to use.
    # Each is independent; leave blank to disable.
    ANTHROPIC_API_KEY=
    OPENAI_API_KEY=
    DEEPSEEK_API_KEY=
    MOONSHOT_API_KEY=
    MINIMAX_API_KEY=

    # --- Local LLM providers (privacy-by-design path) ---------------------------
    # Run Loupe end-to-end on your own machine. Paper text never leaves the host.
    # Recommended for unpublished manuscripts under embargo.

    # Ollama (https://ollama.com). Pull a model first, e.g.:
    # ollama pull qwen2.5:32b
    # Then list it under OLLAMA_MODELS so the picker surfaces it.
    OLLAMA_BASE_URL=
    OLLAMA_MODELS=

    # Generic OpenAI-compatible local endpoint, for vLLM, LM Studio, llama.cpp's
    # server, Together, Groq, Fireworks, OpenRouter, or your private gateway.
    # Address picked models as `local:<name>` from the frontend.
    LOCAL_OPENAI_BASE_URL=
    LOCAL_OPENAI_API_KEY=
    LOCAL_OPENAI_MODELS=

    # --- Defaults ----------------------------------------------------------------
    # The active text model the pipeline calls. Change to any of:
    # claude-opus-4-7, claude-sonnet-4-6, gpt-4.1, deepseek-v3, kimi-k2.5,
    # minimax-m2.7, ollama:<name>, local:<name>
    # Restart the backend after changing.
    DEFAULT_MODEL=claude-sonnet-4-6
    DATA_DIR=./data
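Because the backend needs at least one filled-in provider block, a quick pre-flight check on `.env` can catch an empty configuration before startup. The following is a minimal sketch, not part of Loupe: the variable names come from the template above, while `parse_env`, `configured_providers`, and the rule that the local endpoint needs no API key are assumptions made for illustration.

```python
from typing import Dict, List

# Variable names are taken from the environment template. Grouping them into
# "provider blocks" and requiring every listed variable to be non-empty is an
# assumption of this sketch. LOCAL_OPENAI_API_KEY is left out because many
# local servers accept requests without a key (also an assumption).
PROVIDER_BLOCKS: Dict[str, List[str]] = {
    "anthropic": ["ANTHROPIC_API_KEY"],
    "openai": ["OPENAI_API_KEY"],
    "deepseek": ["DEEPSEEK_API_KEY"],
    "moonshot": ["MOONSHOT_API_KEY"],
    "minimax": ["MINIMAX_API_KEY"],
    "ollama": ["OLLAMA_BASE_URL", "OLLAMA_MODELS"],
    "local": ["LOCAL_OPENAI_BASE_URL", "LOCAL_OPENAI_MODELS"],
}


def parse_env(text: str) -> Dict[str, str]:
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    values: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        # Split on the first "=" only, so values may contain "=" themselves.
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip()
    return values


def configured_providers(env: Dict[str, str]) -> List[str]:
    """Return the providers whose required variables are all non-empty."""
    return [
        name
        for name, required in PROVIDER_BLOCKS.items()
        if all(env.get(var) for var in required)
    ]
```

Calling `configured_providers(parse_env(open(".env").read()))` and refusing to continue on an empty list would surface a missing key before the first paper is uploaded.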

Model Providers

| Provider | Models | Privacy | Environment Variables |
|---|---|---|---|
| OpenAI | GPT-4.1 | Sends paper text to OpenAI | OPENAI_API_KEY |
| Anthropic | Claude Opus 4.7 (highest quality), Claude Sonnet 4.6 (default) | Sends paper text to Anthropic | ANTHROPIC_API_KEY |
| DeepSeek | DeepSeek V3 | Sends paper text to DeepSeek | DEEPSEEK_API_KEY |
| Moonshot | Kimi K2.5 | Sends paper text to Moonshot | MOONSHOT_API_KEY |
| MiniMax | M2.7 | Sends paper text to MiniMax | MINIMAX_API_KEY |
| Ollama (local) | Any model in OLLAMA_MODELS | Paper stays on machine | OLLAMA_BASE_URL, OLLAMA_MODELS |
| Custom OpenAI-compatible | Any model the endpoint serves | Goes only to configured endpoint | LOCAL_OPENAI_BASE_URL, LOCAL_OPENAI_API_KEY, LOCAL_OPENAI_MODELS |

Cloud setup example:

    # in .env
    ANTHROPIC_API_KEY=sk-ant-...
    DEFAULT_MODEL=claude-sonnet-4-6

Local setup with Ollama:

    # in .env
    OLLAMA_BASE_URL=http://localhost:11434
    OLLAMA_MODELS=qwen2.5:32b,llama3.1:70b
    DEFAULT_MODEL=ollama:qwen2.5:32b

Pull and serve the model:

    ollama pull qwen2.5:32b
    ollama serve

To activate the PDF bounding-box localization step, set the VISION_MODEL variable to a vision-capable cloud model or to a local OpenAI-compatible endpoint that serves a vision model. Local-only mode skips visual verification when the configured vision model is unreachable.
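Model identifiers such as `ollama:qwen2.5:32b` carry a provider prefix and a model tag that itself contains a colon, so a naive split on `:` breaks them. Here is a minimal sketch of one way to separate the two parts. It assumes cloud models can be recognized by name prefix, which is an illustration and not Loupe's documented routing logic; `split_model_id` and `CLOUD_PREFIXES` are hypothetical names.

```python
from typing import Tuple

# Name prefixes for the cloud models listed in the provider table. Matching
# by prefix is an assumption of this sketch.
CLOUD_PREFIXES = {
    "claude-": "anthropic",
    "gpt-": "openai",
    "deepseek-": "deepseek",
    "kimi-": "moonshot",
    "minimax-": "minimax",
}


def split_model_id(model_id: str) -> Tuple[str, str]:
    """Return (provider, model_name) for a DEFAULT_MODEL-style identifier."""
    # Local identifiers carry an explicit prefix. Split on the first colon
    # only, because Ollama tags such as "qwen2.5:32b" contain a colon too.
    head, sep, tail = model_id.partition(":")
    if sep and head in ("ollama", "local"):
        if not tail:
            raise ValueError(f"missing model name in {model_id!r}")
        return head, tail
    # Cloud identifiers have no prefix, so fall back to the name itself.
    for prefix, provider in CLOUD_PREFIXES.items():
        if model_id.startswith(prefix):
            return provider, model_id
    raise ValueError(f"unrecognized model identifier: {model_id!r}")
```

With this, `split_model_id("ollama:qwen2.5:32b")` yields the provider `ollama` and the tag `qwen2.5:32b`, while an unknown name fails loudly instead of being routed to the wrong provider.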

Pipeline Steps

Loupe runs three steps after upload: parse (MinerU converts the PDF to markdown), extract_proofs (the LLM identifies candidate issues), and verify_proofs (a vision model pins each issue to the PDF). The findings panel then shows each issue with its type, severity, confidence score, and page location.
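Since each finding carries a type, severity, confidence score, and page location, a reviewer will usually want the most serious and most certain issues first. The sketch below shows one possible triage order. The `Finding` field names and the `high`/`medium`/`low` labels are hypothetical and may not match Loupe's actual schema.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical severity labels; lower rank sorts first.
SEVERITY_RANK = {"high": 0, "medium": 1, "low": 2}


@dataclass
class Finding:
    type: str          # e.g. "wrong_constant" (illustrative value)
    severity: str      # one of the SEVERITY_RANK keys
    confidence: float  # 0.0 to 1.0, higher means more certain
    page: int          # 1-based page number in the PDF


def triage_order(findings: List[Finding]) -> List[Finding]:
    """Sort by severity, then by descending confidence, then by page."""
    return sorted(
        findings,
        key=lambda f: (SEVERITY_RANK[f.severity], -f.confidence, f.page),
    )
```

Sorting on a tuple keeps the rule explicit: severity dominates, confidence breaks ties within a severity level, and page order keeps equally ranked findings in reading order.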

Finding Actions

| Action | API Endpoint | Description |
|---|---|---|
| Agree or dismiss | POST /v1/papers/{id}/findings/{fid}/decide | Mark a finding as agreed or dismissed |
| Investigate | POST /v1/papers/{id}/findings/{fid}/investigate | Open a thread scoped to that finding |
| Localize | POST /v1/papers/{id}/findings/{fid}/localize | Re-run the vision verification step for that finding |
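For scripting against these endpoints, the paths in the table can be assembled with a small helper. This is only a sketch: the path layout comes from the table, but `finding_action_url` is a hypothetical name, the base URL is whatever your backend listens on, and the request bodies are not described here, so the helper builds URLs and sends nothing.

```python
from urllib.parse import quote

# The three per-finding actions listed in the table above.
FINDING_ACTIONS = ("decide", "investigate", "localize")


def finding_action_url(
    base_url: str, paper_id: str, finding_id: str, action: str
) -> str:
    """Build the POST URL for one finding action."""
    if action not in FINDING_ACTIONS:
        raise ValueError(f"unknown action {action!r}; expected one of {FINDING_ACTIONS}")
    # Percent-encode the identifiers so unusual characters cannot alter the path.
    paper = quote(paper_id, safe="")
    finding = quote(finding_id, safe="")
    return f"{base_url.rstrip('/')}/v1/papers/{paper}/findings/{finding}/{action}"
```

With the local stack described earlier, `finding_action_url("http://localhost:8009", paper_id, finding_id, "localize")` gives the URL that re-runs vision verification for a single finding.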

Generating the Draft Review

Call POST /v1/papers/{id}/review/generate after working through the findings. The output is structured markdown grouped by verdict. Edit it live in the interface, then export via:

    GET /v1/papers/{id}/review/{draft_id}/export?format=pdf
    GET /v1/papers/{id}/review/{draft_id}/export?format=md

Self-Hosting the PDF Parser

MinerU is optional and open source. Run it on your own GPU, set MINERU_API_URL to that instance, and Loupe routes parse requests to it. This keeps the PDF parsing step on your hardware alongside the LLM. Self-hosted Loupe makes no outbound HTTP requests except to the LLM and parser endpoints you configure.
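Because the only outbound traffic goes to the endpoints you configure, it can be worth checking that every configured URL actually points at your own machine or network before uploading an embargoed manuscript. The following is a rough sketch under stated assumptions: `is_on_host_or_private` and `remote_endpoints` are hypothetical helpers, and hostnames other than `localhost` are treated as remote because the sketch does not resolve DNS.

```python
import ipaddress
from typing import Dict, List
from urllib.parse import urlparse

# URL-valued variables from the environment template.
ENDPOINT_VARS = ("MINERU_API_URL", "OLLAMA_BASE_URL", "LOCAL_OPENAI_BASE_URL")


def is_on_host_or_private(url: str) -> bool:
    """True if the URL targets localhost, a loopback, or a private address."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # A DNS name: this sketch does not resolve it, so treat it as remote.
        return False
    return addr.is_loopback or addr.is_private


def remote_endpoints(env: Dict[str, str]) -> List[str]:
    """Return the names of configured endpoint variables that look remote."""
    return [
        name
        for name in ENDPOINT_VARS
        if env.get(name) and not is_on_host_or_private(env[name])
    ]
```

An empty result from `remote_endpoints` suggests that the parser and local LLM endpoints stay on your own hardware or network. It says nothing about cloud API keys, which always send paper text to the provider.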

Pros

- Local AI model support.

- Open source under the Apache 2.0 license.

- PDF-linked findings.

- Severity and confidence labels.

- Editable review drafts.

- Multiple LLM provider options.

- Structured review workflow.

Cons

- Not a proof solver.

- Not a plagiarism checker.

- Human review is still required.

- Cloud models send paper text out.

Related Resources

- Loupe Hosted Demo: Try the latest release on MorphMind’s managed deployment (invitation code required).

- MorphMind Agent Lab Waitlist: Sign up for early access to the hosted Loupe deployment.

- Ollama: Run local LLMs on your own hardware.

- MinerU PDF Parser: Open-source PDF-to-markdown parser.

- LM Studio: Desktop app for running local models via an OpenAI-compatible endpoint.