This is an open-source Python script created for transcribing YouTube videos and playlists into text.

It integrates technologies like WhisperModel for transcription, SpaCy for natural language processing, and CUDA for GPU acceleration, aimed at efficiently processing video content. Can handle individual videos and entire playlists, outputting accurate transcripts and metadata.

How it works:

The script starts by determining whether to process a single video or a playlist. It sets up directories for storing audio, transcripts, and metadata. It adds CUDA Toolkit to utilize GPUs and configures transcription workers based on CPU cores.

It downloads the audio for each video, ensures unique names, and passes to WhisperModel for transcription, using GPUs if available. Transcripts are split into sentences with SpaCy or Regex. Metadata like timestamps and confidence scores are generated.

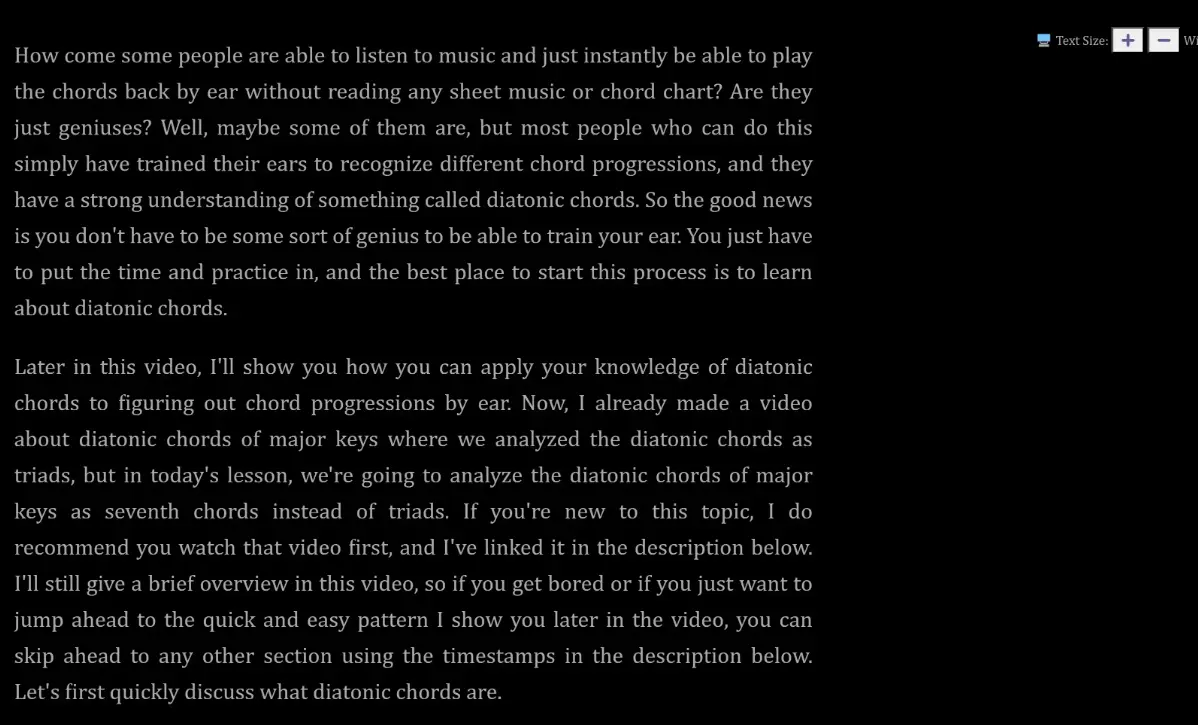

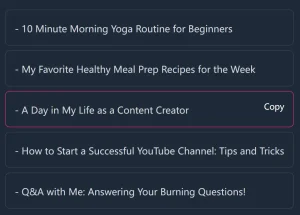

Transcripts are saved as plain text, CSV, JSON, and HTML for readability. A special HTML file transcript_reader.html offers “Reader Mode” to customize font, text size, width, and toggle dark mode.

How to use it:

1. Set Up Python Environment:

python3 -m venv venv source venv/bin/activate

2. Upgrade pip and Install Wheel:

python3 -m pip install --upgrade pip python3 -m pip install wheel

3. Install dependencies from requirements.txt.

pip install -r requirements.txt

4. Run the script with Python.

python3 bulk_transcribe_youtube_videos_from_playlist.py

5. Start transcribing YouTube Videos or Playlists.

6. Available parameters:

# Set this to 1 to process a single video, 0 for a playlist convert_single_video = 1 # The script either uses SpaCy or a custom regex-based method for sentence splitting. use_spacy_for_sentence_splitting = 1 # Limit the number of simultaneous downloads max_simultaneous_youtube_downloads = 4 # Set this to 1 to disable CUDA even if it is available disable_cuda_override = 1