As we move through each day, our brains interpret a constant stream of sensory cues to build a meaningful picture of our surroundings. How this continuous process works remains largely unknown.

Today, new research from Meta sheds light on this question. Using magnetoencephalography (MEG), a non-invasive neuroimaging technique that takes thousands of brain activity measurements every second, Meta presents an AI system that decodes how visual representations unfold in the brain over time. Meta describes the temporal resolution of this decoding as unprecedented.

Here are the highlights:

- The AI can operate in real time, reconstructing from brain activity the images the brain is perceiving and processing at each instant.

- Such a system could help scientists understand how images are represented in the brain and how those representations serve as a foundation of human intelligence.

- In the bigger picture, this could pave the way for non-invasive brain-computer interfaces. Imagine helping individuals who’ve lost their speech due to brain injuries communicate again.

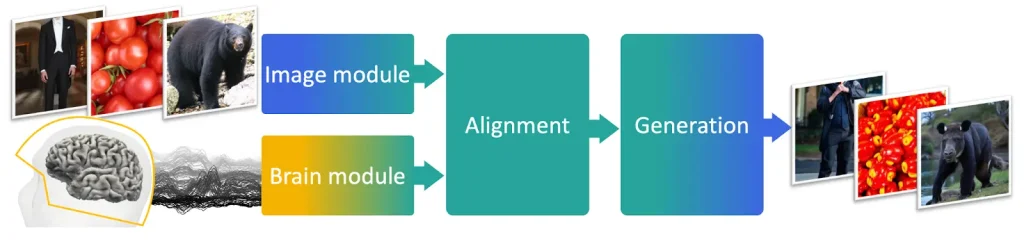

- The system has three parts: an image encoder (builds a rich set of representations of the image, independently of the brain), a brain encoder (learns to map MEG signals onto those image representations), and an image decoder (generates a plausible image from the brain-derived representations).

- The system was trained on a public dataset of MEG recordings released by THINGS, an international consortium of academic researchers.
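
The three-part design above can be sketched in toy form. Everything in this sketch is an assumption made for illustration, not Meta's implementation: the embeddings are random vectors, the MEG signals are simulated through a hypothetical `MIXING` matrix, the brain encoder is a plain linear map trained with SGD, and the image decoder is replaced by nearest-neighbor retrieval rather than a generative model.

```python
import math
import random

EMB_DIM = 4      # toy size of the image-embedding space (assumption)
N_SENSORS = 12   # toy number of MEG channels (assumption)

def image_encoder(image_id):
    """Stand-in for a pretrained image encoder: one fixed embedding per image."""
    rng = random.Random(image_id)
    return [rng.gauss(0.0, 1.0) for _ in range(EMB_DIM)]

# Hypothetical sensor mixing, used only to simulate MEG responses to an image.
_sim = random.Random(12345)
MIXING = [[_sim.gauss(0.0, 1.0) for _ in range(EMB_DIM)] for _ in range(N_SENSORS)]

def simulate_meg(image_id, noise=0.05):
    """Simulated MEG reading: a noisy linear mixture of the image embedding."""
    emb = image_encoder(image_id)
    return [sum(w * e for w, e in zip(row, emb)) + _sim.gauss(0.0, noise)
            for row in MIXING]

def train_brain_encoder(image_ids, epochs=300, lr=0.002):
    """Fit a linear map from MEG signals to image embeddings with plain SGD."""
    weights = [[0.0] * N_SENSORS for _ in range(EMB_DIM)]
    for _ in range(epochs):
        for image_id in image_ids:
            meg, target = simulate_meg(image_id), image_encoder(image_id)
            for d in range(EMB_DIM):
                error = sum(w * x for w, x in zip(weights[d], meg)) - target[d]
                weights[d] = [w - lr * error * x for w, x in zip(weights[d], meg)]
    return weights

def brain_encoder(weights, meg):
    """Map one MEG reading into the image-embedding space."""
    return [sum(w * x for w, x in zip(row, meg)) for row in weights]

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def image_decoder(predicted_embedding, candidate_ids):
    """Retrieval stand-in for a generative decoder: pick the closest candidate."""
    return max(candidate_ids,
               key=lambda i: cosine(predicted_embedding, image_encoder(i)))

train_ids = list(range(50))
test_ids = list(range(100, 110))   # images never seen during training
weights = train_brain_encoder(train_ids)
hits = sum(image_decoder(brain_encoder(weights, simulate_meg(i)), test_ids) == i
           for i in test_ids)
accuracy = hits / len(test_ids)
print(f"retrieval accuracy on held-out images: {accuracy:.2f}")
```

The point of the sketch is the data flow: only the brain encoder is trained, and it is trained to land in a space that the image encoder already defines. Swapping the retrieval step for a generative model would change the last stage without touching the first two.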

A fascinating find from the study: AI systems trained with self-supervised learning, like DINOv2, align closely with the brain's own representations. The artificial neurons in these models tend to respond to an image much as biological neurons do, as if the two “speak” a similar language when they see the same image.
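
To make "alignment" concrete, here is a minimal sketch of representational similarity analysis (RSA), one common way to compare two sets of responses to the same images. This is a swapped-in illustration: the article does not say which measure the study used, and the vectors below are made-up numbers, not real model or brain data.

```python
import math
from itertools import combinations

def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm

def dissimilarities(vectors):
    """Cosine distance between the responses to every pair of images."""
    return [cosine_distance(vectors[i], vectors[j])
            for i, j in combinations(range(len(vectors)), 2)]

def pearson(xs, ys):
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def rsa_score(model_vectors, brain_vectors):
    """Correlate the two systems' pairwise dissimilarity patterns."""
    return pearson(dissimilarities(model_vectors), dissimilarities(brain_vectors))

# Made-up model responses to four images (illustrative only).
model = [[1.0, 0.0, 0.2], [0.9, 0.1, 0.3], [0.0, 1.0, 0.1], [0.1, 0.2, 1.0]]
# Hypothetical "brain" responses: the same geometry with axes reordered and rescaled.
brain_aligned = [[2 * v[2], 2 * v[0], 2 * v[1]] for v in model]
# Hypothetical responses that group the images differently.
brain_unrelated = [[0.3, 1.0, 0.0], [0.0, 0.2, 1.0], [0.4, 0.9, 0.1], [1.0, 0.1, 0.0]]

print("aligned:  ", rsa_score(model, brain_aligned))
print("unrelated:", rsa_score(model, brain_unrelated))
```

A score near 1 means the two systems treat the same pairs of images as similar or different, even when their raw activations live in different units or coordinates, which is why the reordered and rescaled copy still scores as aligned.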

Yet, while the results are impressive, the system is not flawless. The reconstructed images preserve high-level attributes such as object category, but often get low-level details wrong. Images decoded from MEG are also less accurate than those decoded from fMRI, which is much slower but more spatially precise.

In essence, Meta has shown that MEG can be used to decode, with millisecond precision, the complex visual representations that arise in the brain. The work feeds into Meta’s long-term research effort to understand human intelligence and to identify where the brain and modern machine learning systems resemble each other and where they differ. It’s a step towards building AI systems that learn and reason more like humans.

Dive deeper and read the paper.

Why is this crucial?

Advances like this show how closely neuroscience and AI are converging, and how each can help explain human cognition. It’s not just about building smarter machines; it’s about rethinking therapeutic interventions and understanding our own intelligence.