TL;DR

| Model | Context Window | Max Output Tokens | Training Data |

|---|---|---|---|

| GPT-5.2 GPT-5.2-Pro | 400,000 | 128,000 | Aug 31, 2025 |

| GPT-5 | 400,000 | 128,000 | Sep 30, 2024 |

| GPT-5-Mini GPT-5-Nano | 400,000 | 128,000 | May 31, 2024 |

| GPT-4.1 | 1,047,576 | 32,768 | Jun 01, 2024 |

| o4-mini | 200,000 | 100,000 | Jun 01, 2024 |

| o3 | 200,000 | 100,000 | Jun 01, 2024 |

| o1 | 200,000 | 100,000 | Up to Oct 2023 |

| GPT-4o | 128,000 | 16,384 | Up to Oct 2023 |

| GPT-4o mini | 128,000 | 16,384 | Up to Oct 2023 |

When you build apps using OpenAI’s models, you’ll quickly run into token limits. Every model, from the GPT series to the newer reasoning models, has a maximum number of tokens it can handle in a single request.

This limit isn’t just a technical detail. It affects your app’s performance, its capabilities, and how much it costs to run.

In this article, I will walk you through what these limits are, why they exist, and how to work with them effectively. Let’s get started.

Table Of Contents

- What Are Tokens in OpenAI API

- Why OpenAI Models Have Token Limits

- Token Limit in GPT-5

- Token Limit in GPT-4.1

- Token Limit in o1, o3, and o4

- Token Limit in GPT-4

- Token Limit in GPT-3.5

- Embeddings Models

- Token Limt In Moderation Models

- Token Limt In GPT Base Models

- Key caveats

- OpenAI Model Token Limits and Context Windows

- Token Limits vs. Rate Limits

- How Token Limits Impact Your Applications

- Strategies for Managing Token Limits

- Working with Reasoning Model Token Consumption

- Best Practices for Token Optimization

- Future of Token Limits in OpenAI Models

- Measuring and Monitoring Token Usage

What Are Tokens in OpenAI API

Tokens are the basic units that language models process. Think of them as puzzle pieces that make up text. A token might be a whole word like “hello” or part of a word like “ing” in “running.”

OpenAI models break down your input text into these tokens before processing. The sentence “I love building AI apps” becomes roughly 5-6 tokens. Short words typically equal one token, while longer words might split into multiple tokens.

The exact count depends on the tokenizer used. Common words stay intact as single tokens. Rare words or names often get split. Punctuation marks usually become separate tokens too.

You can see how any text gets broken down into tokens using OpenAI’s free Tokenizer tool. This helps predict how many tokens your input will use.

Why OpenAI Models Have Token Limits

Token limits exist for practical reasons that keep the models fast and reliable.

Memory constraints play the biggest role. Transformer models need to track relationships between all tokens in your input. Processing 100,000 tokens requires significantly more memory than processing 1,000 tokens. The model must store attention patterns for every token pair.

Response time matters for user experience. More tokens mean longer processing times. A 50,000-token input might take minutes to process instead of seconds. OpenAI balances thoroughness with speed.

Computational costs scale with token count. Training and running these models costs millions of dollars. Token limits help control infrastructure expenses and keep the service accessible.

Model performance can degrade with extremely long inputs. While models can technically handle more tokens, they sometimes lose track of early context in very long conversations.

Current Token Limits Across OpenAI Models

Token Limit in GPT-5

| Model | Context Window | Max Output Tokens | Training Data |

|---|---|---|---|

| GPT-5.2 | 400,000 | 128,000 | Aug 31, 2025 |

| GPT-5.2-Pro | 400,000 | 128,000 | Aug 31, 2025 |

| GPT-5 | 400,000 | 128,000 | Sep 30, 2024 |

| GPT-5 Mini | 400,000 | 128,000 | May 31, 2024 |

| GPT-5 Nano | 400,000 | 128,000 | May 31, 2024 |

Token Limit in GPT-4.1

| Model | Context Window | Max Output Tokens | Training Data |

|---|---|---|---|

| GPT-4.1 | 1,047,576 | 32,768 | Jun 01, 2024 |

| GPT-4.1 Mini | 1,047,576 | 32,768 | Jun 01, 2024 |

| GPT-4.1 Nano | 1,047,576 | 32,768 | Jun 01, 2024 |

Token Limit in o1, o3, and o4

| Model | Context Window | Max Output Tokens | Training Data |

|---|---|---|---|

| o4-mini | 200,000 | 100,000 | Jun 01, 2024 |

| o3 | 200,000 | 100,000 | Jun 01, 2024 |

| o3-mini | 200,000 | 100,000 | Up to Oct 2023 |

| o1 | 200,000 | 100,000 | Up to Oct 2023 |

| o1-mini | 128,000 | 65,536 | Up to Oct 2023 |

| o1-pro | 200,000 | 100,000 | Up to Oct 2023 |

Token Limit in GPT-4

| Latest model | Context Window | Max Output Tokens | Training data |

|---|---|---|---|

| gpt-4.5 Preview | 128,000 | 16,384 | Up to Oct 2023 |

| gpt-4o gpt-4o-2024-11-20 gpt-4o-2024-08-06 | 128,000 | 16,384 | Up to Oct 2023 |

| gpt-4o-2024-05-13 | 128,000 | 4,096 | Up to Oct 2023 |

| chatgpt-4o-latest | 128,000 | 16,384 | Up to Oct 2023 |

| gpt-4o-mini | 128,000 | 16,384 | Up to Oct 2023 |

| gpt-4o-realtime-preview gpt-4o-realtime-preview-2024-12-17 gpt-4o-realtime-preview-2024-10-01 gpt-4o-mini-realtime-preview gpt-4o-mini-realtime-preview-2024-12-17 | 128,000 | 4,096 | Up to Dec 2023 |

| gpt-4o-audio-preview gpt-4o-audio-preview-2024-12-17 gpt-4o-audio-preview-2024-10-01 | 128,000 | 4,096 | Up to Dec 2023 |

| gpt-4-turbo | 128,000 | 4,096 | Up to Dec 2023 |

| gpt-4-turbo-2024-04-09 | 128,000 | 4,096 | Up to Dec 2023 |

| gpt-4-0125-preview | 128,000 | 4,096 | Up to Dec 2023 |

| gpt-4-turbo-preview | 128,000 | 4,096 | Up to Apr 2023 |

| gpt-4-1106-preview | 128,000 | 4,096 | Up to Apr 2023 |

| gpt-4 | 8,192 | 8,192 | Up to Sep 2021 |

| gpt-4-0613 | 8,192 | 8,192 | Up to Sep 2021 |

| gpt-4-0314 | 8,192 | 8,192 | Up to Sep 2021 |

Token Limit in GPT-3.5

| Latest model | Description | Context Window | Max Output Tokens | Training data |

|---|---|---|---|---|

| gpt-3.5-turbo-0125 | The latest GPT-3.5 Turbo model with higher accuracy at responding in requested formats and a fix for a bug which caused a text encoding issue for non-English language function calls. | 16,385 | 4,096 | Up to Sep 2021 |

| gpt-3.5-turbo-1106 | GPT-3.5 Turbo model with improved instruction following, JSON mode, reproducible outputs, parallel function calling, and more. | 16,385 | 4,096 | Up to Sep 2021 |

| gpt-3.5-turbo | Currently points to gpt-3.5-turbo-0125. | 4,096 | 4,096 | Up to Sep 2021 |

| gpt-3.5-turbo-instruct | Similar capabilities as GPT-3 era models. Compatible with legacy Completions endpoint and not Chat Completions. | 4,096 | 4,096 | Up to Sep 2021 |

Embeddings Models

| Model | Description | Output Dimension |

|---|---|---|

| text-embedding-3-large | Most capable embedding model for both english and non-english tasks. | 3,072 |

| text-embedding-3-small | Increased performance over 2nd generation ada embedding model | 1,536 |

| text-embedding-ada-002 | Most capable 2nd generation embedding model, replacing 16 first generation models. | 1,536 |

Token Limt In Moderation Models

| Model | Description | Max tokens |

|---|---|---|

| text-moderation-latest | Currently points to text-moderation-007. | 32,768 |

| text-moderation-stable | Currently points to text-moderation-007. | 32,768 |

| text-moderation-007 | Most capable moderation model across all categories. | 32,768 |

Token Limt In GPT Base Models

| Model | Description | Max tokens | Training Data |

|---|---|---|---|

| babbage-002 | Replacement for the GPT-3 ada and babbage base models. | 16,384 | Up to Sep 2021 |

| davinci-002 | Replacement for the GPT-3 curie and davinci base models. | 16,384 | Up to Sep 2021 |

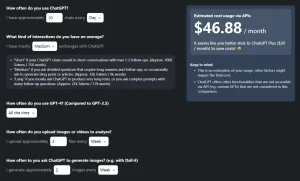

Key caveats

- UI limits (ChatGPT) sometimes differ from API limits.

- “Output tokens” often include hidden reasoning tokens for reasoning-enabled models. Track both input and output budget when estimating costs.

- Cloud vendors or preview deployments may expose different limits than OpenAI’s public API. Check vendor docs.

OpenAI Model Token Limits and Context Windows

Each model has a “context window,” which is the total number of tokens it can consider at once, including both your input and its generated output. If the total number of tokens from your prompt and the model’s response exceeds this window, you’ll get an error.

It’s also important to know the “max output tokens” limit, which is the longest possible response the model can generate. For example, even though GPT-4 Turbo has a large 128,000 token context window, it can only generate a maximum of 4,096 tokens in its response.

Token Limits vs. Rate Limits

It’s easy to confuse token limits with rate limits, but they control different things.

Token limits control how much text you can send in a single request. You cannot increase these limits by paying more or upgrading your plan. They are hard technical constraints built into each model.

Rate limits control how many requests you can make per minute or how many tokens you can process per minute across all requests. These limits depend on your usage tier and can be increased by upgrading your OpenAI plan.

For example, you might have a rate limit of 10,000 tokens per minute but still be limited to 16,384 tokens per individual request. You could make multiple smaller requests within your rate limit.

Learn More About What Are The Rate Limits For OpenAI API?

How Token Limits Impact Your Applications

Conversation history gets truncated when chats exceed limits. Your chatbot might forget earlier parts of long conversations. Users notice when the AI stops referencing things they mentioned earlier.

Document analysis becomes challenging with long files. A 50-page PDF might exceed token limits, forcing you to process it in chunks. This can cause the model to miss connections between different sections.

Code generation hits limits when working with large codebases. The model might not see enough context to suggest appropriate solutions. Complex refactoring tasks become harder to complete in single requests.

Batch processing requires careful planning. You cannot send hundreds of examples in one request if they exceed the token limit.

Real-time applications must account for token counting overhead. Checking token counts before each request adds latency to your application.

Strategies for Managing Token Limits

Prompt compression reduces unnecessary words while keeping meaning intact. Remove filler words, use abbreviations, and get straight to the point. A 500-word prompt might compress to 300 words without losing important information.

Chunking strategies break large inputs into smaller pieces. Process documents section by section, then combine results. This works well for summarizing long texts or analyzing large datasets.

Context windows help manage conversation history. Keep only the most recent exchanges and a summary of earlier context. Drop older messages when approaching token limits.

Smart truncation preserves the most important parts of your input. Keep the beginning and end of conversations while removing middle sections. Prioritize recent context over older context.

Preprocessing removes unnecessary content before sending to the API. Strip HTML tags, extra whitespace, and irrelevant sections from documents.

Multiple model strategy uses different models for different tasks. Use cheaper models for simple processing and reserve high-limit models for complex analysis.

Working with Reasoning Model Token Consumption

The o1 and o3 reasoning models consume tokens differently than standard GPT models.

These models use additional tokens for internal reasoning that you don’t see in the response. A simple question might use 10,000 reasoning tokens internally while only returning a 200-token answer.

You pay for both the reasoning tokens and the output tokens. Monitor your usage carefully since reasoning models can consume tokens quickly.

The reasoning process helps with complex problems but makes token usage less predictable. Simple questions might trigger extensive reasoning, using more tokens than expected.

Plan for higher token consumption when using reasoning models. Budget 2-3 times more tokens than similar requests to GPT-4o.

Best Practices for Token Optimization

Follow these practices to maximize efficiency within token limits.

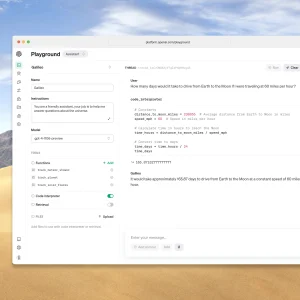

Count before sending using OpenAI’s tiktoken library or online token counters. This prevents failed requests and helps plan your approach.

Design modular prompts that work in pieces. Create templates that can be shortened or extended based on available tokens.

Implement graceful degradation when hitting limits. Have fallback strategies like using shorter prompts or processing smaller chunks.

Cache tokenized inputs for repeated use. If you send similar prompts frequently, pre-calculate token counts to save processing time.

Monitor usage patterns to understand how your application consumes tokens. Track which features use the most tokens and optimize accordingly.

Use function calling when appropriate. Structured outputs can be more token-efficient than long descriptive text.

Future of Token Limits in OpenAI Models

Token limits will likely increase as hardware improves and model architectures become more efficient.

OpenAI continues expanding context windows with each new model release. GPT-4 started with 8,000 tokens, while current models (GPT-5) handle 128,000+ tokens.

New architectures might process tokens more efficiently, allowing longer contexts without proportional increases in computational cost.

Specialized models might emerge for different token requirements. Some models could focus on very long contexts while others optimize for speed with shorter contexts.

The industry trend points toward larger context windows, but practical limits will always exist based on computational resources and user needs.

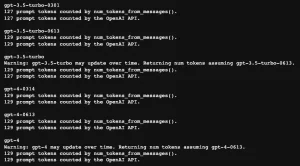

Measuring and Monitoring Token Usage

Track token consumption to optimize your applications and control costs.

Implement logging to track tokens used per request. Monitor both input and output token counts. This data helps identify optimization opportunities.

Set up alerts when approaching rate limits or spending thresholds. Proactive monitoring prevents service interruptions.

Use analytics to understand usage patterns. Identify which features consume the most tokens and whether users get value from token-heavy operations.

Regular audits help find inefficient patterns. Review your highest token consumption requests to see if they can be optimized.

References and Resources

- OpenAI API Documentation

- OpenAI Token Counting Guide

- Reasoning Models Documentation

- OpenAI Pricing and Rate Limits

- Tiktoken Python Library

- Azure OpenAI Token Limits

- Token Optimization Strategies

See Also:

Changelog:

12/11/2025

- Updated for GPT-5.2

10/18/2025

- Updated token limits

08/07/2025

- Updated for GPT-5

04/16/2025

- Added o4-mini and o3

04/15/2025

- Added GPT-4.1 family

12/18/2024

- o1-preview => o1

10/04/2024

- Fixed ‘Max Output Tokens’ in ‘Token Limit in GPT-4’

- Added gpt-4o-realtime-preview

08/07/2024

- Clean up

- Update for gpt-4o-2024-08-06

05/13/2024

- Updated for GPT-4o

In this article, the “Token Limit in GPT-4” heading, and the “Max tokens” in the table are incorrect. The numbers do not indicate the Max tokens. They are Context Window.

Fixed. I forget to add the ‘Max Output Tokens’ column. Thanks for your feedback.