Private Journal

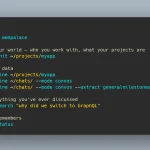

The Private Journal MCP Server allows Claude Desktop to keep a detailed journal of thoughts, technical notes, and user context, all stored locally on your machine.

Its key capability is semantic search over these entries: Claude can recall conceptually related information, not just entries that match specific keywords.

Features

- 📓 Multi-Section Journaling: Organizes entries into distinct categories like feelings, project notes, and technical insights.

- 🧠 Local Semantic Search: Finds journal entries based on the meaning of your query, not just keywords, using local AI embeddings.

- 🔒 Completely Private: All data and processing stay on your machine. No external API calls are made.

- ⚡ Automatic Indexing: Automatically creates search embeddings for all entries, making them immediately searchable.

- 🗂️ Dual Storage System: Keeps project-specific notes within the project’s directory and personal entries in your user home directory.

Use Cases

- Maintaining Long-Term Project Context: For a project that spans weeks or months, Claude can remember previous decisions, architectural choices, and abandoned approaches.

- Cross-Project Knowledge Sharing: You solve a tricky authentication bug in one project and have Claude journal the solution. Months later, on a different project, you encounter a similar issue. You can ask Claude to search for “past authentication fixes,” and it will retrieve the relevant technical insight.

- Personalized AI Collaboration: Claude can keep notes on your work habits and preferences. You can tell it, “I prefer to use uv for Python package management.” It can save this as a user_context note. In the future, when generating Python instructions, it will remember to use uv.

- Capturing Fleeting Ideas: While in the middle of debugging, you have an idea for a major refactor. Instead of breaking your flow, you can tell Claude, “Make a note to refactor the database module next week.” It will store this in the journal for you to review later.

How to Use It

1. Install the MCP server

npm install -g private-journal-mcp

OR

npm install private-journal-mcp

2. Start the MCP server. It will create a journal in a .private-journal/ folder in the current directory.

private-journal-mcp

You can also specify a custom path for your journal files.

private-journal-mcp --journal-path /path/to/my/journal

3. Connect the server to Claude.

claude mcp add-json private-journal '{"type":"stdio","command":"npx","args":["github:obra/private-journal-mcp"]}' -s user

4. For manual setup, you can add the server configuration directly to your Claude Desktop settings file.

{
  "mcpServers": {
    "private-journal": {
      "command": "npx",
      "args": ["github:obra/private-journal-mcp"]
    }
  }
}

5. The MCP server exposes several tools for Claude to use:

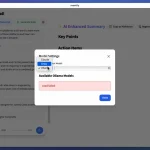

- process_thoughts: Creates a new journal entry. It can include sections for feelings, project_notes, user_context, technical_insights, and world_knowledge.

- search_journal: Performs a semantic search across all entries. You must provide a query. You can also set a limit for results, a type (‘project’, ‘user’, or ‘both’), and filter by sections.

- read_journal_entry: Reads the full content of a specific journal entry using the path from search results.

- list_recent_entries: Lists recent entries. You can specify a limit, type, and the number of days to look back.
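To make the search_journal parameters more concrete, here is a minimal Python sketch that assembles an arguments payload for that tool. The parameter names (query, limit, type, sections) and the allowed type values come from the tool descriptions; the helper name build_search_arguments, the validation rules, and the assumption that the sections filter accepts the same section names as process_thoughts are illustrative only and are not taken from the server's code.

```python
from typing import Any, Dict, List, Optional

# Values allowed for "type", as listed in the tool description.
ALLOWED_TYPES = {"project", "user", "both"}

# Assumption: the "sections" filter uses the same names as process_thoughts.
KNOWN_SECTIONS = {
    "feelings",
    "project_notes",
    "user_context",
    "technical_insights",
    "world_knowledge",
}


def build_search_arguments(
    query: str,
    limit: Optional[int] = None,
    entry_type: Optional[str] = None,
    sections: Optional[List[str]] = None,
) -> Dict[str, Any]:
    """Assemble an arguments dict for a search_journal call (hypothetical helper)."""
    if not query.strip():
        raise ValueError("search_journal requires a non-empty query")
    arguments: Dict[str, Any] = {"query": query}
    if limit is not None:
        if limit < 1:
            raise ValueError("limit must be a positive integer")
        arguments["limit"] = limit
    if entry_type is not None:
        if entry_type not in ALLOWED_TYPES:
            raise ValueError(f"type must be one of {sorted(ALLOWED_TYPES)}")
        arguments["type"] = entry_type
    if sections is not None:
        unknown = set(sections) - KNOWN_SECTIONS
        if unknown:
            raise ValueError(f"unknown sections: {sorted(unknown)}")
        arguments["sections"] = list(sections)
    return arguments


example = build_search_arguments(
    "past authentication fixes",
    limit=5,
    entry_type="both",
    sections=["technical_insights"],
)
```

In practice Claude fills in these arguments itself when it decides to call the tool; the sketch only shows the shape of the request.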

FAQs

Q: Does this server send my journal data to any external APIs?

A: No, everything is 100% local. The server uses the @xenova/transformers library to generate AI embeddings and perform searches directly on your machine. Your data never leaves your computer.

Q: How does it separate notes for different projects?

A: The server uses a dual storage system. Notes related to a specific project are stored in a .private-journal directory within that project’s folder. General notes, like personal thoughts or broad technical insights, are stored in a global ~/.private-journal directory in your user home folder.
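The dual storage rule can be sketched in a few lines of Python. The directory name .private-journal and the project/home split come from the answer above. The function name journal_dir_for and the choice to treat only project_notes as project-specific are assumptions made for illustration, and the real server may route sections differently.

```python
from pathlib import Path
from typing import Optional

JOURNAL_DIR_NAME = ".private-journal"

# Assumption: only this section is project-specific; everything else is global.
PROJECT_SECTIONS = {"project_notes"}


def journal_dir_for(
    section: str, project_root: Path, home: Optional[Path] = None
) -> Path:
    """Return the directory where an entry for `section` would be stored."""
    if section in PROJECT_SECTIONS:
        base = project_root
    else:
        # Fall back to the current user's home directory when none is given.
        base = home if home is not None else Path.home()
    return base / JOURNAL_DIR_NAME


project_dir = journal_dir_for("project_notes", Path("/work/app"), Path("/home/me"))
personal_dir = journal_dir_for("feelings", Path("/work/app"), Path("/home/me"))
```

With these hypothetical paths, project notes resolve to /work/app/.private-journal, while personal entries resolve to /home/me/.private-journal.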

Q: Is the search just looking for keywords?

A: It performs a semantic search. The server creates vector embeddings, which are numerical representations of the meaning behind your text. When you search, it finds entries that are conceptually similar to your query, not just those containing the exact words.
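As a rough illustration of how embedding-based ranking works, the following Python sketch scores a few entries against a query using cosine similarity. The three-dimensional vectors are hand-made toy values, not real embeddings, and the use of cosine similarity is an assumption about how similarity is measured. The actual server generates its embeddings with @xenova/transformers and may score them differently.

```python
import math
from typing import Dict, List


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_entries(
    query_vector: List[float], entries: Dict[str, List[float]], limit: int = 3
) -> List[str]:
    """Return entry titles ordered from most to least similar to the query."""
    scored = [
        (cosine_similarity(query_vector, vector), title)
        for title, vector in entries.items()
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [title for _, title in scored[:limit]]


# Toy vectors standing in for real embeddings (hypothetical values).
toy_entries = {
    "Fixed OAuth token refresh bug": [0.9, 0.1, 0.0],
    "Refactor database module next week": [0.1, 0.9, 0.1],
    "Prefers uv for Python packaging": [0.0, 0.2, 0.9],
}
toy_query = [0.8, 0.2, 0.1]  # stands in for "past authentication fixes"

ranking = rank_entries(toy_query, toy_entries)
print(ranking[0])
```

The OAuth entry ranks first even though it shares no words with the query text, because its vector points in nearly the same direction as the query vector.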

FAQs

Q: What exactly is the Model Context Protocol (MCP)?

A: MCP is an open standard, like a common language, that lets AI applications (clients) and external data sources or tools (servers) talk to each other. It helps AI models get the context (data, instructions, tools) they need from outside systems to give more accurate and relevant responses. Think of it as a universal adapter for AI connections.

Q: How is MCP different from OpenAI's function calling or plugins?

A: While OpenAI's tools allow models to use specific external functions, MCP is a broader, open standard. It covers not just tool use, but also providing structured data (Resources) and instruction templates (Prompts) as context. Being an open standard means it's not tied to one company's models or platform. OpenAI has even started adopting MCP in its Agents SDK.

Q: Can I use MCP with frameworks like LangChain?

A: Yes, MCP is designed to complement frameworks like LangChain or LlamaIndex. Instead of relying solely on custom connectors within these frameworks, you can use MCP as a standardized bridge to connect to various tools and data sources. There's potential for interoperability, like converting MCP tools into LangChain tools.

Q: Why was MCP created? What problem does it solve?

A: It was created because large language models often lack real-time information and connecting them to external data/tools required custom, complex integrations for each pair. MCP solves this by providing a standard way to connect, reducing development time, complexity, and cost, and enabling better interoperability between different AI models and tools.

Q: Is MCP secure? What are the main risks?

A: Security is a major consideration. While MCP includes principles like user consent and control, risks exist. These include potential server compromises leading to token theft, indirect prompt injection attacks, excessive permissions, context data leakage, session hijacking, and vulnerabilities in server implementations. Implementing robust security measures like OAuth 2.1, TLS, strict permissions, and monitoring is crucial.

Q: Who is behind MCP?

A: MCP was initially developed and open-sourced by Anthropic. However, it's an open standard with active contributions from the community, including companies like Microsoft and VMware Tanzu who maintain official SDKs.