Cloudflare

The Cloudflare MCP Server provides programmatic access to the complete Cloudflare API through the Model Context Protocol.

The official Cloudflare OpenAPI specification runs to roughly 2.3 million tokens as JSON, far more than an AI agent can load into its context window.

The MCP server implements a code execution pattern that keeps the massive API specification on the server side while returning only the relevant search results and API responses to the agent.
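The data flow behind this pattern can be pictured with a minimal sketch. It assumes a hypothetical `runOnServer` helper and a tiny stand-in for the specification. The real server runs agent code in isolated workers, which `new Function` does not provide, so this only illustrates what crosses the boundary.

```javascript
// Stand-in for the full OpenAPI document; the real one never leaves the server.
const spec = {
  paths: {
    "/accounts/{account_id}/workers/scripts": {
      get: { summary: "List Workers", tags: ["Workers"] },
    },
    "/zones/{zone_id}/dns_records": {
      get: { summary: "List DNS Records", tags: ["DNS"] },
      post: { summary: "Create DNS Record", tags: ["DNS"] },
    },
  },
};

// Hypothetical helper: evaluate agent-supplied code with the given bindings in
// scope and hand back only its return value. No sandboxing; illustration only.
async function runOnServer(code, bindings) {
  const names = Object.keys(bindings);
  const fn = new Function(...names, `return (${code})();`);
  return fn(...Object.values(bindings));
}

// The agent sends a short function as a string, never the spec itself.
const agentCode = `async () => Object.keys(spec.paths).filter(p => p.includes("dns"))`;

runOnServer(agentCode, { spec }).then((paths) => {
  // Only the matching paths travel back to the agent.
  console.log(paths);
});
```

The agent pays context only for the code it sends and the small array it gets back, while the specification stays wherever `spec` lives.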

Features

- 🔍 Intelligent Endpoint Search: Query the complete Cloudflare API specification using JavaScript code

- ⚡ Code Execution Pattern: Execute API calls through isolated worker environments that handle the request/response cycle server-side

- 🔑 Dual Token Support: Use either user-level tokens with multi-account access or account-scoped tokens with automatic account ID detection

- 📊 Response Truncation: Automatic truncation of API responses to 10,000 tokens to prevent context overflow

- 🌐 Complete Product Coverage: Access all Cloudflare products and services through a unified interface backed by official API schemas

- 💾 Minimal Context Usage: Search results typically consume around 500 tokens compared to 43,000+ tokens for endpoint summaries

How to Use It

Creating API Credentials

Generate a Cloudflare API token at https://dash.cloudflare.com/profile/api-tokens with the permissions your automation requires. The server accepts two token types with different scoping behaviors.

User tokens operate at the user level and grant access to multiple accounts. Each API call executed with a user token requires an explicit account_id parameter to identify which account the operation targets.

Account tokens scope to a single Cloudflare account. Include the Account Resources: Read permission when creating an account token so the server can auto-detect your account ID. API calls executed with account tokens do not require the account_id parameter since the token itself carries that information.
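The difference between the two token types can be sketched as a small resolver. This is an assumption about how auto-detection might work, not the server's actual code: `resolveAccountId` and `listAccounts` are hypothetical names, and `listAccounts` stands in for whatever call discovers the accounts a token can read.

```javascript
// Hypothetical resolver for the account ID used by an API call.
async function resolveAccountId({ accountId, listAccounts }) {
  if (accountId) return accountId; // an explicit account_id always wins
  const accounts = await listAccounts();
  if (accounts.length === 1) return accounts[0].id; // account-scoped token
  throw new Error(
    `Token can access ${accounts.length} accounts; pass account_id explicitly`
  );
}

// Stubs simulating what each token type would be able to see.
const listForAccountToken = async () => [{ id: "acc_single" }];
const listForUserToken = async () => [{ id: "acc_a" }, { id: "acc_b" }];

// Account token: the single visible account is picked automatically.
resolveAccountId({ listAccounts: listForAccountToken }).then(console.log);

// User token: works once account_id is supplied.
resolveAccountId({ accountId: "acc_b", listAccounts: listForUserToken }).then(console.log);

// User token without account_id: ambiguous, so the sketch refuses to guess.
resolveAccountId({ listAccounts: listForUserToken }).catch((e) => console.log(e.message));
```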

Configuring Claude Code

Set your Cloudflare API token as an environment variable in your shell:

    export CLOUDFLARE_API_TOKEN="your-token-here"

Add the server to Claude Code with the HTTP transport:

    claude mcp add --transport http cloudflare-api https://cloudflare-mcp.mattzcarey.workers.dev/mcp \
      --header "Authorization: Bearer $CLOUDFLARE_API_TOKEN"

The server authenticates all requests using the Bearer token in the Authorization header.

Configuring OpenCode

Export your API token to make it available to the configuration:

    export CLOUDFLARE_API_TOKEN="your-token-here"

Add this configuration block to your opencode.json file:

    {
      "mcp": {
        "cloudflare-api": {
          "type": "remote",
          "url": "https://cloudflare-mcp.mattzcarey.workers.dev/mcp",
          "headers": {
            "Authorization": "Bearer {env:CLOUDFLARE_API_TOKEN}"
          }
        }
      }
    }

The configuration references the environment variable through the {env:CLOUDFLARE_API_TOKEN} syntax.
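To make the placeholder syntax concrete, here is a rough sketch of how an `{env:NAME}` reference could be expanded into a header value. It is not OpenCode's implementation. `expandEnvPlaceholders` is a hypothetical helper, and throwing on a missing variable is a choice made for this sketch.

```javascript
// Replace each {env:NAME} placeholder with the matching value from `env`.
// Throws on a missing variable rather than producing an empty token.
function expandEnvPlaceholders(value, env) {
  return value.replace(/\{env:([A-Za-z_][A-Za-z0-9_]*)\}/g, (_, name) => {
    if (env[name] === undefined) {
      throw new Error(`Environment variable ${name} is not set`);
    }
    return env[name];
  });
}

const header = expandEnvPlaceholders("Bearer {env:CLOUDFLARE_API_TOKEN}", {
  CLOUDFLARE_API_TOKEN: "example-token",
});
console.log(header); // Bearer example-token
```

If the variable is not exported in the shell that launches the client, the Authorization header cannot be built, which is why the export step comes first.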

Available Tools

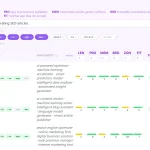

search: This tool accepts JavaScript code that queries the spec.paths object containing the complete OpenAPI specification. The code you provide runs on the server and returns matching endpoints with their HTTP methods, paths, and summaries. A typical search operation looks like this:

    search({
      code: `async () => {
        const results = [];
        for (const [path, methods] of Object.entries(spec.paths)) {
          for (const [method, op] of Object.entries(methods)) {
            if (op.tags?.some(t => t.toLowerCase() === 'workers')) {
              results.push({ method: method.toUpperCase(), path, summary: op.summary });
            }
          }
        }
        return results;
      }`,
    });

The search results contain only the endpoint metadata you need, not the entire specification.

execute: This tool accepts JavaScript code that calls cloudflare.request() with the endpoint details discovered through search. The code runs in an isolated worker environment with access to the Cloudflare API client. For user tokens, you must provide the account_id parameter. For account tokens, the server injects the account ID automatically.

User token execution example:

    execute({
      code: `async () => {
        const response = await cloudflare.request({
          method: "GET",
          path: \`/accounts/\${accountId}/workers/scripts\`
        });
        return response.result;
      }`,
      account_id: "your-account-id",
    });

Account token execution example:

    execute({
      code: `async () => {
        const response = await cloudflare.request({
          method: "GET",
          path: \`/accounts/\${accountId}/workers/scripts\`
        });
        return response.result;
      }`,
    });

The accountId variable gets populated automatically based on your token type and provided parameters.
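The function body used in the execute examples can be tried locally against a stub before it is sent to the server. The stub below is hypothetical and mirrors only the shape seen above: `request({ method, path })` resolving to an object with a `result` field. In this sketch `accountId` is passed as a parameter, whereas on the server it is a variable already in scope.

```javascript
// Hypothetical stand-in for the server-side client; records calls for inspection.
const cloudflare = {
  calls: [],
  async request({ method, path }) {
    this.calls.push(`${method} ${path}`);
    return { success: true, result: [{ id: "example-worker" }] };
  },
};

// Same logic as the `code` string passed to execute.
const listWorkers = async (accountId) => {
  const response = await cloudflare.request({
    method: "GET",
    path: `/accounts/${accountId}/workers/scripts`,
  });
  return response.result;
};

listWorkers("your-account-id").then((result) => {
  console.log(cloudflare.calls[0]); // GET /accounts/your-account-id/workers/scripts
  console.log(result);
});
```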

Working with the Agent

Once configured, you can interact with the server by giving natural language instructions to your agent. The agent translates these into appropriate search and execute calls.

List all Workers in your account:

    List all my Workers

Create a new KV namespace:

    Create a KV namespace called 'my-cache'

Add a DNS record:

    Add an A record for api.example.com pointing to 192.0.2.1

The agent handles the endpoint discovery and API call construction. You receive only the relevant response data without seeing the intermediate search operations or full API specification.
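The two steps the agent performs for a request such as the KV namespace prompt can be sketched as a search followed by an execute. Everything here runs against stand-ins: `kvSpec` is a one-entry sample, `stubClient` is hypothetical, and passing `body` to `request` is an assumption that the source does not confirm.

```javascript
// One-entry stand-in for spec.paths; the live specification is far larger.
const kvSpec = {
  paths: {
    "/accounts/{account_id}/storage/kv/namespaces": {
      get: { summary: "List Namespaces" },
      post: { summary: "Create a Namespace" },
    },
  },
};

// Step 1 (search): find the operation whose summary mentions the keyword.
function findEndpoint(paths, method, keyword) {
  for (const [path, methods] of Object.entries(paths)) {
    const op = methods[method];
    if (op && (op.summary ?? "").toLowerCase().includes(keyword)) {
      return { method: method.toUpperCase(), path };
    }
  }
  return null;
}

// Step 2 (execute): fill in the path parameter and send the request.
async function createNamespace(client, endpoint, accountId, title) {
  const path = endpoint.path.replace("{account_id}", accountId);
  const response = await client.request({
    method: endpoint.method,
    path,
    body: { title }, // assumed option name
  });
  return response.result;
}

// Stub client that echoes what it was asked to do.
const stubClient = {
  async request({ method, path, body }) {
    return { result: { method, path, title: body.title } };
  },
};

const endpoint = findEndpoint(kvSpec.paths, "post", "create");
createNamespace(stubClient, endpoint, "your-account-id", "my-cache").then(console.log);
```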

FAQs

Q: Can I use this server with multiple Cloudflare accounts?

A: Yes. User-level API tokens support multi-account access. You must provide the account_id parameter on each execute call to specify which account the operation targets. Account-level tokens restrict access to a single account but eliminate the need for account_id parameters since the token itself carries that scope.

Q: What happens if my API response exceeds 10,000 tokens?

A: The server truncates responses at 10,000 tokens and appends a truncation notice. Refine your API calls to request smaller data sets or add filtering parameters. For example, instead of listing all DNS records, filter by type or name pattern to reduce the result size.
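A truncation step of this kind might look roughly like the sketch below. The four-characters-per-token estimate and the wording of the notice are assumptions made for illustration. The server's actual token counting and notice format are not documented here.

```javascript
const CHARS_PER_TOKEN = 4; // rough heuristic, an assumption

// Serialize a response and cut it off at an approximate token budget.
function truncateResponse(data, maxTokens = 10000) {
  const text = JSON.stringify(data, null, 2) ?? "";
  const maxChars = maxTokens * CHARS_PER_TOKEN;
  if (text.length <= maxChars) return text; // small payloads pass through
  const estimatedTokens = Math.ceil(text.length / CHARS_PER_TOKEN);
  return (
    text.slice(0, maxChars) +
    `\n\n[truncated: about ${estimatedTokens} tokens, showing first ${maxTokens}]`
  );
}

const small = truncateResponse({ ok: true });
const large = truncateResponse({ records: "x".repeat(100000) });
console.log(small);
console.log(large.includes("[truncated"));
```

Because the cut happens on the serialized text, a truncated response is usually not valid JSON, which is one more reason to narrow the request instead of relying on truncation.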

Q: How do I find the right API endpoint for my task?

A: Use the search tool with JavaScript code that queries the spec.paths object. Filter by tags, path patterns, or operation summaries to narrow results. The agent typically handles this automatically when you describe your task in natural language, but you can also write explicit search queries if needed.
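The three filters mentioned here (tags, path patterns, summaries) can be sketched with one helper and a small sample. `searchSpec` and `sampleSpec` are hypothetical names used only for this illustration. In practice the same predicates would be written inline in the function passed as `code`. The method check is included because OpenAPI path items can also carry non-operation keys such as `parameters`.

```javascript
const HTTP_METHODS = new Set([
  "get", "put", "post", "delete", "patch", "head", "options", "trace",
]);

// Small sample in the shape of spec.paths.
const sampleSpec = {
  paths: {
    "/zones/{zone_id}/dns_records": {
      parameters: [],
      get: { summary: "List DNS Records", tags: ["DNS Records for a Zone"] },
      post: { summary: "Create DNS Record", tags: ["DNS Records for a Zone"] },
    },
    "/accounts/{account_id}/workers/scripts": {
      get: { summary: "List Workers", tags: ["Worker Script"] },
    },
  },
};

// Collect every operation for which `predicate` returns true.
function searchSpec(paths, predicate) {
  const results = [];
  for (const [path, item] of Object.entries(paths)) {
    for (const [method, op] of Object.entries(item)) {
      if (!HTTP_METHODS.has(method)) continue; // skip keys like "parameters"
      if (predicate({ path, method, op })) {
        results.push({ method: method.toUpperCase(), path, summary: op.summary });
      }
    }
  }
  return results;
}

const byPath = searchSpec(sampleSpec.paths, ({ path }) => /\/dns_records/.test(path));
const bySummary = searchSpec(sampleSpec.paths, ({ op }) => /list/i.test(op.summary ?? ""));
const byTag = searchSpec(sampleSpec.paths, ({ op }) =>
  (op.tags ?? []).some((t) => t.toLowerCase().includes("worker"))
);
console.log({ byPath, bySummary, byTag });
```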

Q: Does the server support all Cloudflare API operations?

A: Yes. The server uses the complete official Cloudflare API schemas covering Workers, KV, R2, D1, Pages, DNS, Firewall, Load Balancers, Stream, Images, AI Gateway, Vectorize, Access, Gateway, and all other products. Any operation documented in the Cloudflare API works through this server.

Q: Can I use this in production automation?

A: The server runs on Cloudflare Workers and handles isolated code execution for each request. Review your API token permissions carefully to limit access to only the operations your automation requires.

Q: What permissions does my API token need?

A: Token permissions depend on which Cloudflare resources you need to access. For account tokens, include Account Resources: Read so the server can auto-detect your account ID. Add specific permissions for each product you want to manage through the API.

FAQs

Q: What exactly is the Model Context Protocol (MCP)?

A: MCP is an open standard, like a common language, that lets AI applications (clients) and external data sources or tools (servers) talk to each other. It helps AI models get the context (data, instructions, tools) they need from outside systems to give more accurate and relevant responses. Think of it as a universal adapter for AI connections.
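As a concrete picture of that common language, MCP messages are JSON-RPC 2.0, and a client invokes a server tool with the `tools/call` method. The sketch below builds such a message for the `search` tool described earlier. `buildToolCall` is a hypothetical helper, and the request ID and code string are placeholders.

```javascript
// Build a JSON-RPC 2.0 request for MCP's tools/call method.
function buildToolCall(id, name, args) {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name, arguments: args },
  };
}

const message = buildToolCall(1, "search", {
  code: "async () => Object.keys(spec.paths).length",
});
console.log(JSON.stringify(message, null, 2));
```

MCP clients such as Claude Code or OpenCode construct and send these messages themselves, so this is only what travels over the wire, not something you need to write by hand.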

Q: How is MCP different from OpenAI's function calling or plugins?

A: While OpenAI's tools allow models to use specific external functions, MCP is a broader, open standard. It covers not just tool use, but also providing structured data (Resources) and instruction templates (Prompts) as context. Being an open standard means it's not tied to one company's models or platform. OpenAI has even started adopting MCP in its Agents SDK.

Q: Can I use MCP with frameworks like LangChain?

A: Yes, MCP is designed to complement frameworks like LangChain or LlamaIndex. Instead of relying solely on custom connectors within these frameworks, you can use MCP as a standardized bridge to connect to various tools and data sources. There's potential for interoperability, like converting MCP tools into LangChain tools.

Q: Why was MCP created? What problem does it solve?

A: It was created because large language models often lack real-time information and connecting them to external data/tools required custom, complex integrations for each pair. MCP solves this by providing a standard way to connect, reducing development time, complexity, and cost, and enabling better interoperability between different AI models and tools.

Q: Is MCP secure? What are the main risks?

A: Security is a major consideration. While MCP includes principles like user consent and control, risks exist. These include potential server compromises leading to token theft, indirect prompt injection attacks, excessive permissions, context data leakage, session hijacking, and vulnerabilities in server implementations. Implementing robust security measures like OAuth 2.1, TLS, strict permissions, and monitoring is crucial.

Q: Who is behind MCP?

A: MCP was initially developed and open-sourced by Anthropic. However, it's an open standard with active contributions from the community, including companies like Microsoft and VMware Tanzu who maintain official SDKs.