o3 Web Search

o3-search-mcp is an MCP server that connects Claude (or any other MCP client) to OpenAI’s o3 model to perform live web searches.

Features

- 🔍 AI-Enhanced Search Results – Leverages OpenAI’s o3 model for intelligent query processing and result ranking

- ⚙️ Configurable Context Size – Adjustable search context levels (low, medium, high) to match your processing requirements

- 🧠 Reasoning Effort Control – Tunable reasoning depth for balancing speed vs. thoroughness in search analysis

- 📦 Zero-Setup Installation – Available through npx for instant deployment without local dependencies

Use Cases

- Real-time Fact-Checking: You’re working on a document and need to verify a statistic or a recent event. Instead of breaking your flow to open a browser, you can ask Claude to use o3-search to get the latest information.

- Topical Research: You need a summary of recent developments in a specific field, like the latest features in a programming language or news about a financial market. This server lets Claude gather up-to-the-minute information that isn’t in its training data.

- Developer Troubleshooting: You’re stuck on a coding problem with a new library. You can ask Claude to search for recent solutions, documentation, or relevant GitHub issues, getting current answers that might not have existed when the model was last trained.

Using NPX (Recommended)

This is the quickest way to get started. You don’t need to download any code. Just run the following command in your terminal to add the server to Claude’s user-level configuration:

Claude Code:

$ claude mcp add o3 -s user \
    -e OPENAI_API_KEY=your-api-key \
    -e SEARCH_CONTEXT_SIZE=medium \
    -e REASONING_EFFORT=medium \
    -- npx o3-search-mcp

This command tells the Claude CLI to add a new MCP server named o3. The -s user flag saves this configuration for your user account. The -e flags set the necessary environment variables.

Alternatively, you can add the configuration directly to your MCP client’s JSON configuration file:

JSON Configuration:

{
  "mcpServers": {
    "o3-search": {
      "command": "npx",
      "args": ["o3-search-mcp"],
      "env": {
        "OPENAI_API_KEY": "your-api-key",
        "SEARCH_CONTEXT_SIZE": "medium",
        "REASONING_EFFORT": "medium"
      }
    }
  }
}

Local Development Setup

1. Clone and build the project:

git clone git@github.com:yoshiko-pg/o3-search-mcp.git

cd o3-search-mcp
pnpm install
pnpm build

2. Add the local server to Claude. Make sure to replace /path/to/o3-search-mcp/build/index.js with the actual path to the built file on your machine.

Claude Code:

$ claude mcp add o3 -s user \
    -e OPENAI_API_KEY=your-api-key \
    -e SEARCH_CONTEXT_SIZE=medium \
    -e REASONING_EFFORT=medium \
    -- node /path/to/o3-search-mcp/build/index.js

JSON Configuration:

{

"mcpServers": {

"o3-search": {

"command": "node",

"args": ["/path/to/o3-search-mcp/build/index.js"],

"env": {

"OPENAI_API_KEY": "your-api-key",

"SEARCH_CONTEXT_SIZE": "medium",

"REASONING_EFFORT": "medium"

}

}

}

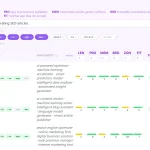

}Configuration Options

- OPENAI_API_KEY: (Required) Your secret API key from OpenAI.

- SEARCH_CONTEXT_SIZE: (Optional) Controls the amount of data retrieved. Accepts low, medium, or high. Defaults to medium.

- REASONING_EFFORT: (Optional) Determines how much computational effort the model uses. Accepts low, medium, or high. Higher values can lead to more accurate or detailed results but may take longer. Defaults to medium.
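To make the rules above concrete, here is a minimal TypeScript sketch of how a server could read and validate these three variables. It is not taken from the o3-search-mcp source: the names readConfig, parseLevel, and SearchConfig are hypothetical, and the only behavior it encodes is what the options above state (a required key, three accepted levels, and a default of medium).

```typescript
// Hypothetical helper, not the actual o3-search-mcp implementation.
type Level = "low" | "medium" | "high";

interface SearchConfig {
  apiKey: string;
  searchContextSize: Level;
  reasoningEffort: Level;
}

const LEVELS: readonly Level[] = ["low", "medium", "high"];

// Returns the documented default ("medium") when the variable is unset or empty,
// and rejects anything other than low, medium, or high.
function parseLevel(name: string, raw: string | undefined): Level {
  if (raw === undefined || raw === "") return "medium";
  const match = LEVELS.find((level) => level === raw);
  if (match === undefined) {
    throw new Error(`${name} must be low, medium, or high (got "${raw}")`);
  }
  return match;
}

// Takes an environment-like object so it can be exercised without touching the real environment.
function readConfig(env: Record<string, string | undefined>): SearchConfig {
  const apiKey = env["OPENAI_API_KEY"];
  if (!apiKey) {
    throw new Error("OPENAI_API_KEY is required");
  }
  return {
    apiKey,
    searchContextSize: parseLevel("SEARCH_CONTEXT_SIZE", env["SEARCH_CONTEXT_SIZE"]),
    reasoningEffort: parseLevel("REASONING_EFFORT", env["REASONING_EFFORT"]),
  };
}
```

In a Node process you would typically call readConfig(process.env) once at startup, so a missing key or a misspelled level fails immediately instead of surfacing during the first search.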

FAQs

Q: What’s the practical difference between SEARCH_CONTEXT_SIZE and REASONING_EFFORT?

A: Think of it this way: SEARCH_CONTEXT_SIZE is about the quantity of raw material the model gathers from the web. REASONING_EFFORT is about the quality of thinking it does with that material. A high context size with low reasoning might give you a lot of lightly processed information, while low context and high reasoning would involve deep analysis of a smaller set of data.
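One way to picture the split is to look at where each setting could end up in a request to OpenAI. The TypeScript sketch below only builds a request body and sends nothing. It assumes the server forwards the two settings to OpenAI’s Responses API as the web search tool’s search_context_size and the reasoning effort; that mapping, the web_search_preview tool type, and the buildSearchRequest helper are assumptions for illustration, not confirmed details of o3-search-mcp.

```typescript
// Illustrative only: the field layout is an assumption about how the settings are forwarded.
type Level = "low" | "medium" | "high";

interface SearchRequest {
  model: string;
  input: string;
  tools: { type: "web_search_preview"; search_context_size: Level }[];
  reasoning: { effort: Level };
}

function buildSearchRequest(
  query: string,
  searchContextSize: Level,
  reasoningEffort: Level,
): SearchRequest {
  return {
    model: "o3",
    input: query,
    // SEARCH_CONTEXT_SIZE: how much web material is gathered for the model.
    tools: [{ type: "web_search_preview", search_context_size: searchContextSize }],
    // REASONING_EFFORT: how much thinking the model does with that material.
    reasoning: { effort: reasoningEffort },
  };
}

// A lot of lightly processed material: high context, low reasoning.
const broadScan = buildSearchRequest("recent TypeScript release notes", "high", "low");

// Deep analysis of a smaller set of data: low context, high reasoning.
const deepDive = buildSearchRequest("recent TypeScript release notes", "low", "high");

console.log(JSON.stringify(broadScan, null, 2));
console.log(JSON.stringify(deepDive, null, 2));
```

The two settings live in separate parts of the request, which is why they can be tuned independently.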

Q: Do I need to install anything locally to use this MCP server?

A: No separate installation is needed: npx downloads and runs the package on demand. You do need Node.js (which provides npx), an OpenAI API key, and Claude Code or another MCP client configured as shown above.

Q: What’s the difference between the context size settings?

A: Low provides faster results with basic context, medium balances speed and depth, while high gives comprehensive analysis but takes longer to process.

Q: How does reasoning effort affect search quality?

A: Higher reasoning effort means the AI spends more time analyzing and connecting concepts in your query, leading to more nuanced and contextually appropriate results.

FAQs

Q: What exactly is the Model Context Protocol (MCP)?

A: MCP is an open standard, like a common language, that lets AI applications (clients) and external data sources or tools (servers) talk to each other. It helps AI models get the context (data, instructions, tools) they need from outside systems to give more accurate and relevant responses. Think of it as a universal adapter for AI connections.

Q: How is MCP different from OpenAI's function calling or plugins?

A: While OpenAI's tools allow models to use specific external functions, MCP is a broader, open standard. It covers not just tool use, but also providing structured data (Resources) and instruction templates (Prompts) as context. Being an open standard means it's not tied to one company's models or platform. OpenAI has even started adopting MCP in its Agents SDK.

Q: Can I use MCP with frameworks like LangChain?

A: Yes, MCP is designed to complement frameworks like LangChain or LlamaIndex. Instead of relying solely on custom connectors within these frameworks, you can use MCP as a standardized bridge to connect to various tools and data sources. There's potential for interoperability, like converting MCP tools into LangChain tools.

Q: Why was MCP created? What problem does it solve?

A: It was created because large language models often lack real-time information and connecting them to external data/tools required custom, complex integrations for each pair. MCP solves this by providing a standard way to connect, reducing development time, complexity, and cost, and enabling better interoperability between different AI models and tools.

Q: Is MCP secure? What are the main risks?

A: Security is a major consideration. While MCP includes principles like user consent and control, risks exist. These include potential server compromises leading to token theft, indirect prompt injection attacks, excessive permissions, context data leakage, session hijacking, and vulnerabilities in server implementations. Implementing robust security measures like OAuth 2.1, TLS, strict permissions, and monitoring is crucial.

Q: Who is behind MCP?

A: MCP was initially developed and open-sourced by Anthropic. However, it's an open standard with active contributions from the community, including companies like Microsoft and VMware Tanzu who maintain official SDKs.