Jina AI

The Jina AI Remote MCP Server gives developers direct access to Jina’s suite of AI APIs, such as the Reader API, Embeddings API, and Reranker API.

It handles the heavy lifting of converting web content into clean, structured data that LLMs can actually work with. No more wrestling with messy HTML or getting blocked by anti-scraping measures.

Features

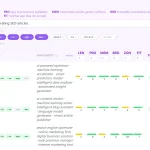

- 🔍 Web Content Extraction – Pull clean markdown content from any URL with read_url, stripping away navigation menus, ads, and other noise that clutters web pages
- 📸 Screenshot Capture – Generate high-quality screenshots of web pages through capture_screenshot_url without setting up your own browser automation
- 🌐 Web Search – Access current information via search_web, which returns the top results in an LLM-friendly format, with no need to parse search engine HTML
- 📚 Academic Research – Find relevant papers using search_arxiv, specifically designed for academic content
- 🖼️ Image Search – Locate relevant images across the web with search_images, similar to Google Images but programmatically accessible
- 🔄 Query Expansion – Improve search results with expand_query, which rewrites and diversifies your search terms using submodular optimization
- 🎯 Document Reranking – Boost search relevance with sort_by_relevance, which applies Jina’s world-class reranking model to your results
- 🧹 Content Deduplication – Eliminate redundant content using deduplicate_strings and deduplicate_images, which identify semantically similar items
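MCP clients invoke tools like these through JSON-RPC 2.0 `tools/call` requests. The following is a minimal sketch of how such a request body might be assembled. The tool name comes from the list above, but the argument name `url` is an assumption; the real input schema is whatever the server reports when the client lists its tools.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids should be unique within a session


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# "url" is a hypothetical argument name; check the server's tool schema.
request = build_tool_call("read_url", {"url": "https://example.com"})
print(json.dumps(request, indent=2))
```

In practice an MCP client library builds and sends these messages for you; the sketch only shows the shape of the payload.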

How To Use It

1. If your development environment supports remote MCP servers (like Cursor or LM Studio), add this configuration:

    {
      "mcpServers": {
        "jina-mcp-server": {
          "url": "https://mcp.jina.ai/sse",
          "headers": {
            "Authorization": "Bearer ${JINA_API_KEY}"
          }
        }
      }
    }

Replace ${JINA_API_KEY} with your free API key from jina.ai. For tools that don’t require authentication (like basic read_url), you can omit the Authorization header.
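Not every client expands `${JINA_API_KEY}` from the environment, so it can help to generate the configuration with the key already filled in. The sketch below assumes the key is stored in an environment variable named `JINA_API_KEY` and simply omits the Authorization header when no key is set; the helper name `remote_config` is hypothetical.

```python
import json
import os
from typing import Optional


def remote_config(api_key: Optional[str]) -> dict:
    """Return the remote MCP server config, adding auth only if a key exists."""
    server = {"url": "https://mcp.jina.ai/sse"}
    if api_key:
        server["headers"] = {"Authorization": f"Bearer {api_key}"}
    return {"mcpServers": {"jina-mcp-server": server}}


# Assumes the key, if any, lives in the JINA_API_KEY environment variable.
config = remote_config(os.environ.get("JINA_API_KEY"))
print(json.dumps(config, indent=2))
```

Paste the printed JSON into your client's MCP settings file; where that file lives depends on the client.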

2. If your environment doesn’t yet support remote MCP servers, use the mcp-remote proxy:

    {
      "mcpServers": {
        "jina-mcp-server": {
          "command": "npx",
          "args": [
            "mcp-remote",
            "https://mcp.jina.ai/sse",
            "--header",
            "Authorization: Bearer ${JINA_API_KEY}"
          ]
        }
      }
    }

This requires Node.js and npm installed on your system.
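Because the proxy is launched through `npx`, a quick preflight check can save a confusing startup failure. This is a small sketch that only looks for the executables on `PATH`; the helper name `missing_tools` is hypothetical, and the check says nothing about whether the installed versions are recent enough.

```python
import shutil
from typing import Iterable, List


def missing_tools(names: Iterable[str]) -> List[str]:
    """Return the executables from `names` that cannot be found on PATH."""
    return [name for name in names if shutil.which(name) is None]


missing = missing_tools(["node", "npm", "npx"])
if missing:
    print("Install Node.js and npm first; not found on PATH:", ", ".join(missing))
else:
    print("Node.js tooling found; the mcp-remote proxy can be launched with npx.")
```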

3. When using the tools, be aware of context window limitations. Some users report getting stuck in tool-calling loops when running thinking models with the default 4096-token context window.

The solution? Load your model with a larger context size to accommodate the full tool-calling chain and thought process.
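To see why 4096 tokens runs out quickly, a rough budget check helps. The sketch below assumes about four characters per token, which is only a common rule of thumb; real tokenizers vary by model and language. The function names and the 512-token reserve for the model's reply are illustrative choices, not part of any Jina or MCP API.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Crude token estimate based on character count (an approximation)."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(messages: list, context_window: int, reserve: int = 512) -> bool:
    """Check whether all messages plus a reply reserve fit in the window."""
    used = sum(estimate_tokens(m) for m in messages)
    return used + reserve <= context_window


# A single 20,000-character page returned by a tool call.
page = "x" * 20_000
print(estimate_tokens(page))           # 5001
print(fits_in_context([page], 4096))   # False
print(fits_in_context([page], 16384))  # True
```

Under these assumptions, one moderately long page already exceeds a 4096-token window before the model has produced any reasoning or made a second tool call.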

4. For developers who want to customize or self-host, Jina provides a GitHub repository you can clone and deploy:

    git clone https://github.com/jina-ai/MCP.git
    cd MCP
    npm install
    npm run start

5. You can also deploy directly to Cloudflare Workers with one click, creating your own instance at jina-mcp-server.<your-account>.workers.dev/sse.

FAQs

Q: Do I need an API key for all features?

A: No. Basic content extraction (read_url) and screenshot capture work without authentication but have lower rate limits. Web search, arXiv search, image search, query expansion, and deduplication tools require a Jina API key. You can get a free key with generous usage limits from their website.

Q: How does the deduplication actually work?

A: The deduplicate_strings and deduplicate_images tools use Jina’s Embeddings API to create vector representations of your content, then apply submodular optimization to select the most diverse yet relevant items. It’s smarter than simple string matching—it identifies semantically similar content even when wording differs.
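The general idea can be illustrated with a small greedy selection over embedding vectors. This is a sketch of one standard submodular objective (facility location), not Jina's actual implementation, and the toy two-dimensional vectors stand in for real embeddings that would come from an embeddings API. It assumes non-negative similarities.

```python
import math


def cosine(a, b):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def select_diverse(embeddings, k):
    """Greedily maximize f(S) = sum_i max_{j in S} sim(i, j)."""
    n = len(embeddings)
    sim = [[cosine(embeddings[i], embeddings[j]) for j in range(n)] for i in range(n)]
    selected = []
    best = [0.0] * n  # each item's best similarity to the selected set so far
    for _ in range(min(k, n)):
        # Marginal gain of adding candidate j to the selected set.
        gains = {
            j: sum(max(sim[i][j] - best[i], 0.0) for i in range(n))
            for j in range(n)
            if j not in selected
        }
        pick = max(gains, key=gains.get)
        selected.append(pick)
        best = [max(best[i], sim[i][pick]) for i in range(n)]
    return selected


# Items 0 and 1 are near-duplicates; item 2 is clearly different.
vectors = [[1.0, 0.0], [0.99, 0.1], [0.0, 1.0]]
picked = select_diverse(vectors, k=2)
print(picked)
```

With these toy vectors the selection keeps one of the two near-duplicates plus the distinct item, which is the behavior the answer above describes.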

Q: I’m hitting rate limits with the free tier. What are my options?

A: Free API keys come with 10 million tokens. If you exceed this, you can either wait for the next billing period (limits reset monthly) or upgrade to a premium key for higher limits. For production applications with heavy usage, contact Jina about enterprise plans.
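For a rough sense of how far that allowance goes, you can divide it by your typical usage per call. The 10 million figure is the one quoted above; the average of 5,000 tokens per request is purely an assumed example value, so substitute numbers measured from your own workload.

```python
FREE_TOKENS = 10_000_000  # allowance quoted in the answer above


def requests_covered(avg_tokens_per_request: int, budget: int = FREE_TOKENS) -> int:
    """Number of whole requests a token budget covers at a given average size."""
    if avg_tokens_per_request <= 0:
        raise ValueError("avg_tokens_per_request must be positive")
    return budget // avg_tokens_per_request


# Assumed average of 5,000 tokens per request (hypothetical).
print(requests_covered(5_000))  # 2000
```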

Q: Why do I get stuck in tool-calling loops sometimes?

A: This happens when your model’s context window fills up during extended tool usage. Thinking models like gpt-oss-120b generate many intermediate steps. Increase your context window size—4096 is often too small for complex tool chains. Set it to at least 8K or 16K for smoother operation.

Q: How does this compare to building my own scraping solution?

A: You’d need to handle browser automation, proxy rotation, anti-bot bypass, HTML parsing, and content extraction—plus ongoing maintenance as websites change. This server handles all that infrastructure for you, with better results and less development time. The token cost often works out cheaper than the engineering hours required.

FAQs About MCP

Q: What exactly is the Model Context Protocol (MCP)?

A: MCP is an open standard, like a common language, that lets AI applications (clients) and external data sources or tools (servers) talk to each other. It helps AI models get the context (data, instructions, tools) they need from outside systems to give more accurate and relevant responses. Think of it as a universal adapter for AI connections.

Q: How is MCP different from OpenAI's function calling or plugins?

A: While OpenAI's tools allow models to use specific external functions, MCP is a broader, open standard. It covers not just tool use, but also providing structured data (Resources) and instruction templates (Prompts) as context. Being an open standard means it's not tied to one company's models or platform. OpenAI has even started adopting MCP in its Agents SDK.

Q: Can I use MCP with frameworks like LangChain?

A: Yes, MCP is designed to complement frameworks like LangChain or LlamaIndex. Instead of relying solely on custom connectors within these frameworks, you can use MCP as a standardized bridge to connect to various tools and data sources. There's potential for interoperability, like converting MCP tools into LangChain tools.

Q: Why was MCP created? What problem does it solve?

A: It was created because large language models often lack real-time information and connecting them to external data/tools required custom, complex integrations for each pair. MCP solves this by providing a standard way to connect, reducing development time, complexity, and cost, and enabling better interoperability between different AI models and tools.

Q: Is MCP secure? What are the main risks?

A: Security is a major consideration. While MCP includes principles like user consent and control, risks exist. These include potential server compromises leading to token theft, indirect prompt injection attacks, excessive permissions, context data leakage, session hijacking, and vulnerabilities in server implementations. Implementing robust security measures like OAuth 2.1, TLS, strict permissions, and monitoring is crucial.

Q: Who is behind MCP?

A: MCP was initially developed and open-sourced by Anthropic. However, it's an open standard with active contributions from the community, including companies like Microsoft and VMware Tanzu who maintain official SDKs.