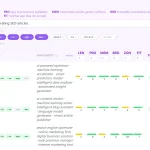

Context Optimizer

AI assistants have a finite context window. When you flood them with hundreds of lines of terminal output or a massive file, they start to buckle: they either truncate the history, or their reasoning quality takes a nosedive. This is where the Context Optimizer MCP Server comes in.

The Context Optimizer MCP Server acts as a smart filter for your AI assistant. Instead of dumping entire files or logs into the chat, this server gives the assistant tools to pull out only the specific information it needs.

Features

- 🔍 Precise File Analysis: The `askAboutFile` tool lets your assistant ask specific questions about a file, like “Does this file contain a function named ‘calculate_pi’?”, without needing to read the whole thing.
- 🖥️ Smart Terminal Execution: With `runAndExtract`, you can run a command and have an LLM analyze the output to pull out only the relevant bits. No more context window flooding from verbose logs.
- ❓ Intelligent Follow-ups: The `askFollowUp` tool maintains the context of previous terminal commands, so you can ask subsequent questions without re-running anything.
- 🔬 Powerful Web Research: It integrates with Exa.ai, giving your assistant `researchTopic` and `deepResearch` tools to conduct focused web searches for up-to-date information.
- 🔧 Broad Compatibility: It works with multiple LLMs, including Google Gemini, Claude, and OpenAI models.
- 🔒 Built-in Security: Includes essential security controls like path validation and command filtering to keep things safe.
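To make the security bullet a little more concrete, here is a minimal sketch of what path validation and command filtering can look like in general. It is not the server's actual implementation: the function names, the allow-list, and the deny-list are all hypothetical, and the real server may apply different or stricter rules.

```python
import shlex
from pathlib import Path

# Hypothetical allow-list; the real server reads CONTEXT_OPT_ALLOWED_PATHS.
ALLOWED_ROOTS = [Path("/path/to/your/projects")]

# Hypothetical deny-list; the server's actual filtering rules may differ.
BLOCKED_COMMANDS = {"rm", "sudo", "shutdown"}


def is_path_allowed(candidate: str, roots=ALLOWED_ROOTS) -> bool:
    """Return True only if candidate resolves to a location under an allowed root."""
    # resolve() normalizes ".." segments, so traversal tricks are caught.
    resolved = Path(candidate).resolve()
    return any(resolved.is_relative_to(root.resolve()) for root in roots)


def is_command_allowed(command: str, blocked=BLOCKED_COMMANDS) -> bool:
    """Reject empty commands and commands whose first word is on the deny-list."""
    parts = shlex.split(command)
    return bool(parts) and parts[0] not in blocked
```

Comparing resolved path components (rather than raw string prefixes) means a sibling directory such as `/path/to/your/projects-evil` is not mistaken for a subdirectory of the allowed root.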

Use Cases

- Debugging CI/CD Pipelines: Instead of pasting a massive, failed build log into your chat, you can use `runAndExtract` to have the assistant pinpoint the exact error message and suggest a fix.
- Auditing Large Codebases: When you need to know if a specific dependency or deprecated function exists across dozens of files, `askAboutFile` can check for you without you having to open each one or send the entire codebase to the AI.
- Learning a New Framework: You can use the `researchTopic` tool to get a quick summary of current best practices for a new technology, complete with citations, directly in your coding environment.
- Analyzing Command-Line Tool Output: If you’re working with a CLI tool that produces a lot of output (like `git diff` or `npm list`), `runAndExtract` can parse it and give you just the information you asked for, like a list of changed files or a specific package version.
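The run-then-extract idea behind several of these use cases can be sketched in a few lines. This is only an illustration of the pattern, not how the server works internally: `run_command` and `extract_relevant` are hypothetical names, and a simple keyword filter stands in for the LLM analysis step so the example stays self-contained.

```python
import subprocess
import sys


def run_command(args: list[str]) -> str:
    """Run a command and return its stdout and stderr as one string."""
    result = subprocess.run(args, capture_output=True, text=True, check=False)
    return result.stdout + result.stderr


def extract_relevant(output: str, keywords: list[str]) -> list[str]:
    """Stand-in for the LLM step: keep only lines that mention a keyword."""
    lowered = [k.lower() for k in keywords]
    return [
        line for line in output.splitlines()
        if any(k in line.lower() for k in lowered)
    ]


if __name__ == "__main__":
    # Simulate a noisy build log using the current Python interpreter.
    noisy = run_command([
        sys.executable, "-c",
        "print('step 1 ok'); print('step 2 ok'); print('ERROR: missing module foo')",
    ])
    # Only the filtered lines would be handed back to the assistant.
    print(extract_relevant(noisy, ["error"]))
```

The point of the pattern is that the full output never enters the chat; only the small extracted slice does.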

How to Use It

1. Install the server globally using npm:

       npm install -g context-optimizer-mcp-server

2. Set up environment variables. The server needs to know which LLM provider you’re using and the corresponding API key. It also requires an Exa.ai API key for the research tools and a list of allowed paths for security.

   For example, on macOS or Linux, you would add this to your .zshrc or .bashrc file:

       export CONTEXT_OPT_LLM_PROVIDER="gemini"
       export CONTEXT_OPT_GEMINI_KEY="your-gemini-api-key"
       export CONTEXT_OPT_EXA_KEY="your-exa-api-key"
       export CONTEXT_OPT_ALLOWED_PATHS="/path/to/your/projects"

3. Add the server to your MCP client’s configuration file. For an AI assistant like Claude Desktop, you would edit the claude_desktop_config.json file. For VS Code, you’d modify .vscode/mcp.json.

       "context-optimizer": {
         "command": "context-optimizer-mcp"
       }

4. Available tools:

   - `deepResearch`: Performs in-depth, comprehensive research using Exa.ai for more complex planning and critical architectural decisions.
   - `askAboutFile`: Asks specific questions about a file’s contents without loading the entire file into the chat. Use it to check for functions, read imports, or understand a file’s purpose.
   - `runAndExtract`: Runs a terminal command and uses an LLM to pull out only the important information from the output, keeping your context clean.
   - `askFollowUp`: Lets you ask more questions about the results of a previous `runAndExtract` command without having to run it again.
   - `researchTopic`: Conducts a quick and focused web search using Exa.ai to get up-to-date answers on development topics.
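Before wiring the server into a client, it can help to confirm that the environment variables from step 2 are actually set in your shell. The sketch below is a hypothetical preflight check, not something shipped with the server. The variable names come from the setup steps; the assumption that `CONTEXT_OPT_GEMINI_KEY` is only needed when the provider is `gemini` is a guess based on the example, and key names for other providers are not covered because the steps above don't list them.

```python
import os

# Names taken from the setup steps above.
REQUIRED_VARS = [
    "CONTEXT_OPT_LLM_PROVIDER",
    "CONTEXT_OPT_EXA_KEY",
    "CONTEXT_OPT_ALLOWED_PATHS",
]


def missing_vars(env: dict[str, str]) -> list[str]:
    """Return the names of required variables that are unset or empty."""
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    # Assumption: the Gemini key is only required when the provider is "gemini".
    if env.get("CONTEXT_OPT_LLM_PROVIDER") == "gemini" and not env.get("CONTEXT_OPT_GEMINI_KEY"):
        missing.append("CONTEXT_OPT_GEMINI_KEY")
    return missing


if __name__ == "__main__":
    problems = missing_vars(dict(os.environ))
    print("All set" if not problems else f"Missing: {', '.join(problems)}")
```

Run it in a fresh terminal after editing your .zshrc or .bashrc; if anything is reported missing, the shell profile probably hasn't been reloaded yet.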

FAQs

Q: Do I need a powerful machine to run this?

A: No, the server itself is lightweight. The heavy lifting (LLM analysis) is done by the API of the provider you configure (like Gemini, Claude, or OpenAI), so it doesn’t strain your local machine.

Q: What’s the difference between researchTopic and deepResearch?

A: researchTopic is for quick, focused questions to get up-to-date information or best practices. deepResearch uses Exa.ai’s more exhaustive capabilities for more complex, strategic research where you need a comprehensive analysis.

Q: Can I use this with any AI assistant?

A: It works with any AI assistant that supports the Model Context Protocol (MCP). This includes popular tools like Cursor, Claude Desktop, and GitHub Copilot (when used within an MCP-compatible environment like VS Code).
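Different MCP clients tend to wrap the same server entry under different top-level keys, so here is a small sketch that generates a complete config document from the entry shown in the setup steps. The helper names are hypothetical, and the key names (`mcpServers` for Claude Desktop, `servers` for VS Code's .vscode/mcp.json) are assumptions you should confirm against your client's own documentation.

```python
import json


def server_entry() -> dict:
    """The server entry exactly as given in the setup steps."""
    return {"context-optimizer": {"command": "context-optimizer-mcp"}}


def build_config(top_level_key: str) -> str:
    """Wrap the entry under a client-specific top-level key (assumed per client)."""
    return json.dumps({top_level_key: server_entry()}, indent=2)


if __name__ == "__main__":
    # Assumption: Claude Desktop reads servers from "mcpServers".
    print(build_config("mcpServers"))
    # Assumption: VS Code's .vscode/mcp.json reads servers from "servers".
    print(build_config("servers"))
```

If your config file already lists other servers, merge the `context-optimizer` entry into the existing map rather than overwriting the file.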

FAQs

Q: What exactly is the Model Context Protocol (MCP)?

A: MCP is an open standard, like a common language, that lets AI applications (clients) and external data sources or tools (servers) talk to each other. It helps AI models get the context (data, instructions, tools) they need from outside systems to give more accurate and relevant responses. Think of it as a universal adapter for AI connections.
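For a sense of what that "common language" looks like on the wire, MCP messages are JSON-RPC 2.0, and a client invokes a server tool with the `tools/call` method. The sketch below builds such a request in Python. The helper name is hypothetical, and the argument names `filePath` and `question` are illustrative guesses; a server advertises its real argument schema when the client lists its tools.

```python
import json
from itertools import count

# Simple incrementing request ids, as JSON-RPC expects each request to carry one.
_ids = count(1)


def tool_call_request(tool: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request that asks an MCP server to run a tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


if __name__ == "__main__":
    # "filePath" and "question" are hypothetical argument names.
    print(tool_call_request("askAboutFile", {
        "filePath": "/path/to/your/projects/app.py",
        "question": "Does this file contain a function named 'calculate_pi'?",
    }))
```

In practice your MCP client builds and sends these messages for you; the sketch is only meant to show the shape of the exchange.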

Q: How is MCP different from OpenAI's function calling or plugins?

A: While OpenAI's tools allow models to use specific external functions, MCP is a broader, open standard. It covers not just tool use, but also providing structured data (Resources) and instruction templates (Prompts) as context. Being an open standard means it's not tied to one company's models or platform. OpenAI has even started adopting MCP in its Agents SDK.

Q: Can I use MCP with frameworks like LangChain?

A: Yes, MCP is designed to complement frameworks like LangChain or LlamaIndex. Instead of relying solely on custom connectors within these frameworks, you can use MCP as a standardized bridge to connect to various tools and data sources. There's potential for interoperability, like converting MCP tools into LangChain tools.

Q: Why was MCP created? What problem does it solve?

A: It was created because large language models often lack real-time information and connecting them to external data/tools required custom, complex integrations for each pair. MCP solves this by providing a standard way to connect, reducing development time, complexity, and cost, and enabling better interoperability between different AI models and tools.

Q: Is MCP secure? What are the main risks?

A: Security is a major consideration. While MCP includes principles like user consent and control, risks exist. These include potential server compromises leading to token theft, indirect prompt injection attacks, excessive permissions, context data leakage, session hijacking, and vulnerabilities in server implementations. Implementing robust security measures like OAuth 2.1, TLS, strict permissions, and monitoring is crucial.

Q: Who is behind MCP?

A: MCP was initially developed and open-sourced by Anthropic. However, it's an open standard with active contributions from the community, including companies like Microsoft and VMware Tanzu who maintain official SDKs.